Last Updated On 09/05/2025

Human Scalability of DevOps? What is that exactly?

While DevOps can work extremely well for small engineering organizations, the practice can lead to considerable human and organizational scaling issues without careful thought and management. Let us start with the definition of DevOps.

The term DevOps means different things to different people. Before we dive into our thinking on the subject, it is important to be clear about what DevOps means to most people.

Wikipedia defines DevOps as:

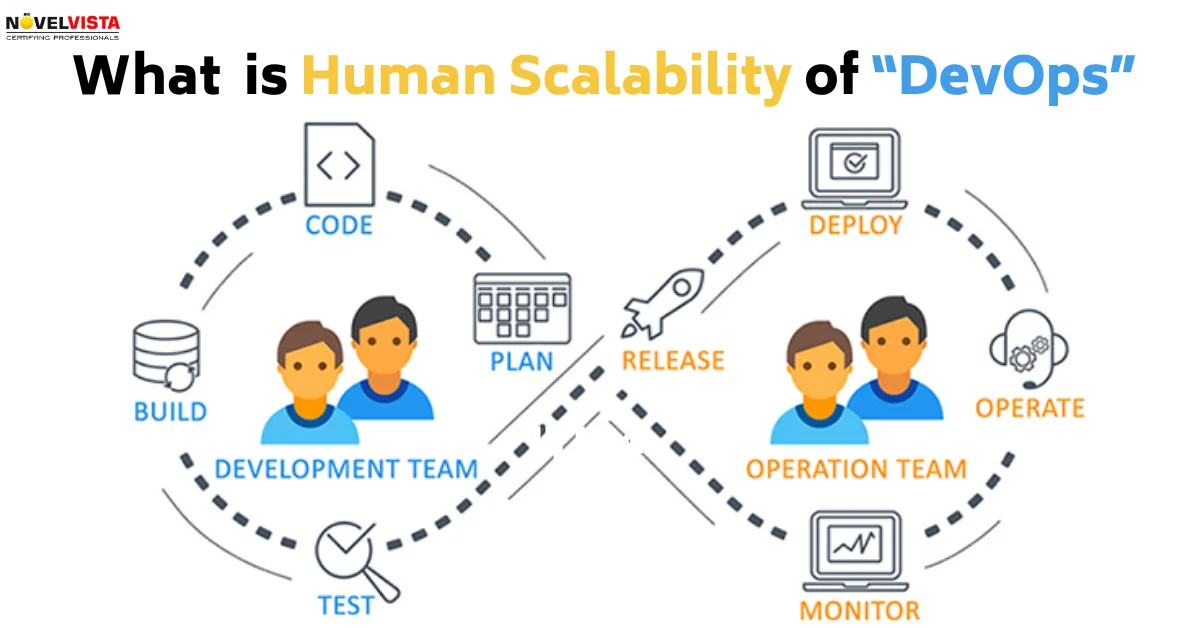

“DevOps (a clipped compound of “development” and “operations”) is a software engineering culture and practice that aims at unifying software development (Dev) and software operation (Ops). The main characteristic of the DevOps movement is to strongly advocate automation and monitoring at all steps of software construction, from integration, testing, releasing to deployment and infrastructure management. DevOps aims at shorter development cycles, increased deployment frequency, and more dependable releases, in close alignment with business objectives.”

We use a narrower and more operational definition: DevOps is the practice of developers being responsible for operating their services in production, 24/7. This includes development using shared infrastructure primitives, testing, on-call, reliability engineering, disaster recovery, defining SLOs, monitoring setup and alarming, debugging and performance analysis, incident root cause analysis, provisioning, deployment, and so on.

The distinction between the Wikipedia definition and our definition (a development philosophy versus an operational strategy) is significant, and it is shaped by the individual industry experience of different DevOps specialists. Part of the DevOps "movement" is to introduce slow-moving "legacy" enterprises to the benefits of modern, highly automated infrastructure and development practices. These include things like: loosely coupled services, APIs, and teams; continuous integration; small iterative deployments from master; Agile communication and planning; cloud-native elastic infrastructure; and so forth.
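Of the responsibilities listed in the operational definition above, "defining SLOs" is one of the easiest to make concrete. The following is a minimal sketch, not a prescribed method: it assumes a request-based availability SLO, and the names `Slo`, `error_budget`, and `budget_remaining`, the service name, and the example numbers are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Slo:
    """A hypothetical availability SLO for one service."""
    name: str
    target: float          # e.g. 0.999 means 99.9% of requests must succeed
    window_days: int = 30  # length of the evaluation window (informational)


def error_budget(slo: Slo, total_requests: int) -> int:
    """Number of failed requests the SLO tolerates for this request volume."""
    return round(total_requests * (1 - slo.target))


def budget_remaining(slo: Slo, total_requests: int, failed_requests: int) -> float:
    """Fraction of the error budget still unspent (negative if overspent)."""
    budget = error_budget(slo, total_requests)
    if budget == 0:
        raise ValueError("this request volume leaves no error budget")
    return 1 - failed_requests / budget


if __name__ == "__main__":
    # Illustrative numbers only: one million requests in the window, 250 failures.
    checkout = Slo(name="checkout-api", target=0.999)
    print(error_budget(checkout, 1_000_000))
    print(budget_remaining(checkout, 1_000_000, 250))
```

A team on call for its own service might use a figure like the remaining budget to decide whether to keep shipping features or to pause and invest in reliability, which is exactly the trade-off the rest of this post is concerned with.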

“For the last 10 years of my career, I have worked at hyper-growth Internet companies including AWS EC2, pre-IPO Twitter, and Lyft. Additionally, primarily due to creating and talking about Envoy, I’ve spent the last two years meeting with and learning about the technical architectures and organizational structures of myriad primarily hyper-growth Internet companies. For all of these companies, embracing automation, agile development/planning, and other DevOps “best practices” is a given as the productivity improvements are well understood. Instead, the challenge for these engineering organizations is how to balance system reliability against the extreme pressures of business growth, personnel growth, and competition.”

Over roughly the past thirty years of what can be called the modern Internet era, Internet application development and operation have gone through three distinct stages.

The movement towards not hiring dedicated operations staff in stage three companies is critically important. Clearly, such a company does not need full-time system administrators to manage machines in a colocation facility. However, the kind of person who would previously have filled such a job also typically provided other 20% skills, such as system debugging, performance profiling, and operational intuition. New companies are being built with a workforce that lacks critical, not easily replaceable, skillsets.

DevOps works extremely well for modern Internet startups for a couple of different reasons:

Most startups fail. That is the reality. As such, any early startup that spends a lot of time creating infrastructure in the image of Google is simply wasting time. We generally advise people to stick with their monolithic architecture and not worry about anything else until human scalability issues (communication, planning, tight coupling, and so forth) require a move towards a more decoupled architecture.

So what happens when a modern (stage three) Internet startup finds success and enters hyper-growth? A couple of interesting things start happening at the same time:

Almost universally, following the early startup stage, modern Internet hyper-growth companies end up structuring their engineering organizations similarly. This common structure consists of a central infrastructure team supporting a set of vertical product teams practicing DevOps (whether they call it that or not).

The reason the central infrastructure team is so common is that, as discussed above, hyper-growth brings with it an associated set of changes, both in people and in underlying technology. In reality, state-of-the-art cloud-native technology is still too hard to use if every product engineering team has to individually solve common problems around networking, observability, deployment, provisioning, caching, data storage, and so on. As an industry, we are several years away from "serverless" technologies being robust enough to fully support highly reliable, large-scale, realtime Internet applications in which the entire engineering organization can largely focus on business logic.

Thus, the central infrastructure team is born to solve problems for the larger engineering organization above and beyond what the base cloud-native infrastructure primitives provide. Clearly, Google's infrastructure team is orders of magnitude larger than that of a company like Lyft, because Google is solving foundational problems starting at the data center level, while Lyft relies on a substantial number of publicly available primitives. However, the underlying reasons for creating a central infrastructure organization are the same in both cases: abstract as much infrastructure as possible so that application and product developers can focus on business logic.

Finally, we arrive at the idea of "fungibility," which is the crux of the failure of the pure DevOps model when organizations scale beyond a certain number of engineers. Fungibility is the idea that all engineers are created equal and can do all things. Whether stated as an explicit hiring goal (as at least Amazon does, and perhaps others) or made evident by "bootcamp"-like hiring practices in which engineers are hired without a team or role in mind, fungibility has become a popular component of modern engineering philosophy over the last 10–15 years at many companies. Why is that?

However, this idea taken to its extreme, as many newer Internet startups have done, has resulted in only generalist software engineers being hired, with the expectation that these (DevOps) engineers can handle development, QA, and operations.

Fungibility and generalist hiring typically work fine for early startups. However, beyond a certain size, the idea that all engineers are swappable becomes almost absurd for the following reasons:

Ironically and hypocritically, organizations such as Amazon and Facebook prioritize fungibility in software engineering roles but clearly value the split (yet still overlapping) skillsets of development and operations by continuing to offer different career paths for each.

How and at what organization size does pure DevOps break down? What goes wrong?

At a certain engineering organization size, the wheels start falling off the bus and the organization begins to have human scaling issues with a pure DevOps model supported by a central infrastructure team. We would argue that this size depends on the current maturity of public cloud-native technology and, as of this writing, is somewhere in the low hundreds of total engineers.

For companies older than around 10 years, the site reliability or production engineering model has become common. Although implementation varies across companies, the idea is to employ engineers who can focus entirely on reliability engineering while not being beholden to product managers. Some of the implementation details are very relevant, however, and these include:

The success of the program and its impact on the broader engineering organization often depend on the answers to the above questions. However, we firmly believe that at a certain size, the SRE model is the only effective way to scale an engineering organization beyond the number of engineers at which a pure DevOps model breaks down. Indeed, we would argue that successfully bootstrapping an SRE program well in advance of the human scaling limits outlined here is a critical responsibility of the engineering leadership of a modern hyper-growth Internet company.

Given the plethora of models already implemented in the industry, there is no right answer to the question of which SRE model to adopt, and all models have their holes and resultant issues. We will outline what we think the sweet spot is, based on our observations over the last 10 years:

Balancing Automation and Team Dynamics for Sustainable Growth

Very few companies reach the hyper-growth stage at which point this post is directly applicable. For many companies, a pure DevOps model built on modern cloud-native primitives may be entirely sufficient given the number of engineers involved, the system reliability required, and the product iteration rate the business requires.

For the relatively few companies for which this post does apply, the key takeaways are:

Modern hyper-growth Internet companies have (in my opinion) an egregiously large amount of burnout, primarily due to the grueling product demands coupled with a lack of investment in operational infrastructure. I believe it is possible for engineering leadership to buck the trend by getting ahead of operations before it becomes a major impediment to organizational stability.

While newer companies might be under the illusion that advancements in cloud-native automation are making the traditional operations engineer obsolete, this could not be further from the truth. For the foreseeable future, even while making use of the latest available technology, engineers who specialize in operations and reliability should be recognized and valued for offering critical skillsets that cannot be obtained any other way, and their vital roles should be formally structured into the engineering organization during the early growth phase.
