
Last Updated On 11/03/2026

Developers often face the same problem again and again: code works locally, but something breaks during testing or deployment. Releases get delayed, bugs appear late, and teams spend hours fixing issues that automation could easily handle. That’s exactly where CI/CD Tools change the game.

Modern development teams rely on CI/CD Tools to automate building, testing, and deploying applications. Instead of manually running scripts or deployments, these tools create automated pipelines that move code from development to production smoothly and safely.

The goal is simple: faster releases, fewer mistakes, and better collaboration between developers and operations teams.

In our DevOps automation workshops, many teams report reducing manual deployment effort by nearly 50% after introducing CI/CD pipelines for builds, testing, and environment provisioning.

Before diving deeper into the tools themselves, let’s quickly summarize the key ideas of this guide.

TL;DR Summary

| Topic | Key Insight |
|---|---|
| What are CI/CD Tools | Platforms that automate building, testing, and deploying software |
| Why teams use them | Reduce manual work, improve code quality, and speed up releases |
| Key capabilities | Pipeline automation, integration with Git, container support, monitoring |
| Best CI/CD Tools | Jenkins, GitHub Actions, GitLab CI/CD, CircleCI, Azure DevOps |
| Advanced deployment tools | Argo CD, Octopus Deploy, Spinnaker |
| Key trend in 2026 | 89% of DevOps teams now rely on CI/CD pipelines |

With that quick overview in mind, it becomes easier to understand what CI/CD Tools actually do inside modern development workflows.

So first, let’s answer the question clearly: What are CI/CD Tools?

In simple terms, CI/CD Tools automate the process of building, testing, and deploying software applications. CI stands for Continuous Integration, while CD stands for Continuous Delivery or Continuous Deployment.

Together, they create automated pipelines that move code from development to production with little or no manual intervention.

Continuous Integration (CI)

Continuous Integration is the practice of merging code changes into the shared main branch frequently, with every change built and tested automatically.

Every time developers push code:

The application is built automatically

Automated tests are executed

Code quality checks are performed

This helps teams detect issues early instead of discovering them during final releases.
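The CI loop described above can be sketched in a few lines of Python: run the build, the tests, and a quality check in order, and stop at the first failure so feedback arrives early. This is a minimal illustration, not how any particular CI server is implemented; the stage names and the placeholder commands (tiny Python one-liners, one of which simulates a failing test) are hypothetical.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class StageResult:
    name: str
    ok: bool


def run_ci(stages: list[tuple[str, list[str]]]) -> list[StageResult]:
    """Run each (name, command) stage in order and stop at the first failure."""
    results: list[StageResult] = []
    for name, command in stages:
        completed = subprocess.run(command, capture_output=True, text=True)
        ok = completed.returncode == 0  # CI tools treat a non-zero exit code as failure
        results.append(StageResult(name, ok))
        if not ok:
            break  # fail fast: later stages are skipped
    return results


# Hypothetical stages; each command is a placeholder that just exits with a status code.
py = sys.executable
demo_stages = [
    ("build", [py, "-c", "print('compiling')"]),
    ("test", [py, "-c", "import sys; sys.exit(1)"]),  # simulated failing test run
    ("quality-check", [py, "-c", "print('linting')"]),
]
for result in run_ci(demo_stages):
    print(result.name, "passed" if result.ok else "FAILED")
```

Because the test stage fails, the quality check never runs; a developer learns about the broken commit minutes after pushing instead of at release time.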

Continuous Delivery / Deployment (CD)

Continuous Delivery keeps the application in a releasable state: every change that passes the pipeline is ready to deploy, but the production release is triggered manually. Continuous Deployment goes a step further and deploys every passing change to production automatically. With proper CI/CD Tools, teams can release updates frequently and safely.

Typical CI/CD pipeline stages include:

Code commit to version control

Automated build process

Automated testing

Security scanning

Deployment to staging or production

This automation is why organizations increasingly rely on Tools for CI and CD in their DevOps pipelines.

Without these tools, software delivery would be slow, error-prone, and difficult to scale.
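The difference between Continuous Delivery and Continuous Deployment comes down to one gate before production. The sketch below runs the typical stages listed above in order; with continuous_deployment=True a green pipeline ships straight to production, otherwise it stops at staging until an approval callback says yes. The stage names and the approve hook are hypothetical, chosen only to illustrate the concept.

```python
from typing import Callable

# Hypothetical stage names mirroring the typical pipeline stages listed above.
STAGES = ["build", "test", "security-scan", "deploy-staging", "deploy-production"]


def run_pipeline(
    run_stage: Callable[[str], bool],
    continuous_deployment: bool,
    approve: Callable[[], bool] = lambda: False,
) -> list[str]:
    """Run stages in order and return the ones that completed.

    continuous_deployment=True: a green pipeline ships straight to production.
    continuous_deployment=False (continuous delivery): production waits for approve().
    """
    completed: list[str] = []
    for stage in STAGES:
        if stage == "deploy-production" and not continuous_deployment and not approve():
            break  # release candidate is ready; a person decides when to ship it
        if not run_stage(stage):
            break  # any failing stage stops the pipeline
        completed.append(stage)
    return completed


def all_green(stage: str) -> bool:
    print(f"running {stage}")
    return True


print(run_pipeline(all_green, continuous_deployment=True))   # reaches deploy-production
print(run_pipeline(all_green, continuous_deployment=False))  # stops after deploy-staging
```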

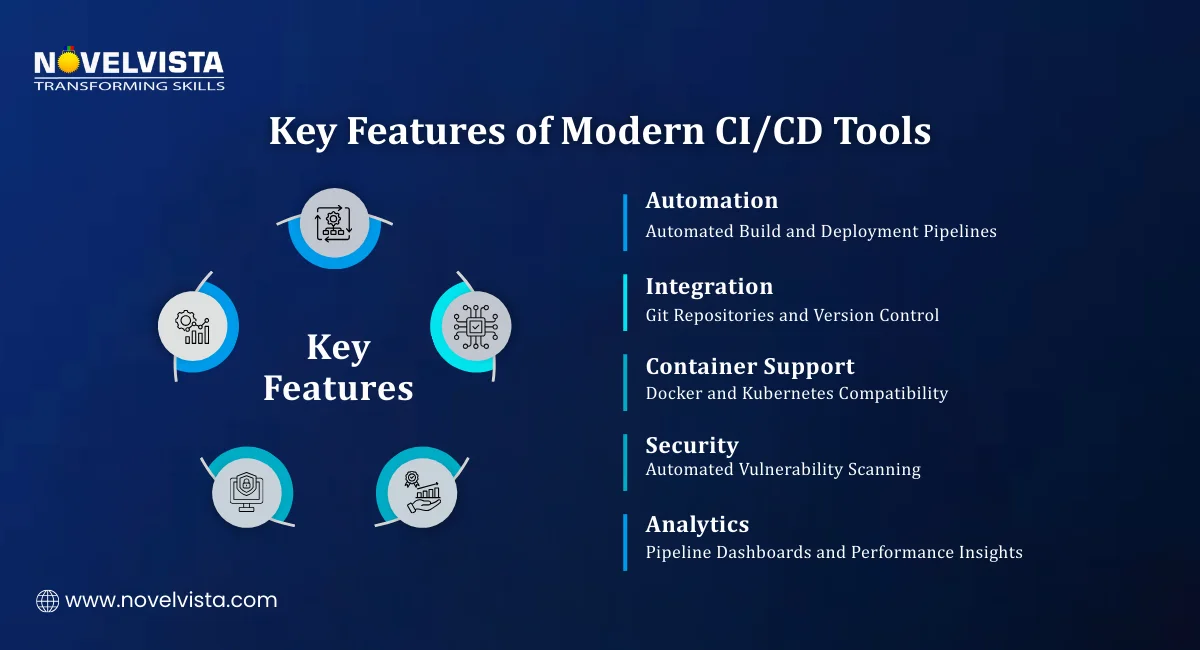

Not all CI/CD Tools are built the same way. Some are designed for simple pipelines, while others support large enterprise environments.

When organizations evaluate the Best CI/CD Tools, they typically look for several important features.

1. Version Control Integration

Most pipelines start with code stored in Git repositories. Good CI/CD Tools should integrate smoothly with Git hosting platforms such as:

GitHub

GitLab

Bitbucket

This allows pipelines to trigger automatically whenever code changes are pushed.
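The automatic trigger is usually a webhook: the Git host sends an HTTP POST describing the push, and the CI system decides whether to start a pipeline. This minimal sketch assumes a GitHub-style push payload (a "ref" such as refs/heads/main and a "deleted" flag) and GitHub's HMAC-SHA256 signature format ("sha256=" followed by a hex digest, sent in the X-Hub-Signature-256 header). The secret, the branch filter, and the function names are hypothetical.

```python
import hashlib
import hmac
import json


def verify_signature(secret: bytes, body: bytes, signature_header: str) -> bool:
    """Check a 'sha256=<hexdigest>' HMAC signature computed over the raw request body."""
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature_header)  # constant-time comparison


def should_trigger(body: bytes, watched_branches: set[str]) -> bool:
    """Decide whether a push event should start a pipeline."""
    payload = json.loads(body)
    if payload.get("deleted"):
        return False  # a branch deletion has nothing to build
    ref = payload.get("ref", "")
    prefix = "refs/heads/"
    if not ref.startswith(prefix):
        return False  # e.g. tag pushes (refs/tags/...) are ignored in this sketch
    return ref[len(prefix):] in watched_branches


SECRET = b"hypothetical-webhook-secret"
body = json.dumps({"ref": "refs/heads/main", "after": "abc123", "deleted": False}).encode()
sig = "sha256=" + hmac.new(SECRET, body, hashlib.sha256).hexdigest()

if verify_signature(SECRET, body, sig) and should_trigger(body, {"main"}):
    print("start pipeline")
```

Verifying the signature before parsing the payload keeps arbitrary callers from starting builds on your runners.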

2. Container and Kubernetes Support

Modern applications are often containerized. That’s why Tools for CI and CD increasingly need to support:

Docker containers

Kubernetes orchestration

Cloud-native architectures depend heavily on these technologies. Industry data shows that 92% of teams now require Kubernetes compatibility in CI/CD pipelines.

3. Automated Testing

Testing is one of the most important stages in CI/CD pipelines.

Strong CI/CD Tools should support:

Unit testing

Integration testing

Security testing

Performance testing

Automation ensures code quality without slowing down development.
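In a pipeline, the unit-testing stage is simply a test suite whose result decides whether the pipeline continues. The sketch below uses Python's built-in unittest module; the apply_discount function is hypothetical application code invented for the example.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical application code under test."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_basic_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 150)


def run_test_stage() -> bool:
    """Run the suite and report whether the pipeline may proceed."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    result = unittest.TextTestRunner(verbosity=1).run(suite)
    return result.wasSuccessful()


ok = run_test_stage()
print("test stage", "passed" if ok else "failed")
```

In a real pipeline, the test command would exit with a non-zero status on failure, which is the signal most CI tools use to mark the stage red and block the later stages.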

4. Security and Compliance Checks

Security testing is increasingly integrated into pipelines. Many of the Best CI/CD Tools include features such as:

Dependency vulnerability scans

Container security analysis

Code security validation

These capabilities help teams catch vulnerabilities early.
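A dependency vulnerability scan boils down to comparing pinned package versions against a list of advisories and failing the stage when something is affected. The sketch below is a toy version of that check: the package names, advisory IDs, and fixed-in versions are all hypothetical, and real scanners pull advisories from maintained databases and handle far more version formats.

```python
# Hypothetical advisory data: package -> (first fixed version, advisory id).
ADVISORIES = {
    "examplelib": ("2.4.1", "HYPO-2026-0001"),
    "fastparse": ("1.10.0", "HYPO-2026-0002"),
}


def parse_version(version: str) -> tuple[int, ...]:
    """Turn '1.10.0' into (1, 10, 0) so versions compare numerically, not as strings."""
    return tuple(int(part) for part in version.split("."))


def scan(pinned: dict[str, str]) -> list[str]:
    """Return one finding per pinned package older than its first fixed version."""
    findings = []
    for name, version in sorted(pinned.items()):
        advisory = ADVISORIES.get(name)
        if advisory and parse_version(version) < parse_version(advisory[0]):
            fixed_in, advisory_id = advisory
            findings.append(f"{name}=={version} is affected by {advisory_id}; upgrade to >= {fixed_in}")
    return findings


findings = scan({"examplelib": "2.3.9", "fastparse": "1.10.0"})
for finding in findings:
    print(finding)
print("security gate:", "FAIL" if findings else "PASS")
```

Comparing versions as integer tuples matters: as plain strings, "1.9.2" would sort after "1.10.0" and a vulnerable release would slip through.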

5. Monitoring and Pipeline Insights

Monitoring tools provide visibility into pipeline performance. Good dashboards allow teams to track:

Build success rates

Deployment frequency

Pipeline execution time

This visibility helps teams optimize delivery workflows. These features explain why selecting the right CI/CD Tools is important for modern DevOps environments.
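The dashboard metrics listed above are straightforward aggregates over pipeline run records. The sketch below computes build success rate, deployment frequency, and median pipeline duration; the PipelineRun record shape and the sample runs are made up for illustration, since each CI tool exposes run data through its own API.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass
class PipelineRun:
    day: date
    succeeded: bool
    duration_s: float
    deployed: bool


def pipeline_metrics(runs: list[PipelineRun]) -> dict[str, float]:
    if not runs:
        raise ValueError("no runs to summarize")
    # Count calendar days in the observed window, inclusive of both ends.
    days = (max(r.day for r in runs) - min(r.day for r in runs)).days + 1
    return {
        "build_success_rate": sum(r.succeeded for r in runs) / len(runs),
        "deployments_per_day": sum(r.deployed for r in runs) / days,
        "median_duration_s": median(r.duration_s for r in runs),
    }


# Illustrative sample data, not real measurements.
runs = [
    PipelineRun(date(2026, 3, 2), True, 310.0, True),
    PipelineRun(date(2026, 3, 2), False, 95.0, False),
    PipelineRun(date(2026, 3, 3), True, 290.0, True),
    PipelineRun(date(2026, 3, 4), True, 330.0, False),
]
print(pipeline_metrics(runs))
```

The median is used for duration because a single stuck or unusually fast run would skew an average.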

In enterprise DevOps training programs, teams evaluating CI/CD platforms often prioritize integration with existing Git repositories and container environments before introducing advanced pipeline automation.

Modern DevOps teams rarely rely on a single platform. Instead, they combine multiple CI/CD Tools to automate development, testing, and deployment workflows.

The following section explores some of the Best CI/CD Tools widely used in 2026.

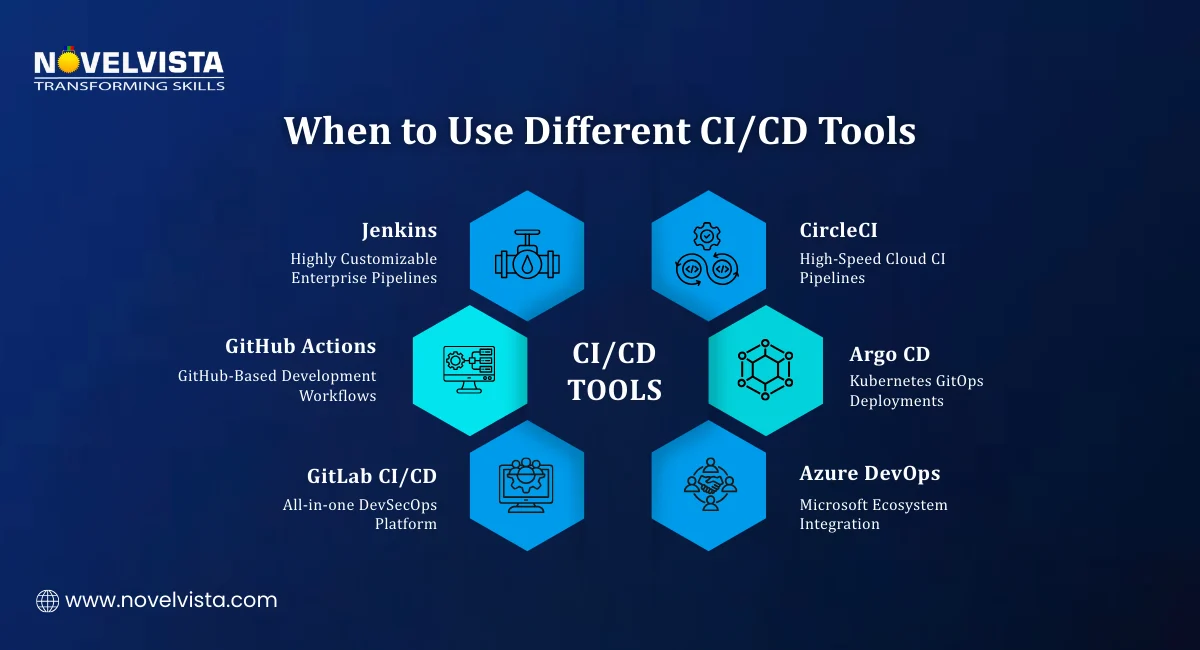

1. Jenkins – Open-Source CI/CD Automation Leader

Jenkins remains one of the most widely used CI/CD Tools in the DevOps ecosystem. It is an open-source automation server designed to create customizable pipelines. Many organizations choose Jenkins because it offers high flexibility.

Key capabilities of Jenkins

Supports 1,800+ community plugins

Works with Docker and Kubernetes

Highly customizable pipeline configuration

Large developer community support

Because of its flexibility, Jenkins often appears at the top of most Best CI/CD Tools lists.

Why teams use Jenkins

Organizations prefer Jenkins when they need:

Custom CI/CD pipelines

On-premises deployment options

Full control over pipeline configuration

However, Jenkins also requires manual setup and maintenance. Despite that complexity, it remains one of the most powerful Tools for CI and CD available.

2. GitHub Actions – CI/CD Inside GitHub

GitHub Actions is another popular platform among modern CI/CD Tools. It allows developers to automate workflows directly inside GitHub repositories. Instead of using external systems, pipelines run within the same platform where the code is stored.

Key features of GitHub Actions

Native integration with GitHub repositories

YAML-based workflow configuration

Automated build and test pipelines

Built-in CI/CD capabilities

Developers define workflows in YAML files stored in the repository’s .github/workflows directory, and each workflow runs automatically when one of its trigger events occurs.

For example:

Code push

Pull request creation

Issue updates

This simplicity is why GitHub Actions is considered one of the Best CI/CD Tools for teams already using GitHub. During GitHub automation training sessions, teams often build their first CI pipelines using simple YAML workflows, typically automating build and test stages within the first day of hands-on practice.
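Conceptually, each workflow declares which events it listens to (push, pull_request, issues, and so on), optionally filtered by branch. The Python sketch below models that matching step with a hypothetical set of two workflows; it is a simplification, not GitHub's implementation, and fnmatch-style globbing only approximates GitHub's own branch-filter pattern syntax.

```python
from fnmatch import fnmatchcase
from typing import Optional

# Hypothetical workflows, modeled loosely on the "on:" section of a workflow YAML file.
WORKFLOWS: dict[str, dict[str, dict]] = {
    "ci.yml": {"push": {"branches": ["main", "release/*"]}, "pull_request": {}},
    "triage.yml": {"issues": {}},
}


def workflows_for(event: str, branch: Optional[str] = None) -> list[str]:
    """Return the workflows that an event (and optional branch) would start."""
    matched = []
    for name, triggers in WORKFLOWS.items():
        if event not in triggers:
            continue  # this workflow does not listen for the event
        patterns = triggers[event].get("branches")
        if patterns is None:
            matched.append(name)  # no branch filter: every occurrence triggers it
        elif branch is not None and any(fnmatchcase(branch, p) for p in patterns):
            matched.append(name)
    return sorted(matched)


print(workflows_for("push", "main"))           # ['ci.yml']
print(workflows_for("push", "feature/login"))  # []
print(workflows_for("issues"))                 # ['triage.yml']
```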

3. GitLab CI/CD – All-in-One DevSecOps Platform

GitLab offers one of the most integrated CI/CD Tools available today. Instead of combining multiple platforms, GitLab provides:

Source control

CI/CD pipelines

Security testing

Deployment tools

All inside one platform.

Key advantages of GitLab CI/CD

Unified DevOps platform

Built-in security scanning

Auto DevOps pipeline configuration

Kubernetes integration

Because of this integration, GitLab appears frequently among the Best CI/CD Tools used by enterprises. Teams that want fewer tools and simpler workflows often choose GitLab for their pipelines.

4. CircleCI – Fast Cloud-Based CI/CD Platform

CircleCI is known for speed and efficiency. Many teams choose it because it can run builds very quickly using parallel execution and smart caching. Among modern CI/CD Tools, CircleCI stands out for its cloud-first design and strong performance when running container-based pipelines.

Key capabilities of CircleCI

Cloud-native architecture

Parallel job execution

Docker-based pipeline environments

Dependency caching to speed up builds

Performance insights dashboards
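Two of these ideas, dependency caching and parallel test execution, can be illustrated in a few lines. CircleCI itself builds cache keys from file checksums in its config and offers timing-based test splitting through its CLI; the Python sketch below only imitates the underlying concepts. It derives a cache key that changes only when the lockfile changes, and greedily assigns the longest test files to the least-loaded worker. The file names and timings are hypothetical.

```python
import hashlib
import heapq


def cache_key(lockfile_contents: bytes, prefix: str = "deps-v1") -> str:
    """Derive a cache key that changes only when the dependency lockfile changes."""
    digest = hashlib.sha256(lockfile_contents).hexdigest()[:16]
    return f"{prefix}-{digest}"


def split_by_timings(timings: dict[str, float], workers: int) -> list[list[str]]:
    """Assign test files to parallel workers: longest first, to the least-loaded worker."""
    heap = [(0.0, i) for i in range(workers)]  # (accumulated seconds, worker index)
    buckets: list[list[str]] = [[] for _ in range(workers)]
    for test, seconds in sorted(timings.items(), key=lambda kv: (-kv[1], kv[0])):
        load, i = heapq.heappop(heap)
        buckets[i].append(test)
        heapq.heappush(heap, (load + seconds, i))
    return buckets


lock = b"examplelib==2.4.1\nfastparse==1.10.0\n"  # hypothetical lockfile contents
print(cache_key(lock))

timings = {"test_api.py": 120.0, "test_db.py": 90.0, "test_ui.py": 60.0, "test_utils.py": 30.0}
for worker, files in enumerate(split_by_timings(timings, workers=2)):
    print(worker, files)
```

With these timings, both workers end up with 150 seconds of tests, so the test stage takes roughly half as long as running all 300 seconds serially.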

Why teams choose CircleCI

CircleCI works best for teams that deploy frequently and need quick feedback on builds. It is particularly popular in startups and fast-moving development teams because pipelines can run very quickly compared to traditional systems.

CircleCI is therefore considered one of the Best CI/CD Tools for teams focusing on high-speed automation.

5. Azure DevOps – CI/CD for the Microsoft Ecosystem

Azure DevOps is a complete development platform offered by Microsoft. It combines several development tools into one ecosystem.

Many organizations using Microsoft infrastructure adopt Azure DevOps as their primary CI/CD platform.

Core features of Azure DevOps

Azure Pipelines for CI/CD automation

Azure Boards for project tracking

Azure Artifacts for package management

Azure Repos for version control

Pipeline capabilities

Azure DevOps pipelines support:

Visual pipeline configuration

YAML-based pipelines

Integration with Azure cloud services

Multi-platform builds (Linux, Windows, macOS)

Because of these features, Azure DevOps often appears among the Best CI/CD Tools for enterprise environments.

Organizations already using Microsoft technologies find it easy to integrate pipelines directly into their cloud infrastructure.
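Multi-platform builds are usually expressed as a build matrix: one job definition fanned out across operating systems and tool versions. The generic Python sketch below shows that expansion step as a cartesian product with exclusion rules. It is not Azure Pipelines syntax (there, matrix entries are typically listed by name in YAML), and the axis names and values are hypothetical.

```python
from itertools import product
from typing import Sequence


def expand_matrix(
    axes: dict[str, list[str]],
    exclude: Sequence[dict[str, str]] = (),
) -> list[dict[str, str]]:
    """Expand axes into one job per combination, skipping excluded combinations."""
    names = list(axes)
    jobs: list[dict[str, str]] = []
    for combo in product(*(axes[n] for n in names)):
        job = dict(zip(names, combo))
        # Skip any combination that matches every key of an exclusion rule.
        if any(all(job.get(k) == v for k, v in rule.items()) for rule in exclude):
            continue
        jobs.append(job)
    return jobs


jobs = expand_matrix(
    {"os": ["linux", "windows", "macos"], "runtime": ["18", "20"]},
    exclude=[{"os": "macos", "runtime": "18"}],
)
for job in jobs:
    print(job)
```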

6. Bamboo – CI/CD for Atlassian Ecosystem

Bamboo is developed by Atlassian, the same company behind Jira and Bitbucket. It is commonly used by organizations that already rely on Atlassian tools for development management.

Among enterprise CI/CD Tools, Bamboo is known for its smooth integration with Atlassian platforms.

Key features of Bamboo

Native integration with Jira and Bitbucket

Build automation and deployment pipelines

Parallel testing capabilities

Strong release management features

Why teams use Bamboo

Organizations using Atlassian ecosystems prefer Bamboo because it simplifies integration between development tracking and pipeline automation.

Although Bamboo is not as widely used as Jenkins, it still appears in many Best CI/CD Tools comparisons for enterprise environments.

7. TeamCity – Enterprise CI/CD from JetBrains

TeamCity is a CI/CD platform developed by JetBrains, the company behind IntelliJ IDEA and other developer tools.

It is commonly used in large software development environments where teams require advanced pipeline management.

Key features of TeamCity

Advanced pipeline configuration

Kotlin-based pipeline scripting

Detailed build analytics

Scalable build infrastructure

TeamCity also supports cloud integrations and container-based builds. Because of its advanced capabilities, it is often considered one of the Best CI/CD Tools for enterprise-scale development pipelines.

Beyond the primary pipeline platforms, several specialized Tools for CI and CD focus specifically on deployment and release automation.

These tools are often used alongside traditional CI platforms.

8. Argo CD – GitOps Deployment for Kubernetes

Argo CD is an open-source deployment tool designed specifically for Kubernetes environments. Instead of relying on manual deployment steps, Argo CD follows the GitOps approach: the desired state of each application is declared in a Git repository, and Argo CD continuously compares it with what is running in the cluster and syncs any differences.

Key capabilities of Argo CD

Git-based deployment automation

Kubernetes-native architecture

Real-time deployment monitoring

Multi-cluster support

Argo CD has become one of the most widely adopted CI/CD Tools for Kubernetes-based infrastructure. Organizations using containerized architectures often rely on Argo CD to manage large-scale deployments.
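At its core, GitOps is a reconciliation loop: compare the desired state committed to Git with the live cluster state, report the application as Synced or OutOfSync, and apply the difference. Argo CD does this against real Kubernetes manifests; the sketch below models manifests as plain dictionaries keyed by resource name, and the diff and sync functions are hypothetical illustrations of the idea, not Argo CD's code.

```python
from dataclasses import dataclass

# A manifest set maps a resource name (e.g. "deployment/web") to its spec.
Manifests = dict[str, dict]


@dataclass
class SyncPlan:
    to_create: list[str]
    to_update: list[str]
    to_prune: list[str]  # live resources no longer declared in Git

    @property
    def status(self) -> str:
        drift = self.to_create or self.to_update or self.to_prune
        return "OutOfSync" if drift else "Synced"


def diff(desired: Manifests, live: Manifests) -> SyncPlan:
    return SyncPlan(
        to_create=sorted(desired.keys() - live.keys()),
        to_update=sorted(k for k in desired.keys() & live.keys() if desired[k] != live[k]),
        to_prune=sorted(live.keys() - desired.keys()),
    )


def sync(desired: Manifests, live: Manifests, prune: bool = False) -> Manifests:
    """Return the new live state after applying the plan (live itself is not mutated)."""
    plan = diff(desired, live)
    new_live = dict(live)
    for name in plan.to_create + plan.to_update:
        new_live[name] = desired[name]
    if prune:  # deleting resources is opt-in in this sketch
        for name in plan.to_prune:
            del new_live[name]
    return new_live


desired = {"deployment/web": {"image": "web:1.4", "replicas": 3}, "service/web": {"port": 80}}
live = {"deployment/web": {"image": "web:1.3", "replicas": 3}, "configmap/old": {}}

plan = diff(desired, live)
print(plan.status, plan)
print(diff(desired, sync(desired, live, prune=True)).status)
```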

9. Octopus Deploy – Deployment Automation Platform

Octopus Deploy focuses mainly on the release stage of pipelines. While traditional CI/CD Tools handle build and testing, Octopus automates application releases across multiple environments.

Key features of Octopus Deploy

Automated deployment pipelines

Release management capabilities

Multi-cloud and hybrid deployment support

Environment-specific configuration management

This makes Octopus one of the most useful Tools for CI and CD when managing complex enterprise deployments.
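Environment-specific configuration means the same release picks up different settings in each environment. Octopus Deploy supports this by scoping variables to environments; the Python sketch below imitates the idea with a simple rule in which an environment-scoped value overrides an unscoped default. The variable names and values are hypothetical, and the precedence rule is a simplification, not Octopus's actual scoping logic.

```python
# Hypothetical variable set: (name, value, environment scope, or None for all environments).
VARIABLES = [
    ("DatabaseUrl", "postgres://dev-db/app", None),
    ("DatabaseUrl", "postgres://prod-db/app", "Production"),
    ("LogLevel", "debug", None),
    ("LogLevel", "warning", "Production"),
    ("FeatureFlagX", "on", "Staging"),
]


def resolve(environment: str) -> dict[str, str]:
    """Resolve the variables a deployment to the given environment would see."""
    resolved: dict[str, str] = {}
    # Unscoped defaults first...
    for name, value, scope in VARIABLES:
        if scope is None:
            resolved[name] = value
    # ...then values scoped to this environment override them.
    for name, value, scope in VARIABLES:
        if scope == environment:
            resolved[name] = value
    return resolved


print(resolve("Staging"))
print(resolve("Production"))
```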

10. Spinnaker – Multi-Cloud Continuous Delivery Platform

Spinnaker is an open-source deployment platform designed for large-scale cloud environments. It was originally developed by Netflix to manage high-volume deployment pipelines.

Key capabilities of Spinnaker

Multi-cloud deployment support

Advanced release strategies

Continuous delivery pipelines

Canary deployment capabilities

Spinnaker integrates with cloud platforms such as:

AWS

Google Cloud

Microsoft Azure

It is commonly used together with Jenkins or other CI/CD Tools to manage production deployments.
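A canary deployment sends a small share of traffic to the new version and compares its health with the current baseline before rolling out further. Spinnaker's automated canary analysis relies on statistical comparisons across many metrics; the sketch below swaps in a much simpler threshold check on error rate and p95 latency to show the shape of the decision. The thresholds, the minimum request count, and the sample numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    requests: int
    errors: int
    p95_latency_ms: float

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_verdict(
    baseline: Metrics,
    canary: Metrics,
    max_error_increase: float = 0.005,  # allow +0.5 percentage points of errors
    max_latency_ratio: float = 1.10,    # allow 10% slower p95 latency
    min_requests: int = 500,            # too little traffic means no decision yet
) -> str:
    if canary.requests < min_requests:
        return "INCONCLUSIVE"
    if canary.error_rate - baseline.error_rate > max_error_increase:
        return "FAIL"
    if canary.p95_latency_ms > baseline.p95_latency_ms * max_latency_ratio:
        return "FAIL"
    return "PASS"


baseline = Metrics(requests=10_000, errors=20, p95_latency_ms=180.0)
print(canary_verdict(baseline, Metrics(1_000, 3, 185.0)))   # healthy canary
print(canary_verdict(baseline, Metrics(1_000, 15, 185.0)))  # error rate regressed
```

A FAIL verdict would trigger an automatic rollback of the canary, while PASS lets the pipeline shift more traffic to the new version.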

Compare popular CI/CD platforms like Jenkins, GitHub Actions, GitLab CI/CD, CircleCI, Azure DevOps, and TeamCity to choose the right automation tool for your DevOps pipeline.

With so many options available, selecting the right CI/CD Tools depends on your infrastructure, workflow, and team experience.

Here are some common recommendations used by development teams.

GitHub-based projects

If your code is hosted in GitHub repositories, GitHub Actions is often the easiest solution. It integrates directly with source control and simplifies pipeline automation.

Highly customizable pipelines

Organizations that require full control over pipelines often choose Jenkins. Its plugin ecosystem makes it one of the most flexible CI/CD Tools available.

All-in-one DevOps platform

Teams wanting a unified development platform often prefer GitLab CI/CD. It combines code management, pipelines, and security testing in one system.

Kubernetes environments

For container-based infrastructure, tools like Argo CD provide efficient deployment automation. Kubernetes compatibility has become a major factor when selecting modern CI/CD Tools.

Software delivery has changed dramatically over the past decade. Manual deployments and slow release cycles can no longer support modern development needs. This is why CI/CD Tools have become a core part of DevOps workflows.

They automate the entire pipeline, from building applications to testing and deploying them into production. The adoption figures cited earlier in this guide reflect this shift.

Organizations that adopt the right CI/CD Tools gain several advantages:

Faster software releases

Higher code quality

Reduced human error

Scalable deployment workflows

Choosing the Best CI/CD Tools for your environment allows development teams to build reliable, automated pipelines that support modern cloud-native applications.

From training observations across development teams, organizations typically pilot CI/CD platforms on one application pipeline before scaling automation across multiple services or production environments.

Next Step: Learn CI/CD with Jenkins

If you want hands-on experience building automated pipelines, NovelVista’s CI/CD with Jenkins Certification Training is a great next step. The program covers Jenkins pipeline creation, CI/CD automation workflows, integration with Docker and Kubernetes, and real-world deployment scenarios. Through practical labs and expert guidance, the course helps professionals gain the skills needed to implement modern CI/CD pipelines in DevOps environments.
