
Last Updated On 12/09/2025

Have you ever wondered how models like ChatGPT or Gemini understand and generate text? The answer lies in tokens. What is a token in generative AI? Simply put, tokens are the smallest units of text that AI models process. These building blocks are the foundation of language comprehension and generation in AI systems. In 2025, as generative AI continues to evolve, understanding how tokens work is crucial for anyone involved in AI development or usage.

Tokens are like the puzzle pieces that form a complete picture of language. The efficiency, cost, and accuracy of AI models depend on how well these tokens are managed. This blog will break down what tokens are, how they work, and why they matter to both AI professionals and everyday users.

Tokens are the basic building blocks that AI models use to process and understand text. They can represent an entire word, a part of a word (subword), or even a single character. For instance, "hello" might be a single token, while a compound word like "football" could be split into two tokens: "foot" and "ball." The exact split depends on the tokenizer a given model uses.

A simple analogy is that tokens are like puzzle pieces that form a larger picture. Just as puzzle pieces fit together to create an image, tokens combine to give AI models context and meaning to generate relevant outputs.


The vocabulary is the predefined set of all possible tokens a model has been trained on. Each token in the vocabulary corresponds to a unique representation that the AI model can understand and process.
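To make the idea of a vocabulary concrete, here is a minimal sketch. The tokens and ID numbers are invented for illustration; real vocabularies typically contain tens of thousands of entries or more, and the `[UNK]` fallback is just one common way to handle unknown tokens.

```python
# Toy vocabulary: every token the "model" knows maps to a unique integer ID.
# All tokens and IDs here are made up for illustration.
vocab = {"[UNK]": 0, "hello": 1, "foot": 2, "ball": 3, "world": 4}


def to_ids(tokens):
    """Look up each token's ID, falling back to [UNK] for unknown tokens."""
    return [vocab.get(tok, vocab["[UNK]"]) for tok in tokens]


ids = to_ids(["hello", "foot", "ball", "zebra"])
print(ids)  # [1, 2, 3, 0]  ("zebra" is not in the toy vocabulary)
```

The model never sees the text itself, only these integer IDs.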

Tokens don’t exist in isolation. AI models understand the relationship between tokens and their context within the text. This helps the AI interpret meaning, tone, and intent, ensuring that it generates responses that make sense based on the surrounding words.

The number of tokens directly impacts the performance and cost of using AI models. More tokens mean more processing time and higher costs. In API services, users are typically charged based on the number of tokens processed, usually counting both the input (the prompt) and the output (the response).
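As a rough illustration of token-based billing, the sketch below estimates the cost of one request. The per-1,000-token prices are hypothetical placeholders, not any provider's real rates, and `estimate_cost` is a helper invented for this example.

```python
def estimate_cost(input_tokens, output_tokens,
                  input_price_per_1k, output_price_per_1k):
    """Estimate the cost of one request from its token counts.

    Prices are expressed per 1,000 tokens, a common billing unit.
    """
    input_cost = input_tokens / 1000 * input_price_per_1k
    output_cost = output_tokens / 1000 * output_price_per_1k
    return input_cost + output_cost


# Hypothetical prices: 0.50 per 1k input tokens, 1.50 per 1k output tokens.
cost = estimate_cost(input_tokens=1500, output_tokens=500,
                     input_price_per_1k=0.50, output_price_per_1k=1.50)
print(cost)  # 1.5
```

Check your provider's current pricing page before relying on any estimate like this.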

Different AI models use different tokenization methods. For example, two models may split the same sentence into different tokens, and even into a different number of tokens, which affects both cost and output. Understanding the tokenization process is crucial for predicting model behavior and optimizing prompt engineering.

In generative AI, including applications such as generative AI for retail, tokens are the building blocks that models use to understand and generate content. They come in several types, depending on whether the content is text, images, or other data.

Word tokens represent individual words. For example, “AI is changing retail” becomes four word tokens: “AI”, “is”, “changing”, and “retail”. Depending on the tokenizer, punctuation and spaces may become additional tokens.
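A minimal sketch of word-level splitting, using a regular expression that treats each word and each punctuation mark as its own token. The `word_tokenize` helper is written for this example and is not how any particular model tokenizes text.

```python
import re


def word_tokenize(text):
    """Split text into word tokens and single punctuation tokens.

    \\w+ matches a run of letters, digits, or underscores;
    [^\\w\\s] matches one character that is neither a word character
    nor whitespace (that is, a punctuation mark or symbol).
    """
    return re.findall(r"\w+|[^\w\s]", text)


tokens = word_tokenize("AI is changing retail.")
print(tokens)  # ['AI', 'is', 'changing', 'retail', '.']
```

Here the four words each become a token, and the final period becomes a fifth.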

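Subword tokens are the smaller units that long or rare words get split into. For instance, “personalization” may become “personal” + “##ization”, where the “##” prefix (a WordPiece convention) marks a piece that continues the previous one. This helps models handle uncommon words efficiently.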
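The sketch below shows one way such a split can be produced: a greedy longest-match search in the style of WordPiece, where pieces that continue a word carry a "##" prefix. The vocabulary is a toy set invented for this example, and production tokenizers are considerably more involved.

```python
def wordpiece_tokenize(word, vocab):
    """Greedy longest-match subword split (WordPiece-style sketch).

    At each position, try the longest remaining substring first and
    shrink it until a piece found in the vocabulary is matched.
    Pieces that do not start the word are looked up with a '##' prefix.
    """
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        match = None
        while end > start:
            piece = word[start:end]
            if start > 0:
                piece = "##" + piece
            if piece in vocab:
                match = piece
                break
            end -= 1
        if match is None:
            # No piece fits at this position: treat the whole word as unknown.
            return ["[UNK]"]
        pieces.append(match)
        start = end
    return pieces


# Toy vocabulary, made up for illustration.
toy_vocab = {"personal", "##ization", "retail", "##s"}

print(wordpiece_tokenize("personalization", toy_vocab))  # ['personal', '##ization']
print(wordpiece_tokenize("retails", toy_vocab))          # ['retail', '##s']
```

A word with no matching pieces in the toy vocabulary falls back to a single `[UNK]` token.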

With character tokens, each letter, number, or symbol is a token. This can be useful for coding tasks or languages with complex scripts, at the cost of much longer token sequences.
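A quick sketch of character-level tokenization, which also shows how much longer the token sequence gets compared with splitting on whitespace. The sample sentence is just an example.

```python
text = "AI is changing retail"

# Character-level: every character, including spaces, is its own token.
char_tokens = list(text)

# Whitespace splitting, for comparison.
word_tokens = text.split()

print(char_tokens[:5])   # ['A', 'I', ' ', 'i', 's']
print(len(char_tokens))  # 21
print(len(word_tokens))  # 4
```

The same sentence takes 21 character tokens but only 4 word tokens.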

Special tokens like [START], [END], or [PAD] don’t represent real content but guide the model during generation, marking beginnings, endings, or padding sequences.
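Here is a small sketch of how special tokens might wrap and pad a sequence to a fixed length. The token names follow the ones mentioned above; real models each define their own special tokens, and `add_special_tokens` is a helper written for this example.

```python
def add_special_tokens(tokens, max_len):
    """Wrap tokens with [START]/[END] and pad with [PAD] up to max_len."""
    seq = ["[START]"] + list(tokens) + ["[END]"]
    if len(seq) > max_len:
        raise ValueError("sequence is longer than max_len")
    return seq + ["[PAD]"] * (max_len - len(seq))


padded = add_special_tokens(["I", "love", "AI"], max_len=8)
print(padded)
# ['[START]', 'I', 'love', 'AI', '[END]', '[PAD]', '[PAD]', '[PAD]']
```

Padding like this lets sequences of different lengths be processed together in one batch.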

For image or video generation, models can break visuals into tokens, too. Think of an image as a grid of patches, where each patch becomes a “visual token.” Some image models, such as the original DALL·E, generate images from discrete tokens like these, while others, such as Stable Diffusion, work on a compressed latent representation of the image rather than discrete tokens. Visual tokens are key when combining text and images for personalized ads, virtual try-ons, or product recommendations in retail.
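To get a feel for how many visual tokens an image produces, the sketch below counts the patches in a grid. The 224×224 image size and 16×16 patch size are common example values, used here as assumptions rather than the settings of any specific model.

```python
def count_patches(height, width, patch_size):
    """Number of non-overlapping square patches that tile an image."""
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    return (height // patch_size) * (width // patch_size)


n_tokens = count_patches(height=224, width=224, patch_size=16)
print(n_tokens)  # 196, i.e. a 14 x 14 grid of patches
```

Halving the patch size quadruples the number of visual tokens, which is one reason image inputs can be expensive.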

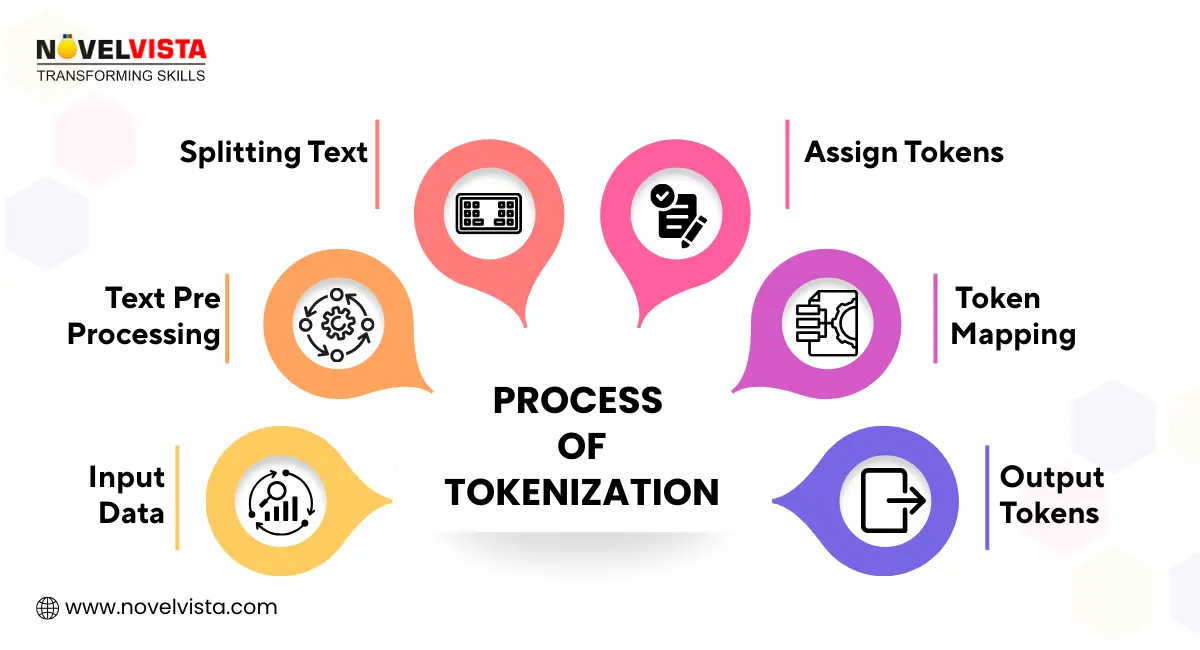

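Here’s how tokenization in AI works: the input text is split into tokens, and each token is matched to an entry in the model’s vocabulary.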

For example, the sentence "I love AI" would be split into tokens like ["I", "love", "AI"], each representing a specific unit the model can process.

Tokens play a pivotal role in AI model training. During the training process, the model learns the statistical relationships between tokens, allowing it to predict the next token based on patterns observed in large datasets. This is how AI models, like ChatGPT, learn to generate human-like responses.
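The idea of learning which token tends to follow which can be sketched with simple bigram counts. This is a deliberately tiny stand-in: real language models use neural networks trained on vast datasets, not a lookup table, and the corpus below is made up.

```python
from collections import Counter, defaultdict

# Tiny made-up "training corpus", already split into tokens.
corpus = [
    ["I", "love", "AI"],
    ["I", "love", "coffee"],
    ["I", "love", "AI"],
    ["you", "love", "AI"],
]

# Count how often each token is followed by each other token.
next_counts = defaultdict(Counter)
for sentence in corpus:
    for current, following in zip(sentence, sentence[1:]):
        next_counts[current][following] += 1


def predict_next(token):
    """Return the most frequent follower of `token`, or None if unseen."""
    if token not in next_counts:
        return None
    return next_counts[token].most_common(1)[0][0]


print(predict_next("love"))  # AI  (seen 3 times, versus 'coffee' once)
print(predict_next("I"))     # love
```

Generating text then amounts to repeating this prediction step, one token at a time.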

In training, models also work with context windows: the maximum number of tokens they can process at once. For instance, a model might be trained to look at 4,000 tokens at a time when generating a response. Within that window, the AI can keep track of long-form conversations and maintain context.
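One simple way to respect a context window is to keep only the most recent tokens once a conversation grows too long. The sketch below shows that strategy; it is one approach among several (summarizing older turns is another), and the window of 4 tokens is chosen only to keep the example readable.

```python
def fit_to_context(tokens, window):
    """Keep only the last `window` tokens so the input fits the context window."""
    if window <= 0:
        return []
    return tokens[-window:]


history = ["tok0", "tok1", "tok2", "tok3", "tok4", "tok5"]
print(fit_to_context(history, window=4))  # ['tok2', 'tok3', 'tok4', 'tok5']
```

Anything dropped from the front is no longer visible to the model, which is why very long chats can seem to "forget" early details.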

Training at the token level is crucial because it allows models to generate text that feels natural and coherent, much like how a human might respond in a conversation.

ChatGPT uses tokens to balance context length, manage processing costs, and keep the conversation flowing smoothly. The model is able to keep track of prior tokens, ensuring that responses remain relevant and contextually accurate.

Gemini uses advanced tokenization methods like SentencePiece to process multiple languages efficiently. This allows the model to generate text in various languages without losing context or accuracy, thanks to its flexible token handling.

Perplexity AI uses tokenization to produce fast and context-rich responses in search-style queries. It optimizes token usage to maximize speed and precision, making it ideal for applications that require quick, relevant answers.

In API services, the number of tokens you use directly affects the cost. Writing concise prompts helps save tokens, time, and money, making it crucial for businesses to optimize token usage.

Efficiently written prompts use fewer tokens, which leads to faster responses and lower API usage costs. By focusing on clear and concise inputs, AI users can maximize the effectiveness of the model.

AI models have a limited number of tokens they can process at once, so staying within the token limits ensures that the AI can handle the full input without cutting off important details. For example, lengthy input without proper context management could lead to incomplete or irrelevant responses.
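When an input is longer than the limit, one common workaround is to split it into chunks that each fit and process them separately. A minimal sketch, assuming the text has already been tokenized; the limit of 3 is only for demonstration.

```python
def chunk_tokens(tokens, limit):
    """Split a token list into consecutive chunks of at most `limit` tokens."""
    if limit <= 0:
        raise ValueError("limit must be positive")
    return [tokens[i:i + limit] for i in range(0, len(tokens), limit)]


chunks = chunk_tokens(list(range(7)), limit=3)
print(chunks)  # [[0, 1, 2], [3, 4, 5], [6]]
```

In practice, chunks are often split at sentence or paragraph boundaries so that each one remains meaningful on its own.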

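Token counts also vary by language. In languages like Chinese, Japanese, or Korean, a single character can represent a whole word or concept, so a sentence often needs fewer characters than its English equivalent. Fewer characters does not always mean fewer tokens, though: tokenizers whose vocabularies were built mostly from English text often split these characters into several tokens, so the same meaning can cost as many tokens as in English, or more. The exact count depends on the tokenizer each model uses.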
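How many tokens a language needs depends heavily on the tokenizer. Many modern tokenizers start from UTF-8 bytes, so comparing character counts with byte counts gives a rough feel for the starting point. This is only a sketch: real tokenizers merge frequent byte sequences, so actual token counts will differ from the byte counts shown here.

```python
def utf8_stats(text):
    """Return (number of characters, number of UTF-8 bytes) for a string."""
    return len(text), len(text.encode("utf-8"))


samples = {
    "English": "hello",
    "Chinese": "你好",
    "Japanese": "こんにちは",
    "Korean": "안녕하세요",
}

for language, greeting in samples.items():
    chars, nbytes = utf8_stats(greeting)
    print(f"{language}: {chars} characters, {nbytes} bytes")
# English: 5 characters, 5 bytes
# Chinese: 2 characters, 6 bytes
# Japanese: 5 characters, 15 bytes
# Korean: 5 characters, 15 bytes
```

Each of these Chinese, Japanese, and Korean characters takes three bytes in UTF-8, while each English letter takes one.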

While tokenization is a powerful tool for AI, it does come with some challenges. Tokenization in AI is a balancing act; optimization of costs and efficiency must be weighed against the need for complete and accurate data processing.

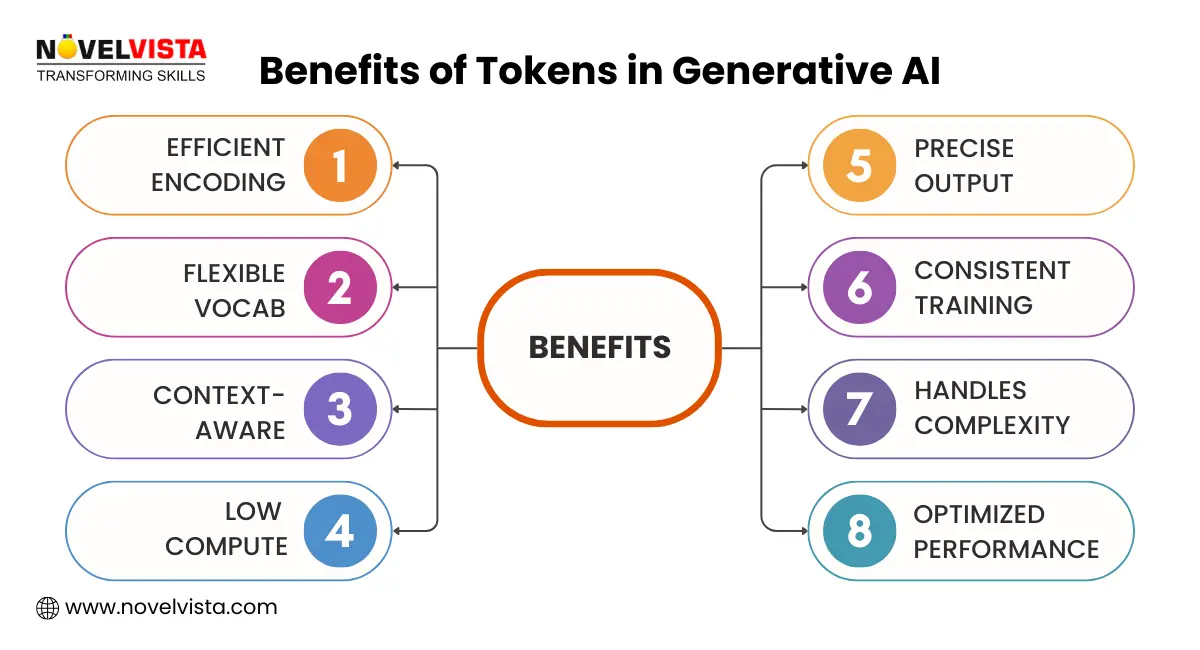

Tokens are essential for enabling generative AI models to understand, process, and generate meaningful text.

In short, tokens are the backbone of AI-driven language generation, ensuring both accuracy and efficiency.

What is a token in generative AI? Tokens are the building blocks that enable AI models to understand, process, and generate meaningful text. Without tokens, large language models like ChatGPT and Google Gemini would struggle to comprehend context or produce coherent outputs. Industry practitioners, including AI researchers, data scientists, and NLP specialists, consistently emphasize the importance of token optimization. Their collective expertise underscores that tokens are not only technical units but strategic levers in designing scalable and reliable AI systems.

Whether for training, cost management, or model accuracy, tokens are the unsung heroes of AI, quietly driving the magic behind seamless human-computer interaction.

Tokens are the foundation of the AI revolution, and understanding them is key to staying ahead.

