
Last Updated On 23/01/2026

Artificial Intelligence is no longer experimental; it is embedded in how businesses make decisions, automate processes, and interact with customers. According to McKinsey, over 55% of organizations now use AI in at least one business function, yet nearly 60% of AI projects fail due to unmanaged risks, including bias, lack of transparency, and compliance gaps.

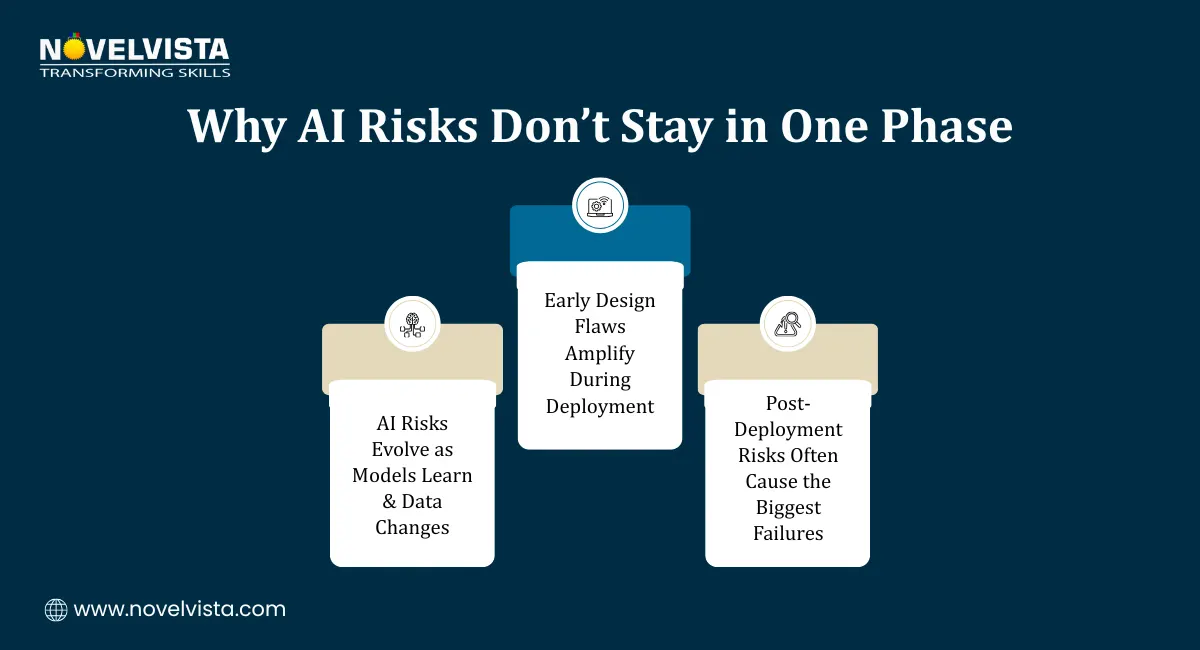

As AI systems grow more complex, organizations are facing a critical question: How do you manage AI risks responsibly across the entire system lifecycle?

This is where lifecycle risk management in ISO 42001 becomes highly relevant. Understanding it is no longer optional; it is essential. In this guide, we’ll break down what lifecycle risk management means, how the AI risk management lifecycle works, and how organizations can apply responsible AI risk controls effectively.

ISO 42001 is the world’s first international standard designed specifically for AI Management Systems (AIMS), offering organizations a structured and practical framework to govern, manage, and continuously improve how artificial intelligence is designed, deployed, and monitored. Unlike traditional ISO standards that primarily focus on quality management or information security, ISO 42001 directly addresses the unique challenges of AI governance, including ethical AI use, AI risk management, accountability and transparency, and the need for trustworthy AI operations.

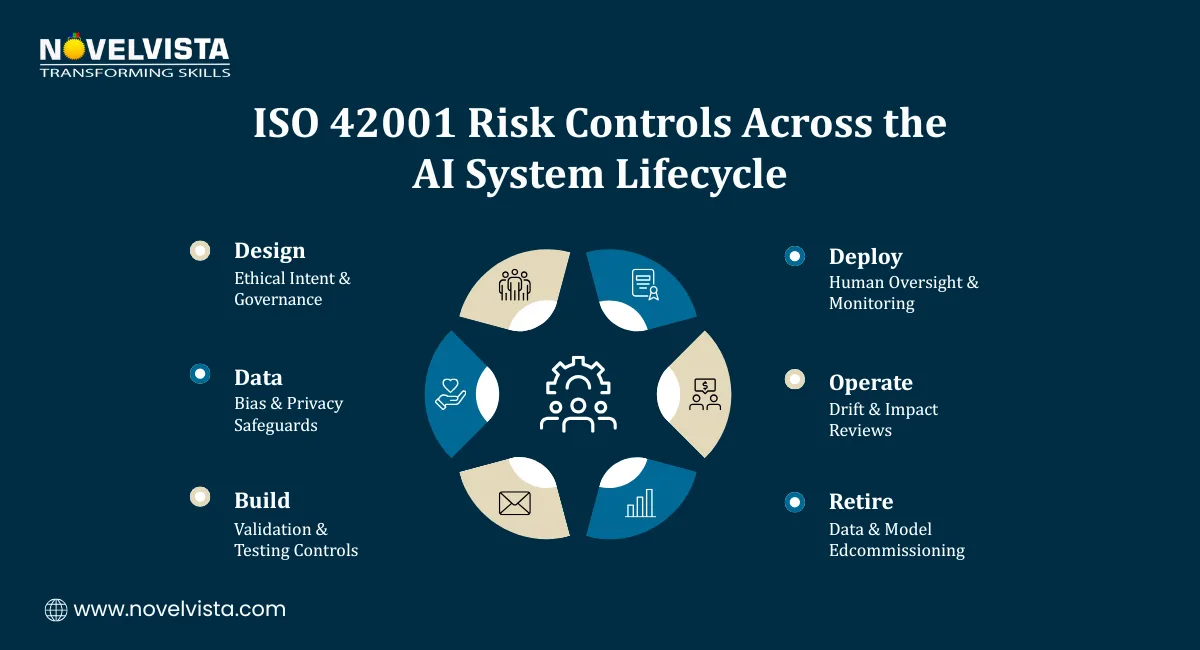

At its core, ISO 42001 ensures that AI systems remain safe, explainable, lawful, and aligned with organizational values, while promoting responsible innovation. Central to achieving these objectives is lifecycle risk management, which acts as the backbone by ensuring AI risks are identified, assessed, and controlled across the entire AI system lifecycle, not just at the point of deployment.

AI systems introduce risks that traditional IT systems do not. These include:

Algorithmic bias

Lack of explainability

Data privacy violations

Unintended decision-making outcomes

Without a lifecycle-based approach, organizations often end up addressing AI risks after incidents occur, which can result in regulatory penalties, reputational damage, and a significant loss of stakeholder trust. By embedding lifecycle risk management in ISO 42001 into overall AI governance, organizations shift from a reactive to a proactive risk posture. This approach enables early risk detection, strengthens compliance readiness, improves AI reliability and fairness, and builds stronger stakeholder confidence. In essence, lifecycle risk management transforms AI risk from an unpredictable liability into a controlled, continuously managed process that supports responsible and sustainable AI adoption.

The AI risk management lifecycle in ISO 42001 follows a continuous improvement model similar to Plan-Do-Check-Act (PDCA).

Risk Identification is the first and most critical step in lifecycle risk management, where organizations systematically identify risks related to data quality and bias, model behavior, ethical and legal impacts, and security vulnerabilities. By thoroughly examining these areas early in the AI system lifecycle, organizations ensure that potential issues are surfaced proactively, reducing the chances of hidden risks going unnoticed and escalating later.

Risk Analysis and Evaluation involves assessing each identified risk based on its likelihood, severity of impact, and regulatory and ethical implications. This structured evaluation allows organizations to prioritize high-risk AI use cases, ensuring that the most critical risks are addressed first and managed effectively throughout the AI system lifecycle.
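To make the evaluation step concrete, here is a minimal sketch of a risk register scored on likelihood and severity. The 1 to 5 scales, the multiplicative score, the doubling for regulatory risks, and every name in the code are assumptions chosen for illustration; ISO 42001 does not prescribe a scoring formula.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int           # assumed scale: 1 (rare) to 5 (almost certain)
    severity: int             # assumed scale: 1 (negligible) to 5 (critical)
    regulatory: bool = False  # True if the risk has legal or ethical implications

    def score(self) -> int:
        # Hypothetical rule: likelihood x severity, doubled for regulatory risks.
        base = self.likelihood * self.severity
        return base * 2 if self.regulatory else base


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Return risks ordered from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score(), reverse=True)


# Illustrative entries only; a real register comes from risk identification.
register = [
    Risk("Training data bias", likelihood=4, severity=4, regulatory=True),
    Risk("Model drift after deployment", likelihood=3, severity=3),
    Risk("Unexplainable credit decision", likelihood=2, severity=5, regulatory=True),
]

for risk in prioritize(register):
    print(f"{risk.score():>3}  {risk.name}")
```

With these example values, the bias risk ranks first, the explainability risk second, and drift last, which is the kind of ordering a team could use to decide what to treat first.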

Risk Treatment is the process where organizations implement appropriate measures to mitigate identified risks, including technical safeguards, human-in-the-loop oversight, and policy and governance controls. These actions align directly with responsible AI risk controls, ensuring that AI systems operate safely, ethically, and in compliance with ISO 42001.
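Human-in-the-loop oversight is often implemented as a routing rule in front of the model's output. The sketch below is one possible shape for such a rule, not a requirement of the standard; the `Decision` fields, the `route` function, and the 0.85 threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    outcome: str        # what the model proposes, e.g. "approve" or "reject"
    confidence: float   # model confidence in [0, 1]
    high_impact: bool   # True if the decision materially affects a person


# Assumed threshold; in practice it would be set during risk evaluation.
REVIEW_THRESHOLD = 0.85


def route(decision: Decision) -> str:
    """Return "auto" to apply the decision or "human_review" to escalate it."""
    if decision.high_impact or decision.confidence < REVIEW_THRESHOLD:
        return "human_review"
    return "auto"


print(route(Decision("approve", 0.97, high_impact=False)))  # auto
print(route(Decision("reject", 0.97, high_impact=True)))    # human_review
print(route(Decision("approve", 0.60, high_impact=False)))  # human_review
```

Keeping the rule this simple makes it easy to document and audit: every escalation can be traced to either the impact flag or the confidence threshold.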


AI system lifecycle management is tightly integrated with risk management under ISO 42001.

The design and development stage focuses on identifying and mitigating risks early in the AI system lifecycle. Key risks addressed at this stage include poor data selection, unethical design choices, and lack of transparency. Implementing robust risk controls during design and development helps prevent issues before deployment, ensuring AI systems are safe, ethical, and aligned with organizational standards.

Data Management Risks are critical because data forms the foundation of AI systems. ISO 42001 emphasizes data governance, bias mitigation, and privacy and consent management to ensure that data is accurate, ethical, and compliant. Implementing effective data controls at this stage significantly reduces long-term AI risk exposure and supports responsible AI deployment.
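One simple data control for bias mitigation is to compare how often each group receives a positive label before the data is used for training. The sketch below computes per-group positive rates and the gap between them on toy data; the function names, the record layout, and the "group" attribute are made up for the example, and what counts as an acceptable gap is a policy decision the code does not make.

```python
from collections import defaultdict


def positive_rate_by_group(records, group_key, label_key):
    """Share of positive labels per group in a list of dict records."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for row in records:
        group = row[group_key]
        totals[group] += 1
        positives[group] += 1 if row[label_key] else 0
    return {g: positives[g] / totals[g] for g in totals}


def parity_gap(rates):
    """Largest difference in positive rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Toy data: hypothetical loan records with a made-up "group" attribute.
data = [
    {"group": "A", "approved": True},
    {"group": "A", "approved": True},
    {"group": "A", "approved": False},
    {"group": "A", "approved": True},
    {"group": "B", "approved": True},
    {"group": "B", "approved": False},
    {"group": "B", "approved": False},
    {"group": "B", "approved": False},
]

rates = positive_rate_by_group(data, "group", "approved")
gap = parity_gap(rates)
print(rates)  # {'A': 0.75, 'B': 0.25}
print(gap)    # 0.5
```

A gap this large in historical labels would be worth investigating before training, since a model fitted to the data is likely to reproduce it.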

Once deployed, AI systems may:

Drift from expected behavior

Produce biased outcomes

Face cybersecurity threats

Lifecycle monitoring ensures ongoing compliance and performance.
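Drift monitoring can be approximated by comparing the distribution of a feature or model score in production against a baseline captured at deployment. The sketch below uses the Population Stability Index (PSI) as one common way to do this; the choice of PSI, the ten bins, and the 0.25 alert level are assumptions for illustration rather than anything ISO 42001 specifies.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


DRIFT_THRESHOLD = 0.25  # assumed alert level, a commonly used rule of thumb

baseline = [i / 100 for i in range(100)]  # scores captured at deployment
live = [x + 0.5 for x in baseline]        # simulated shifted production scores

print(psi(baseline, baseline))                # 0.0
print(psi(baseline, live) > DRIFT_THRESHOLD)  # True
```

In a monitoring job, a result above the threshold would typically open a review rather than trigger an automatic rollback, so that a person decides whether retraining or a control change is needed.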

ISO 42001 places strong emphasis on responsible AI risk controls, ensuring AI systems remain ethical and trustworthy.

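Key controls include the technical safeguards, human-in-the-loop oversight, and policy and governance controls applied during risk treatment.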

These controls ensure AI systems align with both regulatory expectations and societal values.

Implementing lifecycle risk management requires more than policies; it requires cultural alignment.

Successful organizations focus on:

Leadership commitment to responsible AI

Cross-functional collaboration between AI, legal, and risk teams

Clear documentation and audits aligned with ISO 42001

Continuous learning and improvement

When done right, lifecycle risk management becomes a strategic advantage, not a compliance burden.

So, what is lifecycle risk management in ISO 42001?

It is a comprehensive, continuous approach to managing AI risks across every stage of the AI system lifecycle, ensuring safety, compliance, and trust.

By integrating the AI risk management lifecycle, strengthening AI system lifecycle management, and applying responsible AI risk controls, organizations can confidently deploy AI while meeting ethical and regulatory expectations.

In a world where AI trust defines business success, lifecycle risk management is no longer optional; it is essential. This foundation also sets the stage for an effective ISO 42001 Exam Strategy Guide, helping professionals translate lifecycle risk management concepts into exam and audit success.

Ready to take your AI governance expertise to the next level?

Strengthen your understanding of lifecycle risk management in ISO 42001 by enrolling in NovelVista’s ISO/IEC 42001 Lead Auditor Certification Training. This course is designed to equip professionals with practical auditing skills, real-world AI governance insights, and globally recognized credentials. Ideal for AI leaders, risk professionals, compliance teams, and auditors, it empowers you to confidently assess AI Management Systems, apply responsible AI risk controls, and lead ISO 42001 audits with authority.

Start your ISO 42001 Lead Auditor journey today and become a trusted expert in responsible AI governance.
