Last Updated On 25/03/2026

AI systems don’t fail loudly. They fail quietly, through biased outputs, unclear decisions, security gaps, or models behaving differently after deployment. And by the time someone notices, trust is already damaged.

This is where ISO 42001 Risk Management steps in.

ISO 42001 Risk Management forms the backbone of an AI Management System (AIMS). It helps organizations identify, assess, and control risks across the entire AI lifecycle, before those risks turn into legal, ethical, or reputational problems.

In this guide, you’ll get a clear and practical view of:

No heavy theory. Just real clarity on how AI risk management works in practice.

Risk Management is not about stopping innovation. It’s about making sure AI behaves as expected, stays within ethical boundaries, and aligns with laws and business goals.

At its core, Risk Management covers risks across the full AI lifecycle, including:

It focuses on a structured, auditable approach where risks are:

What makes Risk Management different is its strong link to responsible AI principles like fairness, transparency, accountability, and human oversight. These are not optional ideas; they are built into how risks are evaluated and controlled.

This creates a shared language for developers, managers, compliance teams, and auditors to talk about AI risk without confusion.

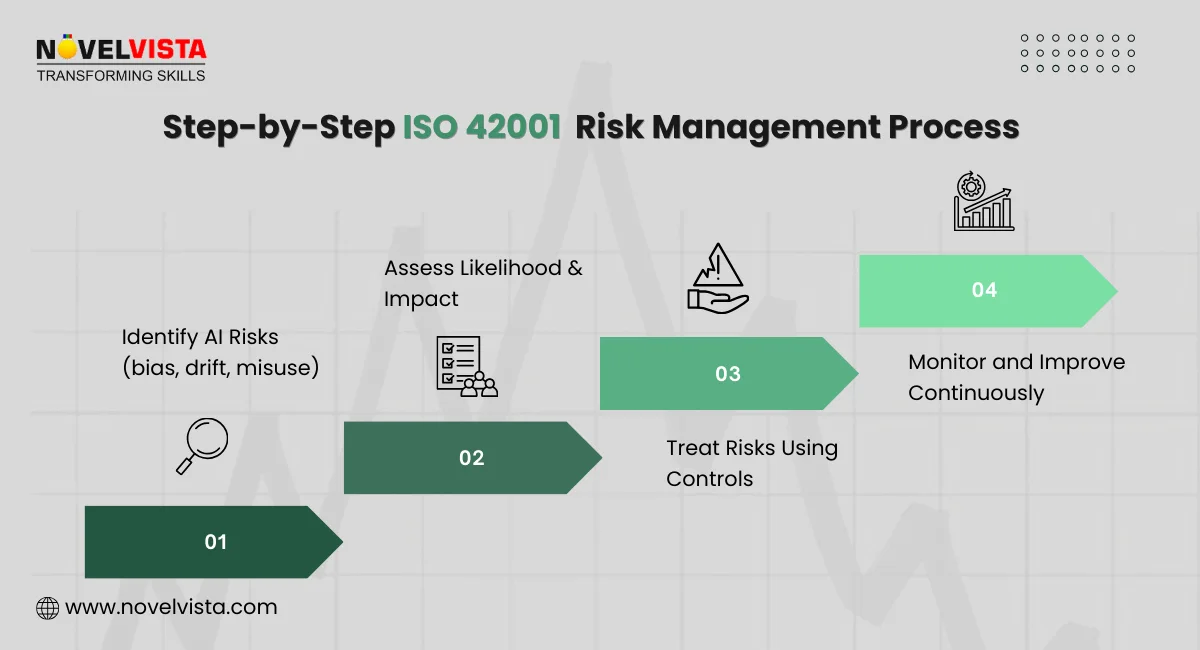

The Risk Management framework is designed to be simple, repeatable, and auditable. It doesn’t rely on guesswork or one-time reviews.

Here’s how the framework works in practice:

Organizations identify AI-specific risks linked to:

This step ensures no major risk is ignored just because it feels technical or complex.

Each identified risk is evaluated based on:

This helps teams focus on what truly matters instead of treating all risks the same.

Controls are selected to reduce, manage, or accept risks using ISO 42001 guidance and Annex A controls. Ownership is clearly assigned.

Risks don’t stay static. Risk Management requires ongoing monitoring to catch drift, misuse, or new threats over time.
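One way to make ongoing monitoring concrete is a scheduled drift check. The sketch below compares a baseline sample of a model input or score against recent production values using the Population Stability Index (PSI). PSI is simply a common choice for illustration, not a metric the standard names, and the function name, bin count, and the 0.25 alert threshold (a widely used rule of thumb) are all assumptions.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a baseline sample and a current sample (both non-empty)."""
    lo, hi = min(baseline), max(baseline)
    # Fall back to a width of 1.0 if the baseline is constant.
    width = (hi - lo) / bins or 1.0

    def shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    base, cur = shares(baseline), shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


# Hypothetical usage: scores captured at validation time vs. last week's scores.
ALERT_THRESHOLD = 0.25  # assumed rule of thumb, tune to your own risk criteria
validation_scores = [i / 100 for i in range(100)]
recent_scores = [0.5 + i / 200 for i in range(100)]
if population_stability_index(validation_scores, recent_scores) > ALERT_THRESHOLD:
    print("Drift alert: review this risk and its controls")
```

Logging each check with its date and result also gives auditors evidence that monitoring actually runs, rather than existing only in a policy.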

Annex A plays a key role here by supporting:

The framework steps outlined here mirror the approach used in ISO-aligned AI risk workshops and audit simulations we conduct. These steps are designed to be repeatable, auditable, and practical for organizations managing live AI systems across multiple environments.

To strengthen your understanding of AI risk governance, it also helps to look beyond ISO 42001. The ISO 31000 risk management framework provides a broader, organization-wide approach to identifying, assessing, and treating risks. Used together, ISO 31000 complements ISO 42001 by giving lead auditors a solid foundation for consistent and mature risk decision-making across AI and non-AI domains.

Risk Management becomes most powerful when applied step by step. This structured process is what auditors and regulators expect to see.

Not every AI risk is theoretical. Many are already showing up in real systems.

Common risks identified under ISO 42001 Risk Management include:

These risks are mapped directly to:

This ensures the risk register reflects real operational exposure, not abstract concerns.

Once risks are identified, Risk Management requires a structured assessment.

For high-risk AI systems, organizations conduct AI Impact Assessments (AIIA). These help evaluate:

Risks are typically scored using:

From an audit perspective, documentation matters here. Auditors expect:

This step turns AI risk management into something measurable and defensible.
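To show what "measurable and defensible" can look like, here is a minimal sketch of a scored risk register. It assumes a likelihood-times-impact score on 1 to 5 scales and illustrative band edges; the class name, field names, and thresholds are hypothetical and should be replaced by your own documented risk criteria. The example risks are the ones mentioned at the start of this guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIRisk:
    risk_id: str
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)
    owner: str

    def __post_init__(self) -> None:
        # Reject scores outside the agreed scale so the register stays consistent.
        for name in ("likelihood", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact

    @property
    def level(self) -> str:
        # Band edges are illustrative; derive them from your risk appetite.
        if self.score >= 15:
            return "high"
        if self.score >= 8:
            return "medium"
        return "low"


def prioritize(register: list[AIRisk]) -> list[AIRisk]:
    """Return risks ordered from highest to lowest score."""
    return sorted(register, key=lambda r: r.score, reverse=True)


register = [
    AIRisk("R-002", "Model behaves differently after deployment", 3, 3, "MLOps lead"),
    AIRisk("R-001", "Biased outputs", 4, 5, "ML lead"),
    AIRisk("R-003", "Unclear decision rationale", 2, 3, "Product owner"),
]
for risk in prioritize(register):
    print(risk.risk_id, risk.score, risk.level, risk.owner)
```

Keeping the scale and band edges in one place makes it easier to show an auditor how every score was reached.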

After assessment, risks must be treated, not ignored.

Risk Management allows several treatment options:

Typical control measures include:

From a training and audit perspective, effective risk treatment is where most organizations struggle. We consistently see stronger audit outcomes when controls are clearly owned, mapped to Annex A, and supported by operational evidence rather than policy statements alone.
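The three conditions above (clear ownership, an Annex A mapping, and operational evidence) are easy to check automatically before an audit. The following is a rough sketch only: the `Control` record, its fields, and the `ANNEX-A-REF` placeholder are hypothetical, and real entries would carry the actual Annex A control references your organization has selected.

```python
from dataclasses import dataclass, field


@dataclass
class Control:
    control_id: str
    owner: str = ""
    annex_a_refs: list[str] = field(default_factory=list)
    # Operational evidence, e.g. monitoring logs or test reports,
    # as opposed to policy statements alone.
    evidence: list[str] = field(default_factory=list)


def audit_gaps(control: Control) -> list[str]:
    """List the reasons a control would likely draw an audit finding."""
    gaps = []
    if not control.owner.strip():
        gaps.append("no owner assigned")
    if not control.annex_a_refs:
        gaps.append("not mapped to Annex A")
    if not control.evidence:
        gaps.append("no operational evidence")
    return gaps


controls = [
    # "ANNEX-A-REF" is a placeholder, not a real control identifier.
    Control("C-01", "MLOps lead", ["ANNEX-A-REF"], ["drift-check-log"]),
    Control("C-02", "Compliance manager"),
]
for control in controls:
    print(control.control_id, audit_gaps(control) or "ready")
```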

ISO 42001 Risk Management doesn’t stop at policies. It extends into daily operations.

Risk controls are embedded directly into:

Key operational practices include:

Many organizations also align Risk Management with:

This creates an integrated approach where AI risk, security risk, and compliance work together instead of in silos.

Risk Management is not a one-time checklist. It works as a living system, and the Plan-Do-Check-Act (PDCA) cycle is what keeps it active, relevant, and trustworthy as AI systems evolve.

Here’s how it works in real terms.

Plan: At this stage, organizations define the AI context, understand where AI is used, and identify risks that could affect people, business outcomes, or compliance. This includes leadership commitment, defining risk appetite, and planning controls for AI systems that truly matter.

Do: This is where policies turn into action. Risk controls are applied across data collection, model training, testing, deployment, and usage. Teams implement human oversight, testing routines, approval workflows, and clear accountability for AI decisions.
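As one example of human oversight in practice, low-confidence model outputs can be routed to a reviewer instead of being applied automatically. This is a minimal sketch under stated assumptions: the `Decision` record, the `route_decision` function, and the 0.9 threshold are hypothetical and would need to reflect your own approval workflow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    prediction: str
    confidence: float
    needs_human_review: bool


def route_decision(prediction: str, confidence: float, threshold: float = 0.9) -> Decision:
    """Flag a prediction for human review when confidence is below the threshold."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return Decision(prediction, confidence, needs_human_review=confidence < threshold)


# Hypothetical usage: only confident outputs proceed without review.
for prediction, confidence in [("approve", 0.97), ("reject", 0.62)]:
    decision = route_decision(prediction, confidence)
    route = "human review" if decision.needs_human_review else "automatic"
    print(prediction, confidence, route)
```

Recording each routed decision along with the reviewer's outcome supports the accountability this stage calls for.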

Check: Organizations review AI risk performance using audits, KPIs, logs, and monitoring results. Lead auditors verify whether risks are being managed as planned and whether controls are actually reducing real-world impact, not just ticking boxes.

Act: Based on findings, organizations adjust controls, retrain models, update risk registers, and strengthen governance. This keeps Risk Management aligned with new threats, regulations, and business changes.

The PDCA cycle explained here reflects how ISO management system standards are evaluated globally. Lead auditors are trained to look for this continuous improvement loop as evidence that AI risk management is active, evolving, and aligned with changing regulations and technologies.

For lead auditors, Risk Management changes how audits are planned and executed. It goes beyond checking documents and looks deeply into how AI risks are handled in practice.

Key responsibilities include:

Strong Risk Management gives auditors confidence that AI governance is real, not just written.

When implemented well, Risk Management delivers more than compliance. It supports business trust, operational stability, and long-term AI success.

Clear accountability for AI decisions: Roles, responsibilities, and escalation paths are defined, making it easier to explain why AI systems behave the way they do.

Better readiness for regulations like the EU AI Act: Organizations with structured AI risk controls are far better prepared for regulatory reviews and external scrutiny.

Reduced ethical, legal, and security exposure: Risks such as bias, misuse, and unintended harm are identified early and controlled before they cause damage.

Stronger customer and stakeholder trust: Transparent risk management builds confidence among customers, partners, and regulators who use or are affected by AI systems.

These benefits make Risk Management a business enabler, not just a compliance tool.

Organizations often ask what makes implementation successful. The answer lies in practical choices, not complex tools.

Even mature organizations face challenges when managing AI risks.

Rapidly evolving AI threats: New attack methods, data issues, and misuse patterns emerge quickly, requiring frequent updates to risk controls.

Limited skills and resources: AI risk management needs cross-functional expertise, which can be hard to build or maintain.

Industry-specific risk differences: Healthcare, finance, and public services face very different AI risks, making generic controls ineffective.

The challenges listed here are drawn from repeated discussions with organizations preparing for ISO 42001 audits. This is why trained lead auditors play a critical role in translating complex AI risks into controls that remain practical, auditable, and effective.

ISO 42001 Risk Management provides a clear, structured way to manage AI risks across the full lifecycle. It helps organizations build ethical, transparent, and auditable AI systems while giving lead auditors a solid framework to assess real-world controls. As AI continues to scale, this approach becomes essential for long-term trust and compliance.

This content is grounded in internationally recognized standards, structured audit practices, and real AI risk scenarios used in professional training environments. The intent is to provide guidance that organizations and auditors can confidently apply in real assessments.
