
Last Updated On 13/04/2026

Artificial Intelligence is no longer just a competitive advantage; it’s a business necessity. Today, more than 80% of organizations are actively investing in or deploying AI-driven systems to enhance efficiency, accelerate decision-making, and deliver better customer experiences. But as AI adoption accelerates, so does a critical challenge: how do you effectively control and manage the risks that come with it?

Behind every powerful AI system lies a layer of hidden vulnerabilities: biased algorithms influencing decisions, model drift leading to inaccurate outputs, data privacy concerns, and growing regulatory pressure. These aren’t just technical issues; they’re business risks that can impact trust, compliance, and long-term success.

This is where ISO/IEC 42001 steps in as a game-changer. As the world’s first AI management system standard, it provides a structured framework to govern AI responsibly. At the core of this framework are the AI risk treatment requirements in Clause 6.1.3, which go beyond risk identification and focus on how organizations actively treat, control, and reduce AI risks in a systematic way.

Whether you’re developing AI models, managing AI systems, or ensuring compliance, understanding the AI risk treatment requirements in Clause 6.1.3 is no longer optional; it’s essential for building trustworthy, resilient, and compliant AI.

Let’s break it down and see how it works in practice.

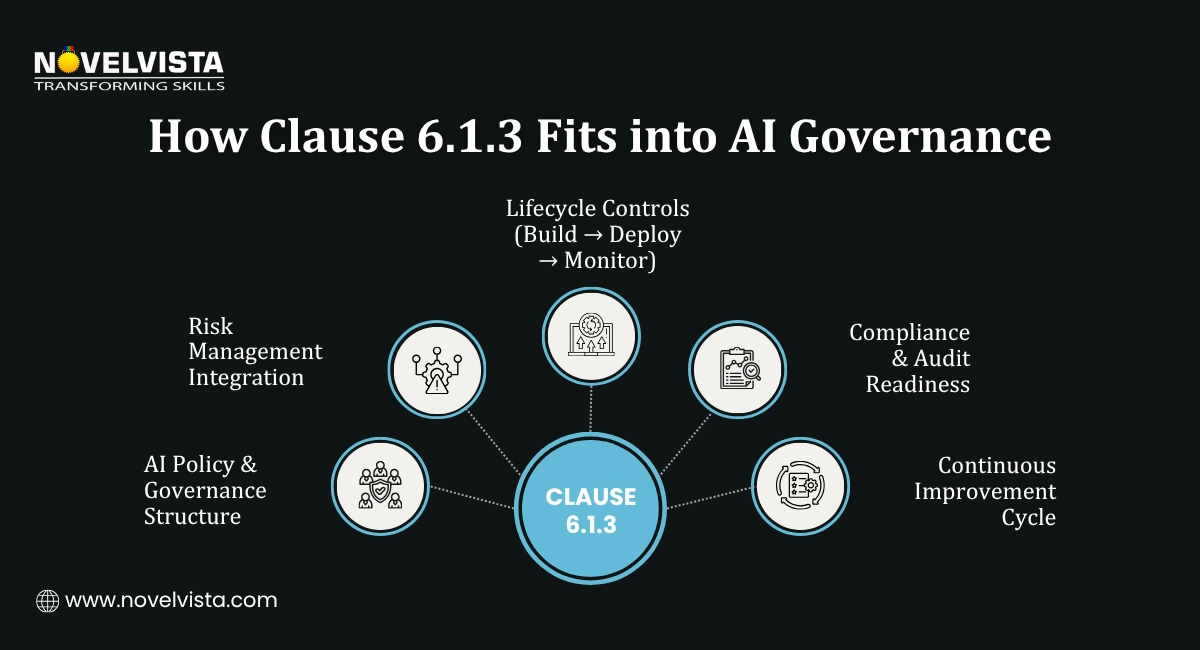

Within ISO/IEC 42001, Clause 6 focuses on planning, particularly how organizations address risks and opportunities in AI systems.

AI risk treatment requirements clause 6.1.3 specifically deals with:

How identified AI risks should be treated, controlled, and monitored.

It requires organizations to:

In short, Clause 6.1.3 ensures that risk management is not theoretical; it’s actionable and measurable.

AI risks are fundamentally different from traditional IT risks. They evolve over time, depend on data quality, and can produce unpredictable outcomes.

That’s why AI risk treatment requirements clause 6.1.3 is critical in ISO/IEC 42001.

Organizations must demonstrate ethical and fair AI usage.

Aligns with global AI regulations and governance expectations.

Helps prevent system failures, incorrect outputs, and business disruptions.

Transparent risk handling improves customer and partner confidence.

Without structured AI risk mitigation measures, even advanced AI systems can become liabilities.

To comply with AI risk treatment requirements clause 6.1.3, organizations must implement a systematic process.

Define how each identified risk will be addressed through a formal AI risk treatment plan.

Organizations can:

Apply appropriate artificial intelligence risk controls such as:

Maintain records of:

Continuously evaluate whether the implemented measures are effective in achieving AI system risk reduction.

Ensure alignment between selected controls and Annex A by referencing the Statement of Applicability (SoA), which documents which controls are applied, justified, or excluded—providing transparency and audit readiness for your AI risk treatment plan.
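To make the Statement of Applicability idea more concrete, here is a minimal sketch of how SoA entries could be recorded and checked for the gaps an auditor would likely ask about. The `SoAEntry` fields, the `soa_gaps` helper, and the `CTRL-…` and `R-…` identifiers are illustrative assumptions; they are not defined by ISO/IEC 42001 and do not correspond to real Annex A control numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoAEntry:
    control_id: str            # hypothetical ID, not a real Annex A reference
    title: str
    applied: bool              # True = applied, False = excluded
    justification: str         # why the control is applied or excluded
    linked_risks: tuple = ()   # risk IDs this control is meant to treat


def soa_gaps(entries, treated_risks):
    """List entries without a justification and risks with no applied control."""
    problems = []
    covered = set()
    for entry in entries:
        if not entry.justification.strip():
            problems.append(f"{entry.control_id}: missing justification")
        if entry.applied:
            covered.update(entry.linked_risks)
    for risk_id in sorted(set(treated_risks) - covered):
        problems.append(f"{risk_id}: no applied control linked")
    return problems


entries = [
    SoAEntry("CTRL-01", "Bias testing before release", True,
             "Treats R-001", ("R-001",)),
    SoAEntry("CTRL-02", "Third-party model review", False, ""),
]
print(soa_gaps(entries, ["R-001", "R-002"]))
# ['CTRL-02: missing justification', 'R-002: no applied control linked']
```

Keeping the justification next to each control, including excluded ones, is what makes the record useful as audit evidence rather than a simple checklist.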

A well-defined AI risk treatment plan is central to Clause 6.1.3 compliance.

Ensure the plan supports your AI Management System goals.

Each identified risk should have a corresponding control or mitigation strategy.

Clearly define roles and accountability.

Set deadlines for implementing risk treatments.

Track KPIs to evaluate AI system risk reduction.

This structured approach ensures consistency and audit readiness under ISO/IEC 42001.
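The plan elements described above (a control per risk, an owner, a deadline, and a KPI) can be captured in a simple record. The sketch below is one possible shape, not a format prescribed by the standard; the field names, the sample risks, and the "lower is better" KPI convention are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class TreatmentItem:
    risk_id: str
    description: str
    control: str                        # mitigation mapped to the risk
    owner: str                          # accountable role
    due: date                           # implementation deadline
    kpi: str                            # metric used to judge effectiveness
    kpi_target: float                   # assumption: lower values are better
    kpi_actual: Optional[float] = None  # None until first measured


def review(items, today):
    """Return {risk_id: [notes]} for items that need attention."""
    findings = {}
    for item in items:
        notes = []
        if not item.owner:
            notes.append("no owner assigned")
        if item.kpi_actual is None:
            if today > item.due:
                notes.append("overdue, KPI not yet measured")
        elif item.kpi_actual > item.kpi_target:
            notes.append("KPI above target")
        if notes:
            findings[item.risk_id] = notes
    return findings


plan = [
    TreatmentItem("R-001", "Biased loan decisions", "Quarterly fairness audit",
                  "Head of Data Science", date(2026, 3, 31),
                  "approval_rate_gap", 0.05, 0.08),
    TreatmentItem("R-002", "Model drift", "Weekly drift report",
                  "", date(2026, 6, 30), "psi", 0.2),
]
print(review(plan, date(2026, 4, 13)))
# {'R-001': ['KPI above target'], 'R-002': ['no owner assigned']}
```

A review like this could be run on a schedule so that missing owners, slipped deadlines, and ineffective controls surface before an audit does.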


Effective AI risk mitigation measures are essential for managing evolving AI-specific risks—especially as AI systems become more autonomous, generative, and deeply integrated into business operations in 2026.

These measures align with the AI risk treatment requirements in Clause 6.1.3, strengthen governance, and support continuous AI system risk reduction in increasingly complex AI environments.

Implementing artificial intelligence risk controls requires integrating them into the AI lifecycle.

1. Bias Detection Tools

Identify and reduce discrimination in AI outputs.

2. Explainability Mechanisms

Ensure AI decisions can be understood and justified.

3. Monitoring Systems

Track performance and detect anomalies.

4. Access and Security Controls

Prevent unauthorized access and data breaches.

When properly implemented, these controls drive effective AI system risk reduction.
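As one example of what a bias detection control might compute, the sketch below measures the demographic parity difference: the gap between the highest and lowest rate of positive predictions across groups. This is only one of several fairness metrics, and ISO/IEC 42001 does not mandate it; the sample data and the `0.1` threshold are hypothetical values chosen for illustration.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Share of positive (1) predictions per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group selection rate."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


preds = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

THRESHOLD = 0.1  # hypothetical tolerance; set according to your own risk criteria
gap = demographic_parity_difference(preds, groups)
print(f"gap={gap:.2f} flagged={gap > THRESHOLD}")
# gap=0.50 flagged=True
```

A flagged result would typically feed back into the risk treatment plan as evidence that the control is not yet effective.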

ISO/IEC 42001 emphasizes continuous improvement, making AI system risk reduction an ongoing process.

Regularly update and clean datasets.

Test models against real-world scenarios.

Ensure ethical AI outcomes.

Protect against evolving cyber threats.

Incorporate user and system feedback for improvement.

These strategies support long-term compliance with the AI risk treatment requirements in Clause 6.1.3. ISO 42001 also addresses generative AI and privacy risks by providing structured governance, risk management frameworks, and robust controls for secure, ethical, and compliant AI deployment.
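For the monitoring side of ongoing risk reduction, a drift check is a common building block. The sketch below computes the Population Stability Index (PSI) between a baseline sample and current data using only the standard library. PSI is a swapped-in technique chosen for illustration, not something the standard specifies, and the binning scheme, the `eps` floor, and the `0.2` alert level (a widely used rule of thumb) are assumptions.

```python
import math


def psi(baseline, current, bins=10, eps=1e-6):
    """Population Stability Index using equal-width bins over the baseline range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # floor empty bins at eps so the logarithm is always defined
        return [max(c / len(values), eps) for c in counts]

    expected, actual = shares(baseline), shares(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


baseline = [i / 100 for i in range(100)]       # scores at validation time
shifted = [0.5 + i / 200 for i in range(100)]  # scores observed in production

ALERT_LEVEL = 0.2  # rule-of-thumb threshold, treat as an assumption
print(psi(baseline, baseline) > ALERT_LEVEL)   # False: identical data, no drift
print(psi(baseline, shifted) > ALERT_LEVEL)    # True: distribution has moved
```

An alert from a check like this is a trigger to revisit the affected risk and its treatment, which is what keeps risk reduction continuous rather than a one-off exercise.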

Organizations often face hurdles when applying AI risk treatment requirements clause 6.1.3.

Overcoming these challenges requires both technical knowledge and organizational alignment.

To effectively implement AI risk treatment requirements clause 6.1.3, follow these best practices:

Make risk treatment part of the AI lifecycle.

Ensure audit readiness.

Improve monitoring and reporting efficiency.

Involve technical, legal, and business teams.

Adapt to changing AI risks and regulations.

As AI adoption accelerates across industries, the real differentiator is no longer just innovation; it’s how responsibly that innovation is governed. This is where the AI risk treatment requirements in Clause 6.1.3 of ISO/IEC 42001 become indispensable, offering a structured and actionable roadmap to move from risk awareness to real risk control.

Organizations that invest in a well-defined AI risk treatment plan, implement robust artificial intelligence risk controls, and commit to continuous AI system risk reduction are not just managing risks; they’re building resilient, future-ready AI ecosystems. These efforts translate into stronger compliance, improved decision-making, and, most importantly, greater stakeholder trust.

In a landscape where AI risks can directly impact reputation, operations, and regulatory standing, taking a proactive approach is no longer optional. The AI risk treatment requirements in Clause 6.1.3 empower organizations to stay ahead, turning uncertainty into control and complexity into clarity.

Because in the age of AI, true success isn’t measured by how advanced your systems are but by how safe, ethical, and trustworthy they remain over time.

Ready to take your AI governance expertise to the next level?

Join NovelVista’s ISO/IEC 42001 Lead Auditor Certification Training and gain practical auditing skills, real-world insights into AI risk management, and globally recognized credentials. Designed for AI professionals, compliance leaders, and IT decision-makers, this course equips you to confidently assess AI risk treatment requirements clause 6.1.3, evaluate AI risk treatment plans, and implement effective artificial intelligence risk controls aligned with ISO/IEC 42001.

Start your ISO/IEC 42001 Lead Auditor journey today!
