
Last Updated On 05/03/2026

A lot of organizations are already using generative AI. Very few can clearly explain who approves it, what data it learns from, or how its risks are controlled. That gap is exactly where questions start coming up from regulators, customers, and internal leadership. How does ISO 42001 address generative AI risks? By putting structure around decisions that are often made too fast and without oversight.

This article explains how ISO 42001 manages generative AI risks and privacy risks in AI using clear clauses, Annex A controls, and risk-based governance that works across the full AI lifecycle.

| Area | How ISO 42001 Handles It |
| --- | --- |
| Generative AI risks | Identified, assessed, treated, and monitored |
| Privacy risks | Built into design, training, and deployment |
| Governance | AI Management System (AIMS) |
| Controls | Annex A AI-specific safeguards |
| Outcome | Accountable, auditable AI use |

Generative AI brings speed and scale, but it also introduces risks that traditional IT controls were never designed to handle.

Common generative AI risks include:

At the same time, privacy risks are increasing. AI systems often rely on:

This combination makes governance essential. Without it, organizations struggle to answer simple questions like who owns AI risks or how privacy is protected.

So, how does ISO 42001 address generative AI risks? It introduces a formal AI Management System (AIMS) that governs AI use from design to retirement, instead of relying on isolated policies.


ISO/IEC 42001 is the first international standard focused entirely on AI management. It defines how organizations establish, implement, maintain, and improve an AI Management System (AIMS).

The structure follows familiar clauses (4 to 10), aligned with other ISO management standards:

What makes ISO 42001 practical is Annex A, which contains 38 AI-specific controls. These controls cover:

Together, the clauses and Annex A controls create a system that can manage both innovation and accountability. This is the baseline that lets organizations explain, in a consistent and auditable way, how ISO 42001 addresses privacy risks in AI.

To understand the scope, eligibility, and career value, explore our detailed guide on What Is ISO 42001 Lead Auditor Certification.

ISO 42001 does not treat generative AI as “just another tool.” It requires risks to be understood in the context of each AI use case.

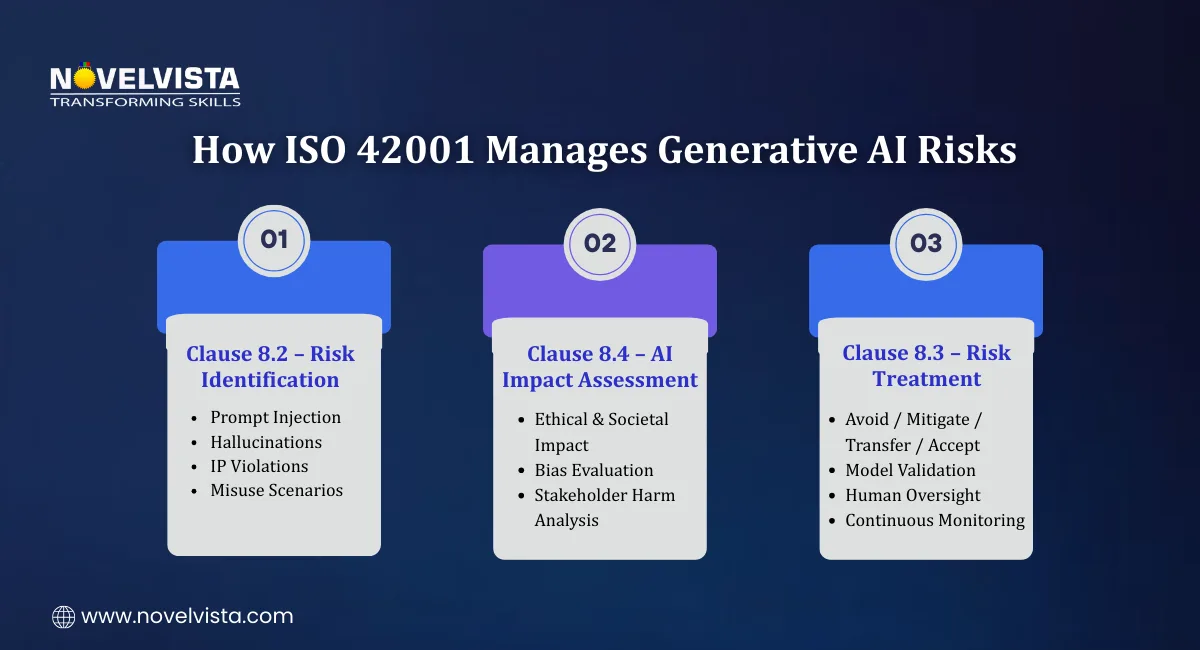

Clause 8.2 requires organizations to identify and assess AI-specific risks before and during use.

For generative AI, this includes risks such as:

Risks must be assessed based on:

This structured assessment is the first part of how ISO 42001 addresses generative AI risks: it ensures risks are identified early, not after something goes wrong.
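As a rough illustration of what a structured assessment can look like in practice, the sketch below keeps a small risk register and ranks entries by a likelihood × impact score. ISO 42001 does not prescribe this scoring method; the 1 to 5 scales, the thresholds, the `AIRisk` class, and the sample risks are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class AIRisk:
    """One entry in a hypothetical AI risk register."""
    name: str
    use_case: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    def __post_init__(self) -> None:
        # Reject values outside the assumed 1-5 scales.
        for value in (self.likelihood, self.impact):
            if not 1 <= value <= 5:
                raise ValueError("likelihood and impact must be between 1 and 5")

    def score(self) -> int:
        return self.likelihood * self.impact

    def level(self) -> str:
        # Thresholds are illustrative; each organization sets its own criteria.
        s = self.score()
        if s >= 15:
            return "high"
        if s >= 8:
            return "medium"
        return "low"


# Sample entries, invented for illustration only.
register = [
    AIRisk("Fabricated output in customer replies", "support chatbot", 4, 4),
    AIRisk("Personal data entered into prompts", "internal assistant", 3, 5),
    AIRisk("Outdated answers after model update", "internal assistant", 2, 3),
]

# Highest-scoring risks first, so they are reviewed before deployment.
ranked = sorted(register, key=lambda r: r.score(), reverse=True)
for r in ranked:
    print(f"{r.level():6} {r.score():2}  {r.name} ({r.use_case})")
```

Tying each entry to a use case reflects the point above that risks are assessed in the context of each AI use case rather than once for the whole organization.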

Clause 8.4 introduces AI impact assessments, similar in intent to DPIAs but broader in scope.

These assessments evaluate:

This step forces organizations to look beyond technical accuracy. It addresses questions regulators increasingly ask, including how privacy risks in AI are handled and how AI decisions affect people.

Once risks are identified, Clause 8.3 requires organizations to treat them using clear strategies:

For generative AI, controls often focus on:

Continuous monitoring is required because models evolve over time. This ensures new risks are detected as AI systems learn or are updated.

Together, Clauses 8.2, 8.3, and 8.4 show how ISO 42001 addresses generative AI risks: through structured, repeatable governance.
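The treat-then-monitor cycle can be sketched as a record of the chosen treatment plus a rule for when a risk must be looked at again. This is a minimal sketch, not something the standard defines: the four treatment options are the ones commonly used in risk management, and the 90-day interval, the `TreatedRisk` class, and the `needs_review` helper are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class Treatment(Enum):
    # Commonly used treatment options; assumed here, not quoted from the standard.
    AVOID = "avoid"
    MITIGATE = "mitigate"
    TRANSFER = "transfer"
    ACCEPT = "accept"


@dataclass
class TreatedRisk:
    name: str
    treatment: Treatment
    reviewed_on: date
    reviewed_model_version: str


def needs_review(risk: TreatedRisk, today: date,
                 current_model_version: str, max_age_days: int = 90) -> bool:
    """Flag a risk when the model changed or the last review is too old."""
    if risk.reviewed_model_version != current_model_version:
        return True
    return today - risk.reviewed_on > timedelta(days=max_age_days)


# Sample data, invented for illustration only.
risks = [
    TreatedRisk("Prompt injection", Treatment.MITIGATE, date(2026, 1, 10), "v2"),
    TreatedRisk("Vendor data retention", Treatment.TRANSFER, date(2025, 9, 1), "v2"),
    TreatedRisk("Biased summaries", Treatment.MITIGATE, date(2026, 2, 1), "v1"),
]

today = date(2026, 3, 1)
due = [r.name for r in risks if needs_review(r, today, current_model_version="v2")]
print(due)  # ['Vendor data retention', 'Biased summaries']
```

Keying the review on the model version as well as the calendar captures the idea that a model update can introduce new risks even when the last review was recent.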

Privacy risks in AI systems are often harder to detect than technical failures. Data may be reused silently. Third-party models may process personal data without clear visibility. Sensitive information may enter prompts unintentionally.

So the question becomes clear: how does ISO 42001 address privacy risks in AI?

The answer lies in lifecycle governance.

ISO 42001 requires privacy considerations to be embedded into:

Organizations must control:

This structured approach ensures privacy is not an afterthought. It is built into decision-making from the start.

This is another clear example of how ISO 42001 addresses privacy risks in AI: by integrating privacy directly into operational controls.
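One operational control of this kind is screening prompts before they leave the organization, since sensitive information can enter prompts unintentionally. The sketch below is a simplified example, not a control taken from the standard: the two regular expressions and the `redact_prompt` function are hypothetical, and real deployments usually need broader detection than pattern matching.

```python
import re

# Deliberately simple patterns, assumed for illustration; they will miss
# many forms of personal data and are not a complete detector.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact_prompt(prompt: str) -> tuple[str, dict[str, int]]:
    """Replace matches with placeholders and count what was removed."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        prompt, n = pattern.subn(f"[{label}]", prompt)
        counts[label] = n
    return prompt, counts


clean, found = redact_prompt(
    "Email jane.doe@example.com or call +1 415 555 0100 about order 42."
)
print(clean)  # Email [EMAIL] or call [PHONE] about order 42.
print(found)  # {'EMAIL': 1, 'PHONE': 1}
```

Returning the counts alongside the cleaned text gives the organization a record of how often personal data reaches the prompt layer, which can feed back into the risk assessment.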

Privacy risks are assessed as part of broader AI risk and impact assessments.

ISO 42001 supports alignment with regulations such as:

Organizations must identify and mitigate risks like:

This helps answer both governance questions: how ISO 42001 addresses generative AI risks, and how it addresses privacy risks in AI.

It does so by linking technical controls with regulatory expectations.

Annex A strengthens privacy protection with practical controls such as:

These controls ensure privacy safeguards apply consistently across internal AI development and externally sourced models.

Annex A plays a central role in turning risk assessments into enforceable controls.

Some of the most relevant areas include:

These controls collectively show how ISO 42001 addresses generative AI risks in a structured and practical way.
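Turning risk assessments into enforceable controls implies that every assessed risk maps to at least one control that is actually in place. A minimal sketch of that coverage check follows; the control labels are hypothetical placeholders rather than real Annex A identifiers, and the `coverage_gaps` function is an assumption for illustration.

```python
# Hypothetical mapping from assessed risks to the controls meant to treat them.
# Labels are placeholders, not Annex A control identifiers.
risk_controls = {
    "Personal data in prompts": ["data-minimisation", "prompt-filtering"],
    "Unvetted third-party model": ["supplier-review"],
    "Fabricated output": [],
}

# Controls the organization has implemented so far (sample data).
implemented = {"data-minimisation", "supplier-review"}


def coverage_gaps(risk_controls: dict[str, list[str]],
                  implemented: set[str]) -> dict[str, str]:
    """Return risks with no mapped control or with unimplemented controls."""
    gaps: dict[str, str] = {}
    for risk, controls in risk_controls.items():
        if not controls:
            gaps[risk] = "no control mapped"
            continue
        missing = [c for c in controls if c not in implemented]
        if missing:
            gaps[risk] = "not implemented: " + ", ".join(missing)
    return gaps


gaps = coverage_gaps(risk_controls, implemented)
for risk, reason in gaps.items():
    print(f"{risk}: {reason}")
```

A report like this is the kind of evidence an internal audit might ask for: it shows which risks are still untreated, not just which controls exist.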

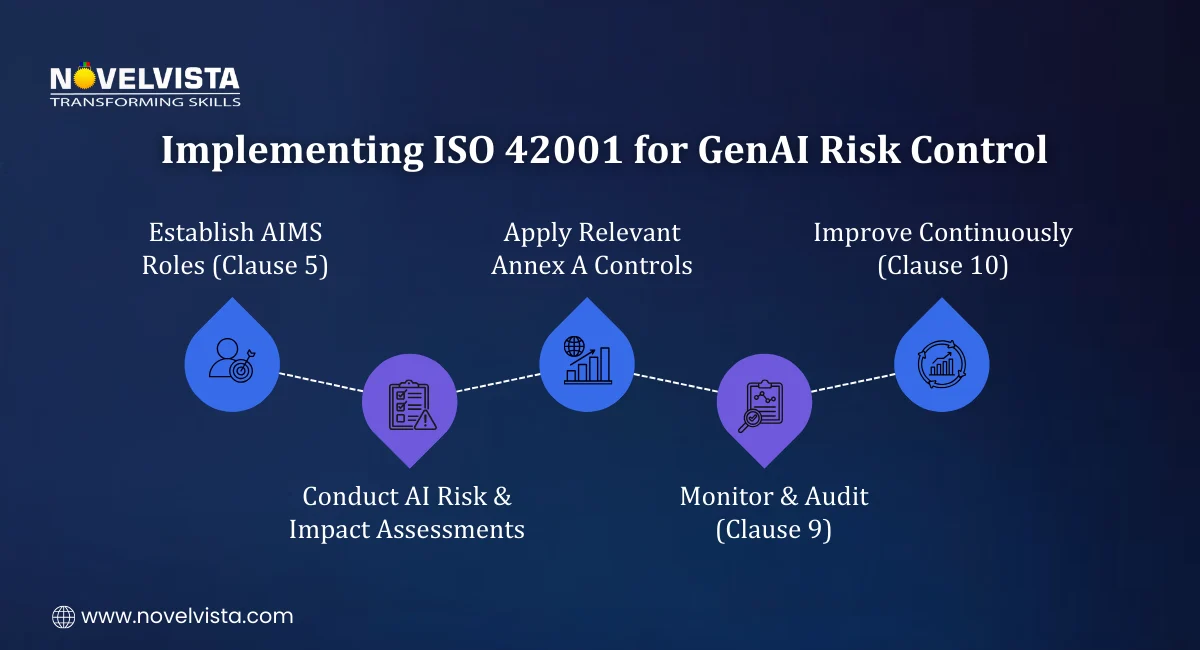

Successful implementation requires structure, not just policies.

A practical sequence looks like this:

Many organizations also align ISO 42001 with ISO 31000 or the NIST AI RMF for broader risk management consistency.

For a practical, step-by-step view of controls and compliance, read our guide ISO 42001 Checklist 2026: Key Controls, Implementation Steps & Compliance Requirements.

Organizations that adopt ISO 42001 experience measurable governance improvements.

Key benefits include:

Instead of reactive fixes, companies move toward proactive oversight.

That shift is the strongest illustration of how ISO 42001 addresses generative AI risks: it creates a structured system rather than isolated controls.

Generative AI and privacy risks cannot be managed through informal guidelines or scattered policies.

ISO 42001 provides a structured, auditable framework that governs AI responsibly from design to deployment and beyond. By addressing both generative AI risks and privacy risks in AI, it enables organizations to innovate with confidence while maintaining accountability, transparency, and trust.

Governance does not slow innovation; it makes it sustainable.

If you want to assess and lead AI governance initiatives with confidence, NovelVista’s ISO 42001 Lead Auditor Certification provides structured, practical training aligned with ISO/IEC 42001:2023. The course covers AI risk evaluation, Annex A controls, audit techniques, and governance best practices. It is designed for professionals who want to ensure responsible AI implementation while building strong compliance and assurance capabilities.
