Last Updated On 24/04/2026

Artificial Intelligence has rapidly evolved from a competitive advantage to a business necessity, influencing critical decisions across industries like healthcare, finance, and retail. According to McKinsey & Company’s 2025 State of AI survey, 88% of organizations now use AI in at least one business function, signaling a clear shift from experimentation to real-world operational deployment.

However, this widespread adoption tells only half the story. A 2025 CEO study by IBM reveals that only around 25% of AI initiatives have delivered their expected return on investment, often due to gaps in governance, risk management, and data quality. This growing disconnect between adoption and outcomes highlights a critical challenge: organizations are scaling AI faster than they are managing its risks.

As AI systems become more embedded in decision-making, the consequences of unmanaged risks, ranging from bias and lack of transparency to security and compliance failures, become significantly more serious. This makes structured risk assessment not just important but essential for building AI systems that are both innovative and trustworthy.

In this blog, we unpack how Clause 8.2 of ISO 42001 risk assessment helps organizations move from reactive risk handling to proactive, structured AI governance, making AI not just powerful but responsible.

At its core, Clause 8.2 of ISO 42001 risk assessment focuses on identifying and evaluating risks associated with AI systems.

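It requires organizations to:

- Perform AI risk assessments in accordance with the process defined in Clause 6.1.2
- Repeat those assessments at planned intervals, or when significant changes are proposed or occur
- Retain documented information on the results of all AI risk assessments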

This clause is a key part of the operational planning process within ISO 42001. It ensures that risk management is not an afterthought but an integral part of the AI lifecycle.

While Clause 6.1.2 defines the requirement for establishing an AI risk assessment process, Clause 8.2 is its operational execution: the "doing" phase, in which those assessments are actively applied across the lifecycles of live AI systems.

Unlike traditional IT risk frameworks, this clause emphasizes AI-specific risks, including ethical implications, data bias, and unintended consequences.

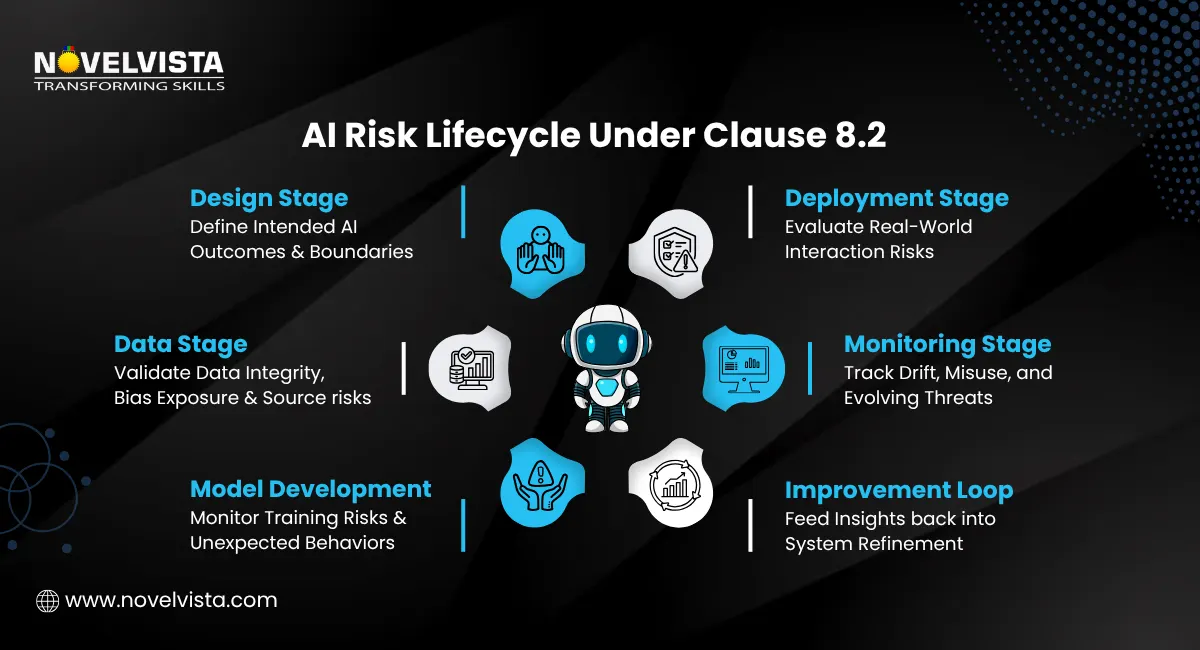

The first step in Clause 8.2 of ISO 42001 risk assessment is identifying risks across the AI system lifecycle.

This involves AI system risk identification, where organizations examine:

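Common risks include data bias, lack of transparency, security vulnerabilities, compliance failures, and unintended consequences.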

Effective AI system risk identification helps ensure that no critical risk goes unnoticed.
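One way to support complete risk identification is to tag every identified risk with the lifecycle stage it belongs to and flag stages that have nothing recorded against them. The Python sketch below assumes a hypothetical set of stage names and a hypothetical `IdentifiedRisk` record; ISO 42001 prescribes neither, and an empty stage is a prompt for review rather than proof of a gap.

```python
from dataclasses import dataclass

# Hypothetical lifecycle stages for illustration; ISO 42001 does not mandate these names.
LIFECYCLE_STAGES = (
    "data_collection",
    "model_development",
    "validation",
    "deployment",
    "operation",
)


@dataclass(frozen=True)
class IdentifiedRisk:
    """Hypothetical risk register entry tagged with a lifecycle stage."""
    risk_id: str
    stage: str
    description: str


def uncovered_stages(risks: list[IdentifiedRisk]) -> list[str]:
    """Return lifecycle stages with no identified risk recorded against them."""
    unknown = {r.stage for r in risks} - set(LIFECYCLE_STAGES)
    if unknown:
        raise ValueError(f"unknown lifecycle stage(s): {sorted(unknown)}")
    covered = {r.stage for r in risks}
    return [stage for stage in LIFECYCLE_STAGES if stage not in covered]


register = [
    IdentifiedRisk("R1", "data_collection", "Training data under-represents some user groups"),
    IdentifiedRisk("R2", "deployment", "Model outputs are acted on without human review"),
]
print(uncovered_stages(register))  # ['model_development', 'validation', 'operation']
```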

Once risks are identified, the next step is conducting a thorough AI risk assessment.

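This process typically includes:

- Estimating the likelihood of each risk occurring
- Assessing its potential impact
- Prioritizing risks based on the combination of likelihood and impact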

For example, a biased AI hiring tool may have a high impact and high likelihood, making it a top priority.

The goal of this stage in Clause 8.2 of ISO 42001 risk assessment is to create a clear risk profile that guides decision-making.
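The likelihood-and-impact evaluation described above, including the biased hiring tool example, can be sketched as a simple 5×5 risk matrix. This is a minimal Python illustration, not a method prescribed by ISO 42001: the 1 to 5 scales, the multiplication rule, the band thresholds, and the sample risks are all assumptions that an organization would replace with the risk criteria it defines under Clause 6.1.2.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssessedRisk:
    """A risk scored on assumed 1-5 ordinal scales."""
    name: str
    likelihood: int  # 1 = rare ... 5 = almost certain
    impact: int      # 1 = negligible ... 5 = severe

    def __post_init__(self) -> None:
        # Reject scores outside the assumed 1-5 scale.
        for label, value in (("likelihood", self.likelihood), ("impact", self.impact)):
            if not 1 <= value <= 5:
                raise ValueError(f"{label} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def rating(score: int) -> str:
    """Map a 1-25 score to a band using hypothetical thresholds."""
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"


def prioritize(risks: list[AssessedRisk]) -> list[AssessedRisk]:
    """Order risks from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


risks = [
    AssessedRisk("Chatbot gives outdated policy answers", likelihood=3, impact=2),
    AssessedRisk("Biased hiring model rejects qualified candidates", likelihood=4, impact=5),
    AssessedRisk("Recommendation latency spikes", likelihood=2, impact=1),
]
for risk in prioritize(risks):
    print(f"{risk.score:>2}  {rating(risk.score):<6}  {risk.name}")
```

Multiplying ordinal scores is a common convention rather than a requirement. Under these thresholds a rare but severe risk (1 × 5 = 5) would be rated "low", so some organizations prefer an explicit lookup matrix that escalates any severe-impact risk.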

Identifying and analyzing risks is only half the job. Clause 8.2 of ISO 42001 risk assessment feeds directly into risk treatment (defined in Clause 6.1.3 and carried out operationally under Clause 8.3), which is where real action happens. Once risks are evaluated, organizations must decide how to handle them in a structured and accountable way.

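There are four primary approaches to risk treatment:

- Avoid: eliminate the risk, for example by not deploying the AI system or removing the risky feature
- Mitigate: apply controls that reduce the likelihood or impact of the risk
- Transfer: share the risk with another party, for example through contracts or insurance
- Accept: retain the risk, with a documented rationale, when it falls within the organization's risk criteria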

A critical requirement here is documentation. Every risk treatment decision must be clearly recorded, justified, and traceable. This is not optional: it is a mandatory audit requirement under ISO 42001 and plays a key role in demonstrating compliance, accountability, and governance maturity.
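To make "recorded, justified, and traceable" concrete, here is a minimal Python sketch of a treatment decision record that refuses to serialize unless it has a justification and an owner. It assumes the four commonly used treatment options (avoid, mitigate, transfer, accept); the `TreatmentDecision` fields, the JSON format, and the rule that a mitigation must name its controls are hypothetical choices, not fields mandated by ISO 42001.

```python
import json
from dataclasses import asdict, dataclass, field
from enum import Enum


class Treatment(Enum):
    """Four commonly used risk treatment options (assumed labels)."""
    AVOID = "avoid"
    MITIGATE = "mitigate"
    TRANSFER = "transfer"
    ACCEPT = "accept"


@dataclass
class TreatmentDecision:
    """Hypothetical audit record for one risk treatment decision."""
    risk_id: str
    option: Treatment
    justification: str
    owner: str
    decided_on: str  # ISO 8601 date, e.g. "2026-04-24"
    controls: list[str] = field(default_factory=list)

    def validate(self) -> None:
        # Every decision must be justified and owned so that it is traceable.
        if not self.justification.strip():
            raise ValueError(f"{self.risk_id}: missing justification")
        if not self.owner.strip():
            raise ValueError(f"{self.risk_id}: missing accountable owner")
        # A mitigation decision should name the controls it relies on.
        if self.option is Treatment.MITIGATE and not self.controls:
            raise ValueError(f"{self.risk_id}: mitigation lists no controls")


def to_audit_record(decision: TreatmentDecision) -> str:
    """Validate a decision and serialize it as one JSON line for the risk register."""
    decision.validate()
    record = asdict(decision)
    record["option"] = decision.option.value  # store the plain string label
    return json.dumps(record, sort_keys=True)


decision = TreatmentDecision(
    risk_id="R1",
    option=Treatment.MITIGATE,
    justification="Bias in shortlisting exceeds the agreed fairness threshold",
    owner="Head of Talent Acquisition",
    decided_on="2026-04-24",
    controls=["Rebalance training data", "Human review of all rejections"],
)
print(to_audit_record(decision))
```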

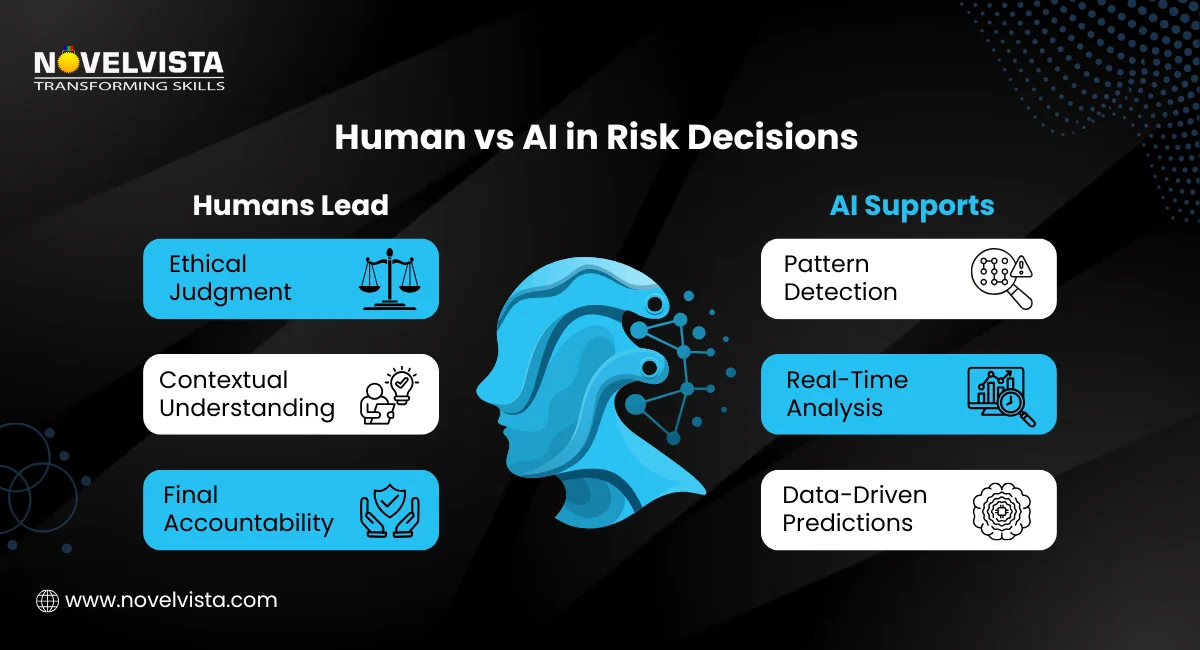

A closely related requirement is the AI system impact assessment (defined in Clause 6.1.4 and performed operationally under Clause 8.4), which goes beyond technical risks.

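It considers the ethical, legal, and social implications of an AI system, including its potential consequences for individuals, groups of individuals, and society.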

For instance, an AI system used in healthcare must be evaluated not just for accuracy but also for fairness and patient safety. In one healthcare AI project, applying Clause 8.2-style risk assessment early helped us flag data-representativeness issues before deployment, saving 3–4 months of rework.

By incorporating AI impact assessment, organizations can align their AI systems with broader societal expectations.
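A data-representativeness check like the one in the healthcare example can be approximated by comparing each group's share of the training data with its share of the population the system will serve. This is a minimal Python sketch, not the method used in that project: the age bands, the reference shares, and the 0.8 cut-off are illustrative assumptions.

```python
from collections import Counter


def representation_gaps(
    training_groups: list[str],
    reference_shares: dict[str, float],
    min_ratio: float = 0.8,
) -> dict[str, float]:
    """Flag groups whose share of the training data is below min_ratio
    times their share of the reference population.

    Returns {group: ratio}, where ratio = training share / reference share.
    """
    counts = Counter(training_groups)
    total = sum(counts.values())
    gaps = {}
    for group, ref_share in reference_shares.items():
        if ref_share <= 0:
            continue  # nothing meaningful to compare against
        train_share = counts[group] / total if total else 0.0
        ratio = train_share / ref_share
        if ratio < min_ratio:
            gaps[group] = round(ratio, 2)
    return gaps


# Hypothetical patient age bands: training records vs. the population served.
training = ["18-39"] * 600 + ["40-64"] * 350 + ["65+"] * 50
population = {"18-39": 0.45, "40-64": 0.35, "65+": 0.20}
print(representation_gaps(training, population))  # {'65+': 0.25}
```

Here patients aged 65+ make up 20% of the population served but only 5% of the training records, so the group is flagged with a ratio of 0.25. A flag like this is a trigger for investigation, not a verdict on fairness.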

The final component is artificial intelligence risk analysis, which involves deeper evaluation and continuous monitoring.

This includes:

Unlike static software, AI systems change over time: models are retrained and updated, and the data they encounter in production drifts. This makes artificial intelligence risk analysis an ongoing process rather than a one-time activity.
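Continuous monitoring often starts with input drift. The Python sketch below computes the population stability index (PSI), one common drift statistic among many; ISO 42001 does not name it. The bin proportions are hypothetical, and the 0.1 and 0.25 bands follow a widely used industry rule of thumb that should be tuned per model.

```python
import math


def population_stability_index(
    expected: list[float], actual: list[float], eps: float = 1e-6
) -> float:
    """PSI between two binned distributions, each given as per-bin proportions."""
    if len(expected) != len(actual):
        raise ValueError("distributions must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        # Clamp empty bins so the logarithm stays defined.
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def drift_level(psi: float) -> str:
    """Bands from a common rule of thumb; tune them per model."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "moderate"
    return "significant"


baseline = [0.25, 0.25, 0.25, 0.25]  # feature distribution at the last assessment
current = [0.10, 0.20, 0.30, 0.40]   # same feature observed in production
psi = population_stability_index(baseline, current)
print(f"PSI = {psi:.3f} ({drift_level(psi)})")  # PSI = 0.228 (moderate)
```

A "moderate" or "significant" result would feed back into the risk assessment rather than automatically triggering retraining.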

Implementing Clause 8.2 of ISO 42001 risk assessment offers several tangible benefits:

- Organizations gain a clear understanding of their AI risks, enabling better strategic decisions.
- As AI regulation expands worldwide, structured AI risk assessment helps organizations demonstrate compliance.
- Customers and stakeholders are more likely to trust AI systems that are transparent and well-governed.
- Identifying and evaluating risks early reduces the likelihood of costly failures.

In short, it is not just about compliance; it’s about building responsible AI systems. To build a strong foundation for AI governance, organizations should align Clause 5.2 of ISO/IEC 42001 (the AI policy) with Clause 8.2 of ISO 42001 risk assessment, so that leadership commitment and policy direction actively support structured AI risk management practices.

Implementing Clause 8.2 of ISO 42001 risk assessment does not have to be overwhelming. Here’s a step-by-step approach:

Pro-Tip: Don’t treat AI risk assessment as a one-time checklist activity. The most effective organizations embed it into their CI/CD (continuous integration and continuous delivery) pipelines, so that risks are reassessed every time models are updated, retrained, or deployed in new environments.

1. Identify which AI systems and processes fall under the assessment.
2. List all potential risks across the lifecycle.
3. Evaluate likelihood and impact using a structured framework.
4. Analyze ethical, legal, and social implications.
5. Use data and monitoring tools to validate and refine assessments.
6. Maintain records and update assessments regularly.
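The Pro-Tip's reassessment triggers, together with Clause 8.2's expectation that assessments are repeated at planned intervals or when significant changes occur, could be expressed as a check that runs in a CI/CD pipeline. This Python sketch is illustrative only: the `AssessmentRecord` fields, version strings, environment names, and the 180-day interval are hypothetical, and each organization sets its own interval and change criteria.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class AssessmentRecord:
    """Hypothetical record of the last completed AI risk assessment for one model."""
    model_version: str
    assessed_on: date
    environments: frozenset[str] = frozenset()


def reassessment_reasons(
    last: AssessmentRecord,
    model_version: str,
    environment: str,
    today: date,
    max_age_days: int = 180,
) -> list[str]:
    """Return why a fresh risk assessment is needed; an empty list means none."""
    reasons = []
    if model_version != last.model_version:
        reasons.append(f"model changed: {last.model_version} -> {model_version}")
    if environment not in last.environments:
        reasons.append(f"new deployment environment: {environment}")
    if today - last.assessed_on > timedelta(days=max_age_days):
        reasons.append(f"planned interval of {max_age_days} days exceeded")
    return reasons


last = AssessmentRecord("2.3.0", date(2026, 1, 10), frozenset({"eu-prod"}))
# A pipeline step could block deployment whenever this list is non-empty.
print(reassessment_reasons(last, "2.4.0", "eu-prod", today=date(2026, 4, 24)))
# ['model changed: 2.3.0 -> 2.4.0']
```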

While Clause 8.2 of ISO 42001 risk assessment provides a clear framework, organizations often face challenges:

- AI risk management requires specialized knowledge. Solution: Invest in training and certifications.
- AI systems evolve, making risk assessment complex. Solution: Implement continuous monitoring.
- Poor data leads to inaccurate risk evaluation. Solution: Establish strong data governance practices.
- Over-regulation can slow down innovation. Solution: Adopt a risk-based approach rather than rigid controls.

To successfully apply concepts like Clause 8.2 in real-world scenarios, professionals can benefit from an ISO 42001 Exam Strategy Guide that helps them understand AI risk assessment frameworks and confidently approach certification requirements. Having worked with organizations preparing for ISO 42001 audits, we’ve seen how Clause 8.2‑aligned risk assessments dramatically reduce last‑minute findings in AI governance interviews.

As AI continues to redefine how organizations operate and compete, the need for structured and accountable risk management has never been more critical. Clause 8.2 of ISO 42001 risk assessment empowers organizations with a clear, systematic framework to identify, evaluate, and manage AI-related risks before they escalate into real-world consequences.

By embedding AI risk assessment, AI impact assessment, and artificial intelligence risk analysis into the core of AI initiatives, businesses move beyond experimentation to responsible innovation. This not only reduces exposure to ethical, operational, and regulatory risks but also strengthens transparency, stakeholder confidence, and long-term resilience.

In an era where AI-driven decisions directly influence people, processes, and outcomes, relying on ad-hoc risk practices is no longer sustainable. Adopting Clause 8.2 of ISO 42001 risk assessment is not just about compliance; it’s about building AI systems that are reliable, accountable, and future-ready. Organizations that embrace this approach today will lead with trust, not just technology, in the AI-driven world of tomorrow.

Ready to take your AI governance and risk management expertise to the next level?

Join NovelVista’s ISO/IEC 42001 Lead Auditor Certification Training and gain hands-on experience in Clause 8.2 of ISO 42001 risk assessment, along with practical skills in AI risk assessment, audit practices, and compliance frameworks. Designed for AI professionals, risk managers, and governance leaders, this course equips you to confidently lead AI audits and implement responsible AI systems in real-world scenarios.

Start your ISO 42001 auditor journey today!
