Category | Quality Management

Last Updated On 26/02/2026

Artificial Intelligence is no longer confined to innovation labs or pilot projects; it is powering boardroom decisions, customer interactions, fraud detection systems, and even autonomous operations. Recent global studies show that more than 70% of enterprises are investing heavily in AI, and nearly 40% are already relying on AI for mission-critical functions. In short, AI is no longer an experiment; it is infrastructure.

But here’s the uncomfortable reality.

As AI adoption accelerates, so do the risks. AI models are being targeted by sophisticated cyberattacks. Biased algorithms are triggering public backlash and regulatory scrutiny. Governments across the world are introducing stricter AI compliance frameworks. A single flawed AI decision can now lead to financial loss, legal exposure, or irreversible reputational damage.

So the real question is no longer “Should we adopt AI?”

It is:

Is your AI system secure against evolving threats?

Is it governed responsibly and ethically?

Can you prove compliance when regulators come knocking?

In this high-stakes environment, organizations are asking a far more strategic question, one that goes beyond traditional cybersecurity:

How do ISO 42001 auditors differ on secure AI?

Understanding this difference may determine whether your AI systems become a competitive advantage or your next compliance crisis.

Is AI auditing just another IT audit? Is it only about cybersecurity? Or is it really about governance, accountability, and risk management at a much deeper level? These are the questions many organizations are asking as AI becomes central to business operations.

This blog is designed for AI leaders and CTOs driving digital transformation, compliance officers responsible for regulatory alignment, risk managers overseeing enterprise exposure, ISO professionals expanding into AI governance auditing, and organizations implementing structured AI governance frameworks.

If your organization is adopting AI or planning to align with ISO standards, understanding how ISO 42001 auditors differ on secure AI is critical for ensuring long-term sustainability, regulatory compliance, and responsible innovation. Let’s break it down step by step.

Before exploring how ISO 42001 auditors differ on secure AI, we need to understand what ISO/IEC 42001 actually represents.

ISO/IEC 42001 is the first international standard designed specifically for Artificial Intelligence Management Systems (AIMS). Unlike traditional ISO standards that focus on quality (ISO 9001) or information security (ISO 27001), ISO 42001 is centered on:

AI governance

Ethical AI development

Risk-based AI oversight

Accountability across the AI lifecycle

It introduces structured AI governance auditing mechanisms to ensure AI systems are trustworthy, secure, transparent, and aligned with regulatory expectations.

Secure AI under ISO 42001 does not only mean cybersecurity. It includes:

Protection against adversarial attacks

Data integrity

Bias detection and mitigation

Explainability

Continuous monitoring

This broader perspective is exactly why many organizations ask how ISO 42001 auditors differ on secure AI.

The first major distinction in how ISO 42001 auditors approach secure AI is governance orientation.

Traditional security auditors typically focus on:

Firewalls

Access control

Encryption

Infrastructure vulnerabilities

ISO 42001 auditors, however, prioritize AI governance auditing:

Who is accountable for AI decisions?

Is there documented AI risk ownership?

Are ethical guidelines embedded in AI policies?

Is AI oversight independent and transparent?

The focus shifts from “Is the system secure?” to “Is the AI system responsibly governed and controlled?”

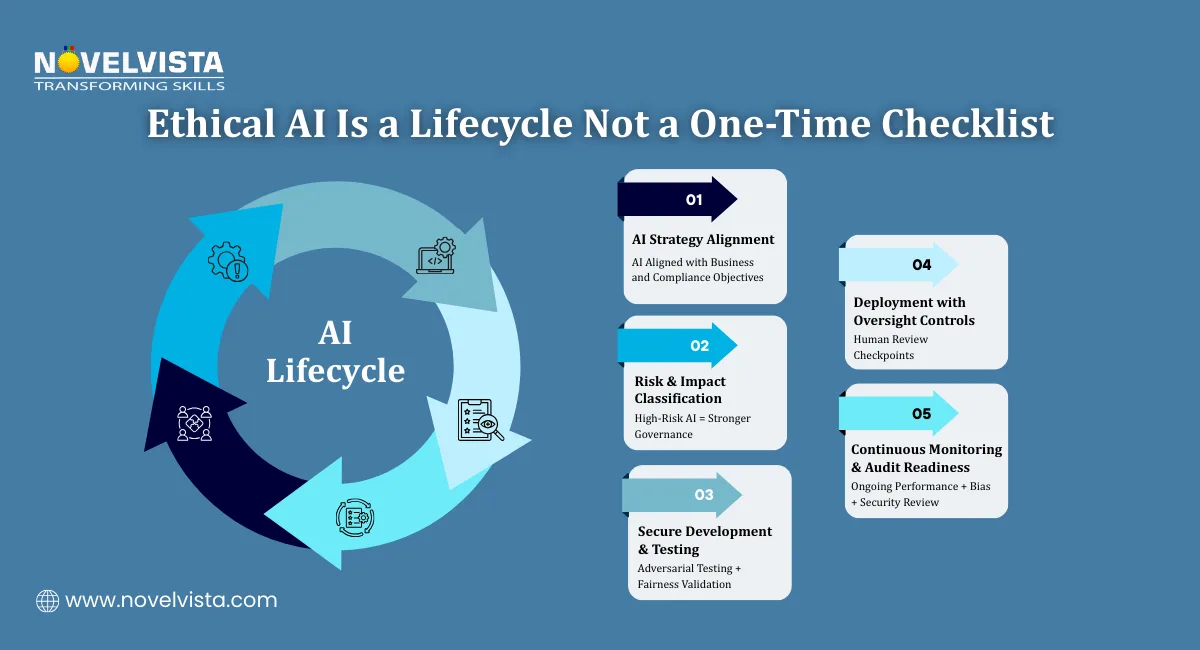

Another key difference in how ISO 42001 auditors approach secure AI lies in lifecycle assessment.

ISO 42001 auditors evaluate AI systems across:

Design

Development

Testing

Deployment

Monitoring

Retirement

This lifecycle-based auditing ensures:

Secure model training environments

Data privacy compliance

Ongoing performance validation

Continuous risk reassessment

This structured lifecycle review forms the backbone of Responsible AI auditing practices.
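To make the lifecycle idea concrete, here is a minimal sketch of how an audit team might check that every stage listed above has at least one piece of recorded evidence. The `Stage` enum, the `missing_evidence` helper, and the sample evidence entries are hypothetical illustrations, not something defined by ISO/IEC 42001.

```python
from enum import Enum


class Stage(Enum):
    """The six lifecycle stages named in this article."""
    DESIGN = "design"
    DEVELOPMENT = "development"
    TESTING = "testing"
    DEPLOYMENT = "deployment"
    MONITORING = "monitoring"
    RETIREMENT = "retirement"


def missing_evidence(evidence: dict[Stage, list[str]]) -> list[Stage]:
    """Return lifecycle stages that have no recorded audit evidence."""
    return [stage for stage in Stage if not evidence.get(stage)]


# Hypothetical evidence log for a single AI system.
evidence = {
    Stage.DESIGN: ["impact assessment v2"],
    Stage.DEVELOPMENT: ["training environment access review"],
    Stage.TESTING: ["bias test report"],
    Stage.DEPLOYMENT: [],  # recorded as a stage, but nothing attached
    Stage.MONITORING: ["monthly performance validation"],
    # RETIREMENT is absent entirely
}

gaps = missing_evidence(evidence)
print([stage.value for stage in gaps])
```

A stage with an empty list and a stage that is missing altogether are both treated as gaps, which mirrors the idea that every stage needs evidence rather than just a heading in a document.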

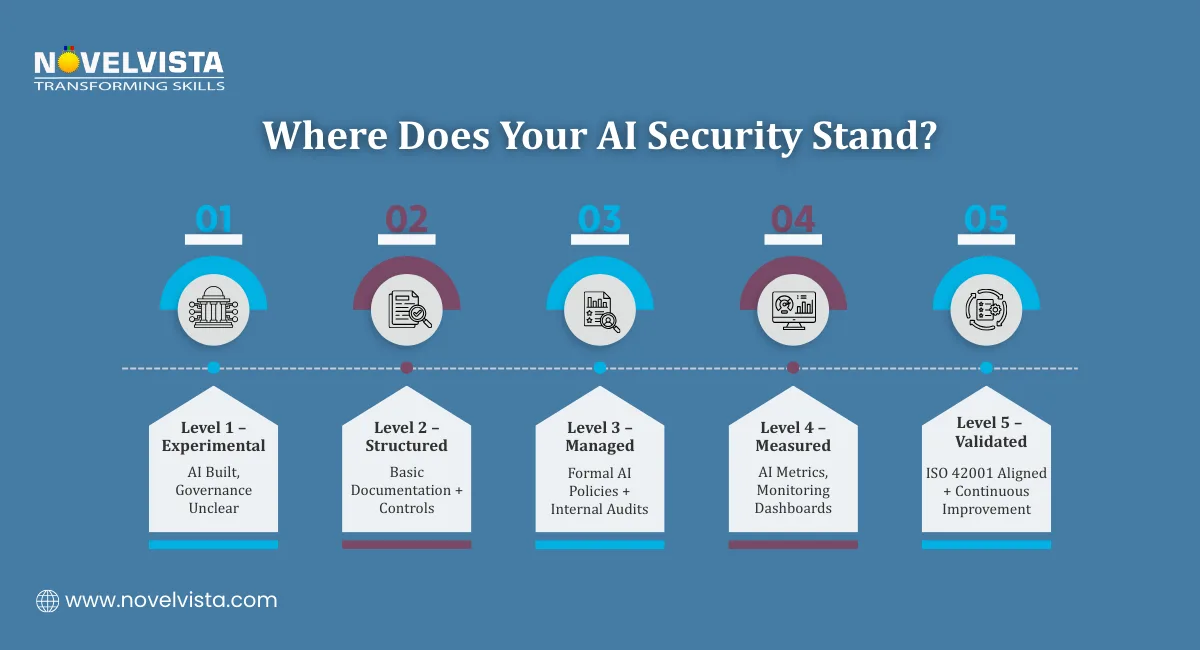

Unlike traditional audits that check compliance against predefined controls, ISO 42001 auditors conduct an AI security maturity assessment.

This includes evaluating:

AI risk identification processes

Threat modeling capabilities

Bias detection mechanisms

Incident response readiness

Organizational AI risk culture

Instead of simply marking “pass” or “fail,” auditors assess how mature the AI governance structure is.
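As a rough illustration of maturity-based rather than pass/fail thinking, the sketch below scores the five areas listed above on a 1 to 5 scale and reports the average and the weakest area. The scale, the level names, and the sample scores are assumptions made for this example; ISO/IEC 42001 does not prescribe a particular scoring model.

```python
from statistics import mean

# Hypothetical 1-5 maturity scale (an assumption, not part of the standard).
LEVELS = {1: "initial", 2: "managed", 3: "defined", 4: "measured", 5: "optimizing"}


def maturity_summary(scores: dict[str, int]) -> dict:
    """Summarize per-area maturity scores instead of a single pass/fail."""
    for area, score in scores.items():
        if score not in LEVELS:
            raise ValueError(f"{area}: score must be 1-5, got {score}")
    weakest = min(scores, key=scores.get)
    return {
        "average": round(mean(list(scores.values())), 2),
        "weakest_area": weakest,
        "weakest_level": LEVELS[scores[weakest]],
    }


# Illustrative scores for the five areas listed above.
scores = {
    "risk_identification": 4,
    "threat_modeling": 3,
    "bias_detection": 2,
    "incident_response": 3,
    "risk_culture": 3,
}

summary = maturity_summary(scores)
print(summary)
```

Reporting the weakest area alongside the average keeps one strong score from hiding a gap elsewhere.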

This is a critical distinction in understanding how ISO 42001 auditors differ on secure AI.

ISO 42001 embeds ethics into audit evaluation.

Auditors examine:

Fairness and bias mitigation

Explainability of AI outputs

Transparency in decision-making

Human oversight mechanisms

This is where Responsible AI auditing practices become central.

Secure AI is not just protected AI; it is ethical, accountable, and explainable AI.
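One common way to put a number on fairness is the demographic parity gap: the difference between the highest and lowest approval rates across groups. The sketch below is a minimal example of that metric, assuming decisions arrive as `(group, approved)` pairs. The function names and sample data are hypothetical, and this is only one of several fairness measures an organization might present as evidence.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def demographic_parity_gap(decisions):
    """Difference between the highest and lowest group approval rates."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Illustrative data: group A approved 8 of 10 times, group B approved 5 of 10.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

rates = selection_rates(decisions)
gap = demographic_parity_gap(decisions)
print(rates, round(gap, 2))
```

What counts as an acceptable gap is a policy decision for the organization; the code only makes the number visible so that it can be reviewed.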

Let’s simplify the comparison:

| Traditional IT Audit | ISO 42001 AI Audit |
| --- | --- |
| Focus on infrastructure security | Focus on AI governance auditing |
| Checks technical controls | Evaluates risk and lifecycle |
| Compliance-based | Risk-based and maturity-based |
| Static periodic review | Continuous AI monitoring |
| IT-centric | Cross-functional governance |

This table clearly highlights how ISO 42001 auditors differ on secure AI.

The shift is from technical protection to holistic AI governance assurance. The ISO 42001 Exam Strategy Guide helps professionals structure their preparation effectively, focus on high-weightage topics, and approach the certification exam with greater confidence and clarity.

To further understand how ISO 42001 auditors differ on secure AI, let’s explore what they practically assess.

Auditors review:

AI management system documentation

Risk registers

AI ethics policies

Governance committee structures

Strong governance equals strong AI security.
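A risk register is easier to audit when it is structured data rather than free text. The sketch below shows one possible shape for a register entry and a simple check for two issues: risks with no accountable owner and risks above a score threshold. The `AIRisk` fields, the likelihood-times-impact scoring, and the threshold of 12 are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIRisk:
    """Hypothetical register entry; field names are illustrative."""
    risk_id: str
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (minor) to 5 (severe)
    owner: Optional[str] = None

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def audit_findings(register, threshold=12):
    """Flag risks without an owner and risks at or above the score threshold."""
    findings = []
    for risk in register:
        if risk.owner is None:
            findings.append(f"{risk.risk_id}: no accountable owner")
        if risk.score >= threshold:
            findings.append(f"{risk.risk_id}: high risk (score {risk.score})")
    return findings


register = [
    AIRisk("R1", "Model drift in fraud scoring", 4, 4, owner="Head of ML"),
    AIRisk("R2", "Bias in loan approvals", 3, 3),
    AIRisk("R3", "Unauthorized model access", 2, 5, owner="CISO"),
]

findings = audit_findings(register)
for finding in findings:
    print(finding)
```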

AI systems are only as reliable as the data they are trained on.

ISO 42001 auditors assess:

Data lineage tracking

Bias testing processes

Data protection compliance

Consent management

This ensures both fairness and security.
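Data lineage tracking can start very simply: fingerprint each dataset version and record which version it was derived from. The sketch below uses a SHA-256 hash over a canonical JSON encoding. The record fields, dataset names, and source labels are hypothetical, and a production setup would more likely rely on a dedicated lineage or data catalog tool.

```python
import hashlib
import json


def fingerprint(rows):
    """SHA-256 over a canonical JSON encoding of the rows."""
    payload = json.dumps(rows, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def lineage_record(name, rows, source, parent=None):
    """Describe one dataset version and the version it was derived from."""
    return {
        "dataset": name,
        "source": source,
        "parent": parent,  # fingerprint of the upstream dataset, if any
        "rows": len(rows),
        "sha256": fingerprint(rows),
    }


# Illustrative data: a raw extract and a cleaned copy derived from it.
raw = [{"id": 1, "income": 52000}, {"id": 2, "income": 61000}]
cleaned = [dict(row, income=float(row["income"])) for row in raw]

raw_rec = lineage_record("applicants_raw", raw, source="crm_export")
clean_rec = lineage_record(
    "applicants_clean", cleaned, source="cleaning_job", parent=raw_rec["sha256"]
)
print(clean_rec["parent"] == raw_rec["sha256"])
```

Because the hash changes whenever the data changes, an auditor can confirm that the dataset used for training is the same one that passed bias testing and consent checks.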

Secure AI includes protection from:

Model poisoning

Adversarial attacks

Data manipulation

Unauthorized model access

Through AI security maturity assessment, auditors verify whether monitoring mechanisms are proactive rather than reactive.
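Proactive monitoring usually means comparing live behaviour against a baseline before something goes visibly wrong. One widely used measure is the Population Stability Index (PSI), sketched below in plain Python for model scores between 0 and 1. The bin edges, the sample data, and the 0.25 alert threshold (a common rule of thumb, not a requirement of the standard) are assumptions for this example.

```python
import math


def psi(expected, actual, edges):
    """Population Stability Index between two samples over shared bin edges."""

    def shares(values):
        counts = [0] * (len(edges) - 1)
        for value in values:
            for i in range(len(counts)):
                is_last = i == len(counts) - 1
                in_bin = edges[i] <= value < edges[i + 1]
                # The final bin also includes its upper edge.
                if in_bin or (is_last and value == edges[-1]):
                    counts[i] += 1
                    break
        # A small floor avoids log(0) when a bin is empty.
        return [max(count / len(values), 1e-6) for count in counts]

    exp_shares, act_shares = shares(expected), shares(actual)
    return sum(
        (a - e) * math.log(a / e) for e, a in zip(exp_shares, act_shares)
    )


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline = [0.1, 0.3, 0.6, 0.9] * 25   # scores spread evenly at training time
live = [0.6] * 50 + [0.9] * 50         # scores now concentrated in the upper bins

ALERT_THRESHOLD = 0.25  # rule-of-thumb value, assumed for this sketch
drift = psi(baseline, live, edges)
print("drift alert" if drift > ALERT_THRESHOLD else "stable")
```

A sudden shift like this does not say why the distribution moved. It could be natural drift, data manipulation, or a poisoning attempt, but it gives the monitoring team a trigger to investigate.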

ISO 42001 auditors evaluate:

AI-specific incident response plans

Root cause analysis processes

Post-incident learning

Continuous AI risk reassessment

This dynamic oversight is another way ISO 42001 auditors differ on secure AI.

It is never a one-time checklist. It is continuous governance. Practicing with ISO 42001 Exam Questions enables candidates to understand the exam pattern, identify key focus areas, and strengthen their readiness for the certification assessment.

To conduct effective AI governance auditing, auditors require a hybrid skill set:

AI lifecycle understanding

Risk management expertise

Cybersecurity awareness

Regulatory knowledge

Ethical AI evaluation skills

They must understand not just ISO frameworks but also machine learning fundamentals, AI risks, and compliance landscapes.

This multidisciplinary capability is another key reason ISO 42001 auditors differ on secure AI.

Organizations adopting AI often assume that existing ISO 27001 or traditional cybersecurity audits are enough. However, AI introduces new risk categories such as algorithmic bias, autonomous decision-making errors, model drift, regulatory non-compliance, and ethical accountability gaps. Without structured AI governance auditing, these risks can remain hidden until they cause serious impact. Understanding how ISO 42001 auditors differ on secure AI helps businesses prepare better documentation, strengthen AI security maturity, align with global compliance expectations, and build stakeholder trust. Today, secure AI is not just about compliance; it is a true competitive advantage.

So, how do ISO 42001 auditors differ on secure AI? The difference lies in their scope, depth, and overall philosophy. Unlike traditional audits that concentrate primarily on technical safeguards, ISO 42001 auditors move far beyond basic security checks. Their focus extends to AI governance auditing, risk-based lifecycle evaluation, Responsible AI auditing practices, and a comprehensive AI security maturity assessment that measures how well an organization manages AI risks over time. Secure AI under ISO 42001 is not simply about protection; it is about accountability, transparency, ethical oversight, and continuous improvement embedded across the entire AI lifecycle. As AI becomes deeply integrated into core business decisions, organizations that embrace ISO 42001 auditing principles position themselves as leaders in trust, compliance, and innovation. The future of AI is not just intelligent; it must be secure, governed, and responsible by design.

Join NovelVista’s ISO/IEC 42001 Lead Auditor Certification Training and gain practical AI governance auditing skills, real-world Artificial Intelligence Management System (AIMS) insights, and globally recognized credentials. Designed for AI leaders, compliance professionals, risk managers, and ISO practitioners, this course empowers you to confidently conduct audits, perform AI security maturity assessment, and implement Responsible AI auditing practices aligned with global standards.

Start your ISO 42001 Lead Auditor journey today!
