Category | Quality Management

Last Updated On 13/05/2026

By 2030, artificial intelligence is projected to contribute up to $15.7 trillion to the global economy. Yet despite this extraordinary growth, a striking gap remains: fewer than 30% of organizations deploying AI have any formal governance framework in place. That means the majority of businesses are operating powerful decision-making systems without structured accountability. What happens when an AI model produces a biased hiring recommendation? Or when an autonomous system makes a financially consequential error with no audit trail? These are not hypothetical scenarios. They are documented, real-world failures happening right now, across industries.

So here is the real question: How do organizations harness AI's potential while managing its risks in a structured, verifiable way?

The answer, increasingly, lies in ISO 42001 AI operational management. Published in 2023, ISO/IEC 42001 is the world's first internationally recognized, certifiable standard for an Artificial Intelligence Management System (AIMS). And at the heart of this standard sits Clause 8, which governs how AI systems must actually be operated, controlled, and managed day to day. Specifically, Clause 8.4 addresses the practical lifecycle of AI systems, from development through deployment to decommissioning.

This blog explains how ISO 42001 AI operational management helps organizations control, monitor, and govern AI systems responsibly. We explore Clause 8.4, AI system operational control, AI risk management, and lifecycle governance. You will also learn the key benefits and implementation steps of ISO/IEC 42001.

| Topic | Key Focus |
| --- | --- |
| Clause 8.4 | AI operational control requirements |
| AI Risk Management | Identifying and reducing AI risks |
| Lifecycle Governance | Managing AI from development to retirement |
| ISO 42001 Benefits | Compliance, trust, and responsible AI |
| Implementation | Steps to build an AI management system |

Clause 8.4 sits within the broader Clause 8 (Operation) of ISO/IEC 42001:2023 and is the operational core of the entire standard. While earlier clauses deal with planning, context-setting, and policy design, Clause 8.4 is where governance becomes actionable. It defines the specific requirements organizations must meet to control, monitor, and manage AI systems throughout their active operational life.

In plain terms, Clause 8.4 answers a critical question that most AI governance discussions overlook: once an AI system is built and deployed, how do you ensure it continues to behave as intended, remains safe, and stays aligned with organizational and ethical objectives over time?

Maintaining governance from conception through decommissioning

Crucially, Clause 8.4 requires documented evidence of all controls. Organizations cannot simply assert that their AI systems are well-managed. They must produce audit-ready records showing how controls were applied, how anomalies were detected and addressed, and how the system evolved over time. This is what makes ISO 42001 AI operational management certifiable, not just aspirational.
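ISO/IEC 42001 specifies what must be evidenced, not which tooling to use. As one illustration of "audit-ready records", here is a minimal sketch of an append-only log in which each entry carries the hash of the previous one, so a record edited after the fact can be detected. The `AuditLog` class, the event names, and the `credit-scoring-v3` system ID are hypothetical; hash chaining is simply one common tamper-evidence technique.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(content: dict) -> str:
    # Hash a canonical JSON form (sorted keys) so the result is reproducible.
    return hashlib.sha256(json.dumps(content, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log; each entry stores the previous entry's hash."""
    entries: list = field(default_factory=list)

    def record(self, system_id: str, event: str, detail: str) -> dict:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "system_id": system_id,
            "event": event,  # e.g. "control_applied", "anomaly_detected"
            "detail": detail,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        body["hash"] = _digest(body)
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            content = {k: v for k, v in entry.items() if k != "hash"}
            if content["prev_hash"] != prev_hash or entry["hash"] != _digest(content):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("credit-scoring-v3", "control_applied", "Monthly fairness review completed")
log.record("credit-scoring-v3", "anomaly_detected", "Approval rate fell below threshold")
print(log.verify())  # True
log.entries[0]["detail"] = "edited after the fact"
print(log.verify())  # False
```

In a real deployment the log would live in write-once storage; the chaining only makes tampering visible, it does not prevent it.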

Within the standard, Clause 8 (Operation) is where governance principles are applied in practice. Here is how its key components break down:

| Component | Description | Purpose |
| --- | --- | --- |
| Operational Planning and Control | Establishing criteria for AI processes and controls | Aligns AI development with organizational goals |
| AI Risk Assessment | Identifying potential negative impacts (bias, security, ethics) | Prevents harm before deployment |
| AI Risk Treatment | Applying measures to mitigate identified risks | Reduces probability and severity of failures |
| AI System Impact Assessment | Evaluating broader effects on individuals and society | Ensures ethical and social responsibility |
| Lifecycle Management | Monitoring AI from conception to decommissioning | Maintains consistent oversight at every stage |

Each of these components feeds into the others. Risk assessment informs risk treatment. Impact assessment shapes operational controls. Lifecycle management ensures nothing falls through the cracks over time.

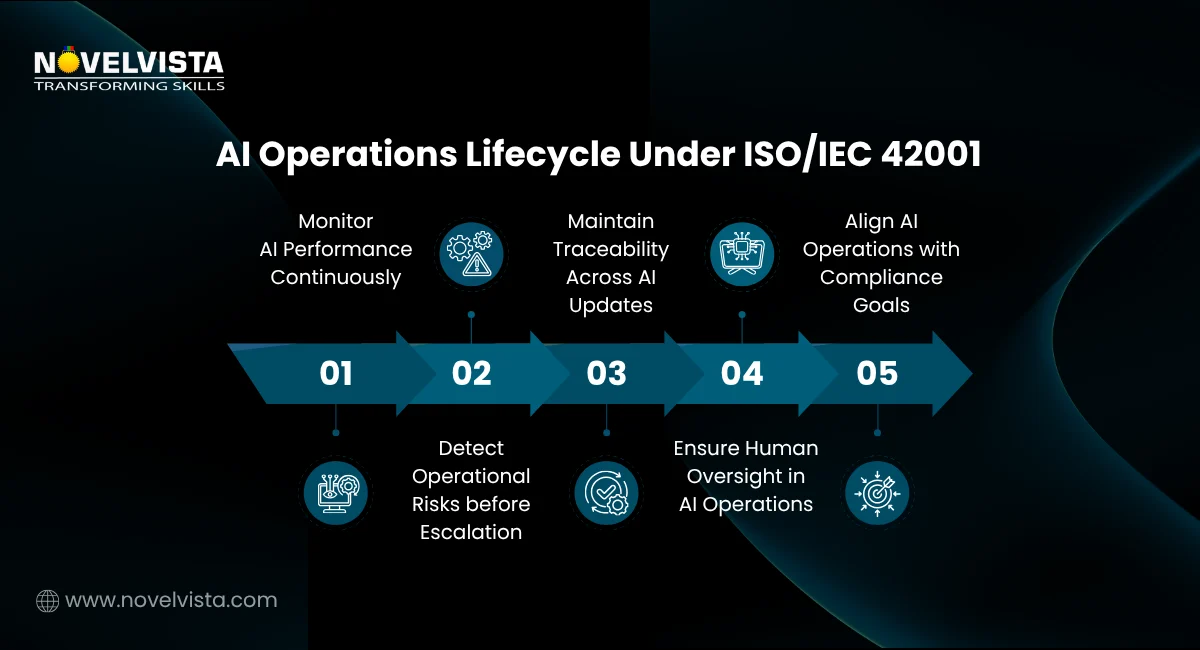

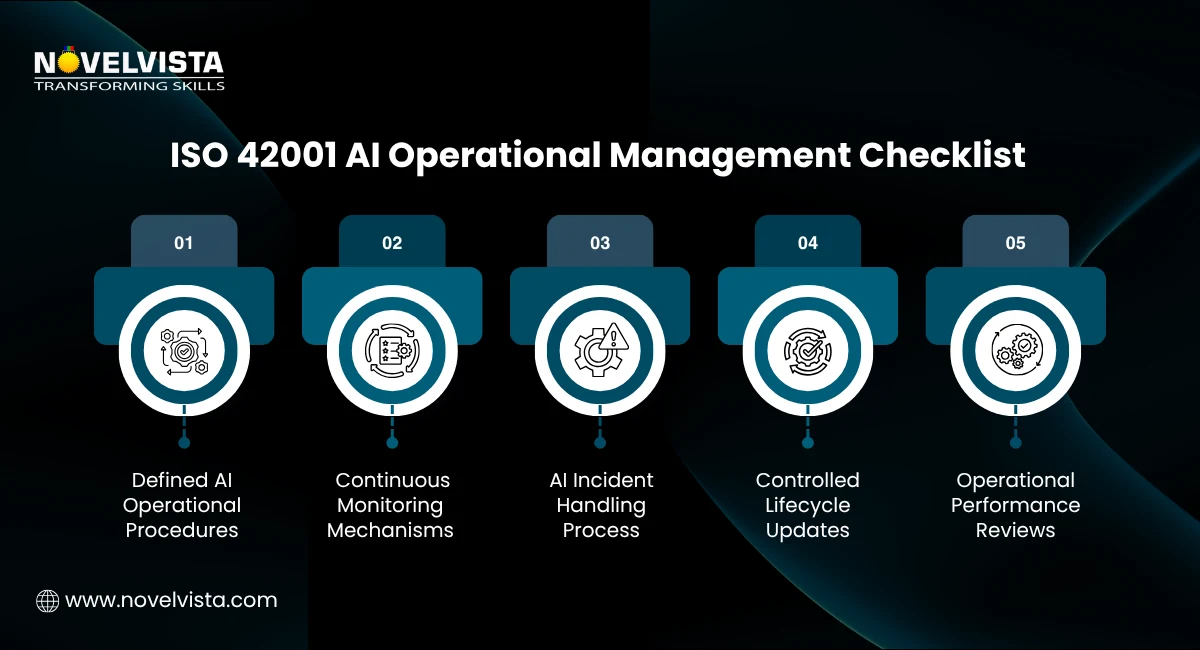

Clause 8.4 is where ISO 42001 AI operational management moves from policy into practice. It defines the specific requirements for controlling AI systems during active operation. This includes maintaining documented processes, defining performance thresholds, and ensuring that human oversight mechanisms are in place where needed.
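To make "performance thresholds" and "human oversight" concrete, the sketch below checks monitored metrics against documented limits and routes outputs to a human reviewer whenever any limit is breached or a metric is missing. The metric names, the limit values, and the routing rule are all hypothetical assumptions for illustration; the standard does not prescribe specific metrics or numbers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Threshold:
    """A documented operating limit for one monitored metric (hypothetical)."""
    metric: str
    minimum: Optional[float] = None
    maximum: Optional[float] = None


def check_thresholds(metrics: dict[str, float], thresholds: list[Threshold]) -> list[str]:
    """Return human-readable breaches; an empty list means all limits are met."""
    breaches = []
    for t in thresholds:
        value = metrics.get(t.metric)
        if value is None:
            # A metric that was not reported counts as a breach, not a pass.
            breaches.append(f"{t.metric}: no value reported")
            continue
        if t.minimum is not None and value < t.minimum:
            breaches.append(f"{t.metric}={value} below minimum {t.minimum}")
        if t.maximum is not None and value > t.maximum:
            breaches.append(f"{t.metric}={value} above maximum {t.maximum}")
    return breaches


def route_decision(metrics: dict[str, float], thresholds: list[Threshold]) -> str:
    """Hypothetical rule: any breach sends outputs to human review until resolved."""
    return "human_review" if check_thresholds(metrics, thresholds) else "automated"


limits = [
    Threshold("accuracy", minimum=0.90),
    Threshold("false_positive_rate", maximum=0.05),
]
print(route_decision({"accuracy": 0.93, "false_positive_rate": 0.08}, limits))  # human_review
```

Treating a missing metric as a breach is a deliberate choice: a silent monitoring failure should escalate, not pass.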

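Clause 8.4 requires organizations to establish and maintain controls that keep AI systems behaving as intended throughout their operational life. In practice, this means documented operating procedures, defined performance thresholds, ongoing monitoring for anomalies, and human oversight at critical decision points, all backed by records that can be produced at audit.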

One of the most important aspects of AI system lifecycle management under Clause 8.4 is that it covers the entire arc of an AI system, not just the deployment phase: conception and design, development, deployment, ongoing operation and monitoring, and eventual decommissioning.

This lifecycle-wide approach distinguishes ISO 42001 from narrower technical standards that focus only on model performance.
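One way to picture lifecycle-wide governance is as a state machine: an AI system may only move between stages along approved paths, and every move is recorded with a named approver. The sketch below assumes a hypothetical transition policy (for example, allowing a rollback from deployment to development); your own AIMS would define which transitions and approvals apply.

```python
from enum import Enum


class Stage(Enum):
    CONCEPTION = "conception"
    DEVELOPMENT = "development"
    DEPLOYMENT = "deployment"
    OPERATION = "operation"
    DECOMMISSIONED = "decommissioned"


# Hypothetical transition policy: which stages may follow each stage.
ALLOWED = {
    Stage.CONCEPTION: {Stage.DEVELOPMENT, Stage.DECOMMISSIONED},
    Stage.DEVELOPMENT: {Stage.DEPLOYMENT, Stage.DECOMMISSIONED},
    Stage.DEPLOYMENT: {Stage.OPERATION, Stage.DEVELOPMENT},  # rollback allowed
    Stage.OPERATION: {Stage.DEVELOPMENT, Stage.DECOMMISSIONED},  # retrain or retire
    Stage.DECOMMISSIONED: set(),  # terminal stage
}


class AISystem:
    def __init__(self, name: str):
        self.name = name
        self.stage = Stage.CONCEPTION
        # (from_stage, to_stage, approver) tuples kept as lifecycle evidence.
        self.history: list[tuple[Stage, Stage, str]] = []

    def advance(self, target: Stage, approver: str) -> None:
        if not approver:
            raise ValueError("every stage change needs a named approver")
        if target not in ALLOWED[self.stage]:
            raise ValueError(f"{self.stage.value} -> {target.value} is not permitted")
        self.history.append((self.stage, target, approver))
        self.stage = target


bot = AISystem("support-chatbot")
bot.advance(Stage.DEVELOPMENT, approver="ai-governance-board")
bot.advance(Stage.DEPLOYMENT, approver="release-manager")
bot.advance(Stage.OPERATION, approver="release-manager")
print(bot.stage.value)  # operation
```

The `history` list is what an auditor would ask to see: who approved each stage change, and when the system left service.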

Effective AI operations management requires a systematic approach to identifying and addressing risk. ISO 42001 divides this into two distinct but related activities.

Organizations must systematically identify potential negative impacts of their AI systems. This goes well beyond security vulnerabilities to include:

| Risk Category | Examples |
| --- | --- |
| Bias and Fairness | Discriminatory outputs in hiring, lending, or healthcare triage |
| Security | Adversarial attacks, data poisoning, model inversion |
| Ethical Concerns | Lack of transparency, manipulation of user behavior |
| Legal and Regulatory | Non-compliance with GDPR, sector-specific AI regulations |
| Operational | System drift, performance degradation over time |
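A simple way to turn these categories into a prioritized risk register is a likelihood × severity score. The 1 to 5 scales and the low/medium/high bands below are hypothetical conventions, not something ISO/IEC 42001 mandates; the sketch only shows how identified risks can be ranked so that treatment effort goes where exposure is greatest.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    category: str     # e.g. "Bias and Fairness", "Security", "Operational"
    description: str
    likelihood: int   # hypothetical scale: 1 (rare) .. 5 (almost certain)
    severity: int     # hypothetical scale: 1 (negligible) .. 5 (critical)

    def __post_init__(self):
        for name in ("likelihood", "severity"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        return self.likelihood * self.severity  # ranges from 1 to 25

    @property
    def level(self) -> str:
        # Hypothetical banding of the 1-25 score range.
        if self.score >= 15:
            return "high"
        if self.score >= 8:
            return "medium"
        return "low"


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Highest-scoring risks first."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


register = [
    Risk("Bias and Fairness", "Screening model favors one applicant group", 3, 5),
    Risk("Security", "Training data poisoning via a public data feed", 2, 4),
    Risk("Operational", "Accuracy drift after a seasonal change in inputs", 4, 3),
]
for r in prioritize(register):
    print(r.category, r.score, r.level)
```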

Once risks are identified, they must be formally addressed. Treatment measures under ISO 42001 include data quality controls, algorithmic fairness testing, adversarial robustness measures, and the implementation of human oversight at critical decision points. Every treatment measure must be documented, assigned an owner, and reviewed on a defined schedule.
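The requirement that every treatment measure be documented, owned, and reviewed on a schedule lends itself to an automated check. The sketch below flags treatments with no owner or with a review date that has passed. The `Treatment` fields, risk IDs, and review intervals are hypothetical examples, not fields defined by the standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Treatment:
    risk_id: str
    measure: str            # e.g. "Quarterly fairness testing"
    owner: str              # accountable person or role
    last_reviewed: date
    review_every_days: int  # the defined review schedule

    @property
    def next_review(self) -> date:
        return self.last_reviewed + timedelta(days=self.review_every_days)


def register_gaps(treatments: list[Treatment], today: date) -> list[str]:
    """Flag treatments that have no owner or whose review is overdue."""
    gaps = []
    for t in treatments:
        if not t.owner.strip():
            gaps.append(f"{t.risk_id}: '{t.measure}' has no owner")
        if t.next_review < today:
            gaps.append(f"{t.risk_id}: '{t.measure}' review overdue since {t.next_review.isoformat()}")
    return gaps


treatments = [
    Treatment("R-001", "Quarterly fairness testing", "Head of Data Science", date(2026, 1, 10), 90),
    Treatment("R-002", "Adversarial robustness testing", "", date(2026, 3, 1), 180),
]
for gap in register_gaps(treatments, today=date(2026, 5, 13)):
    print(gap)
```

Running a check like this before each management review gives a documented answer to "which treatments have lapsed?" rather than relying on memory.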

This structured approach to risk is what gives ISO 42001 AI operational management its practical value. It moves organizations away from ad hoc responses to a disciplined, evidence-based framework. Since operational governance begins with structured risk identification, understanding Clause 8.2 of ISO 42001 can provide additional insight into how organizations are expected to conduct AI risk assessments before applying operational controls under Clause 8.4.

| Benefit | Detail |
| --- | --- |
| Responsible AI | Ensures AI tools are fair, transparent, and legally compliant, including data privacy |
| Trust and Compliance | Provides a structured framework to demonstrate responsible AI practices to regulators and stakeholders |
| Broad Applicability | Suitable for any organization, large or small, that develops or uses AI |
| Continuous Improvement | Mandates ongoing monitoring and refinement of AI systems to maintain efficiency and safety |
| Competitive Advantage | Certification signals maturity and trustworthiness to clients and partners |

A common misconception is that ISO 42001 is only relevant to large technology companies. In reality, it is equally applicable to a regional hospital using AI-assisted diagnostics, a financial services firm using automated credit scoring, or a manufacturer deploying predictive maintenance systems. The framework scales to the complexity of the AI system and the size of the organization.

Identify which AI systems fall under the AIMS. Not every algorithm in your organization necessarily requires full ISO 42001 compliance. Focus initially on the systems with the highest risk profiles: those making consequential decisions that affect individuals or operations.
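A first pass at scoping can be as simple as tagging each entry in an AI inventory and filtering for consequential systems. The inventory fields and the scoping rule below are hypothetical assumptions; a real scoping decision would also weigh regulatory exposure and the impact assessment results.

```python
from dataclasses import dataclass


@dataclass
class AIInventoryItem:
    name: str
    affects_individuals: bool  # e.g. hiring, lending, or triage decisions
    automated_decision: bool   # acts without a human in the loop
    business_critical: bool    # failure would disrupt core operations


def in_initial_scope(item: AIInventoryItem) -> bool:
    """Hypothetical rule: start with systems that make decisions about people,
    or critical decisions without human review."""
    return item.affects_individuals or (item.automated_decision and item.business_critical)


def initial_scope(inventory: list[AIInventoryItem]) -> list[str]:
    return [item.name for item in inventory if in_initial_scope(item)]


inventory = [
    AIInventoryItem("CV screening model", True, False, False),
    AIInventoryItem("Predictive maintenance", False, True, True),
    AIInventoryItem("Internal document search", False, True, False),
]
print(initial_scope(inventory))  # ['CV screening model', 'Predictive maintenance']
```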

Develop formal governance policies covering AI usage, data handling, accountability structures, and escalation processes. These policies form the backbone of your AIMS and must be reviewed regularly.

Perform regular audits, impact assessments, and risk evaluations. Establish a calendar for these activities and ensure findings are documented and acted upon. AI system impact assessment should be embedded into the development cycle, not treated as a one-time exercise.
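A recurring calendar of audits and assessments can be generated from each activity's repeat interval. The activity names and intervals below are hypothetical; the point is that each activity recurs on a defined cadence rather than happening once.

```python
from datetime import date, timedelta


def audit_calendar(start: date, activities: dict[str, int], horizon_days: int) -> list[tuple[date, str]]:
    """Return (due_date, activity) pairs within the horizon, earliest first.
    `activities` maps an activity name to its repeat interval in days."""
    schedule = []
    end = start + timedelta(days=horizon_days)
    for activity, interval in activities.items():
        if interval <= 0:
            raise ValueError(f"interval for {activity!r} must be positive")
        due = start + timedelta(days=interval)
        while due <= end:
            schedule.append((due, activity))
            due += timedelta(days=interval)
    return sorted(schedule)  # sorts by date, then by activity name


plan = audit_calendar(
    start=date(2026, 1, 1),
    activities={
        "Internal AIMS audit": 180,
        "AI system impact assessment review": 90,
        "Risk register review": 30,
    },
    horizon_days=365,
)
for due, activity in plan:
    print(due.isoformat(), activity)
```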

Engage an accredited certification body to conduct an independent audit of your AIMS. Certification provides external validation of your AI operations management practices and is increasingly required in regulated industries and public procurement processes.

If you want a deeper understanding of the standard beyond operational controls, exploring the complete ISO 42001 Syllabus can help you understand all clauses, audit requirements, governance principles, and AI risk management concepts covered in the framework.

ISO 42001 AI operational management is not a bureaucratic checkbox. It is a strategic capability that allows organizations to deploy AI with confidence, demonstrate accountability to stakeholders, and build the kind of institutional trust that increasingly determines competitive success. Clause 8.4, in particular, provides the operational backbone of the standard, translating governance principles into concrete, auditable controls that span the full AI system lifecycle.

As AI regulation tightens globally, from the EU AI Act to emerging frameworks in Asia and North America, organizations without a formal AI management system will find themselves increasingly exposed. ISO 42001 offers a proven, internationally recognized path to structured AI operations management that protects both the organization and the people its AI systems affect.

The question is no longer whether your organization needs ISO 42001. It is how quickly you can begin.

Ready to lead responsible AI governance with confidence?

Join NovelVista’s ISO/IEC 42001 Lead Auditor Certification Training and gain practical expertise in AI operational management, AI risk assessment, and AI system lifecycle governance. Designed for AI leaders, compliance professionals, auditors, and governance teams, this course helps you build real-world auditing skills and confidently manage ISO 42001 compliance in modern AI-driven organizations.

Start your ISO 42001 Lead Auditor journey today!
