Category | Quality Management

Last Updated On 08/04/2026

Artificial Intelligence isn’t failing quietly; it’s failing where it matters most

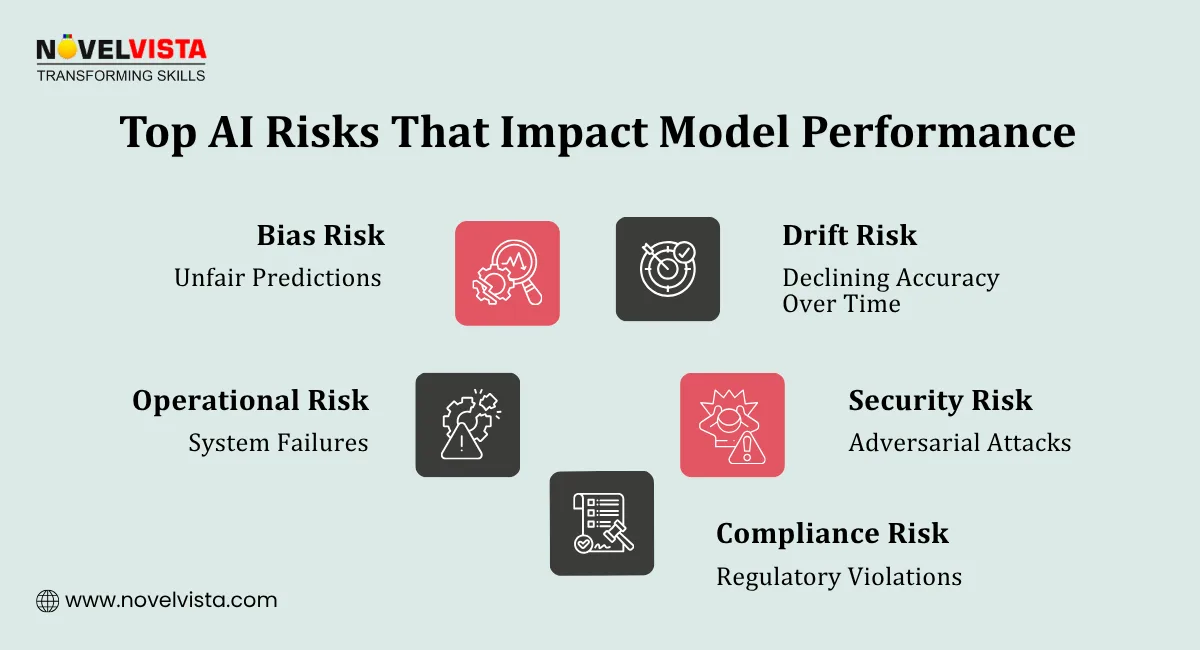

Behind polished demos and high-accuracy benchmarks, many AI systems struggle the moment they meet real-world complexity. Models that perform brilliantly in controlled environments often break under shifting data, unexpected inputs, or unseen scenarios. This isn’t just a technical gap; it’s a business risk. Billions are being invested in AI, yet a significant portion of initiatives stall, underperform, or never make it to production. The cause is not that AI doesn’t work, but that it isn’t robust enough.

So the real question isn’t whether your model is accurate.

It’s this: how does ISO 42001 ensure model robustness in environments where failure is not an option?

This question matters more than ever if you’re an AI professional, auditor, or business leader relying on AI in production.

Because in today’s AI-driven landscape, AI model robustness is what separates experimental success from operational reliability.

And robustness doesn’t happen by chance; it’s engineered through discipline, governance, and continuous validation.

That’s exactly where ISO 42001 comes in.

Let’s break down how it transforms AI from “working” to working reliably every time it matters.

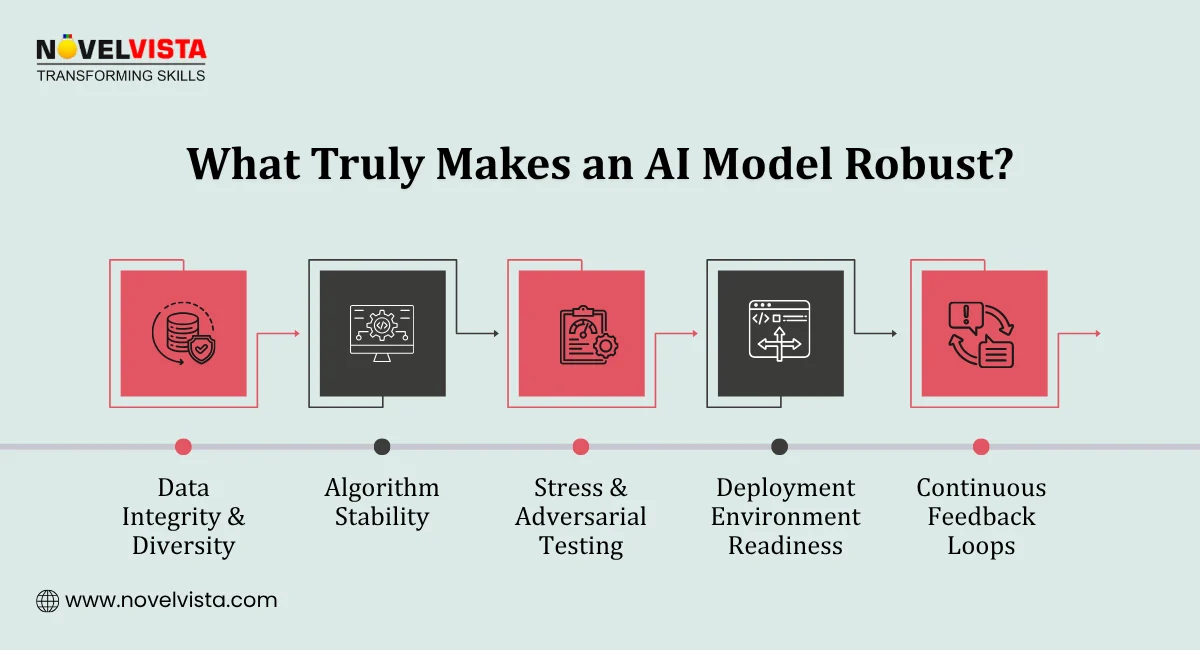

Before diving into standards, let’s understand the foundation.

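AI model robustness refers to a model’s ability to maintain reliable performance when it meets noisy or shifting data, unexpected inputs, and scenarios it never saw during training.

A robust model doesn’t just work in a controlled environment; it performs reliably in the real world.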
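One common way to quantify this definition is to compare a model’s accuracy on clean inputs with its accuracy on deliberately perturbed copies of the same inputs. The sketch below makes two assumptions: it uses a hypothetical toy classifier (`toy_classifier`), and it uses Gaussian input noise as the only perturbation. ISO 42001 does not prescribe this metric. A real evaluation would use the organization’s own model, data, and perturbation types.

```python
import random


def toy_classifier(x: list[float]) -> int:
    # Hypothetical stand-in model: predicts class 1 when the feature sum is positive.
    return 1 if sum(x) > 0 else 0


def accuracy(model, inputs: list[list[float]], labels: list[int]) -> float:
    correct = sum(1 for x, y in zip(inputs, labels) if model(x) == y)
    return correct / len(labels)


def add_gaussian_noise(x: list[float], sigma: float, rng: random.Random) -> list[float]:
    # Simulate noisy real-world inputs by perturbing every feature independently.
    return [v + rng.gauss(0.0, sigma) for v in x]


def robustness_gap(model, inputs, labels, sigma: float = 0.5, seed: int = 0) -> float:
    """Clean accuracy minus accuracy on noise-perturbed copies of the same inputs."""
    rng = random.Random(seed)
    noisy = [add_gaussian_noise(x, sigma, rng) for x in inputs]
    return accuracy(model, inputs, labels) - accuracy(model, noisy, labels)


if __name__ == "__main__":
    data_rng = random.Random(42)
    inputs = [[data_rng.uniform(-1, 1) for _ in range(3)] for _ in range(1000)]
    # Labels come from the model itself, so clean accuracy is 1.0 by construction.
    labels = [toy_classifier(x) for x in inputs]
    for sigma in (0.1, 0.5, 1.0):
        gap = robustness_gap(toy_classifier, inputs, labels, sigma)
        print(f"sigma={sigma}: robustness gap = {gap:.3f}")
```

A gap near zero suggests the model tolerates that level of noise. A large gap is an early warning that the model may break under shifting real-world data.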

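Without robustness, a model that looks strong on benchmarks can fail in production, producing wrong outputs exactly when conditions change.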

This is exactly where frameworks like ISO 42001 step in.

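ISO/IEC 42001 is an international standard for AI management systems (AIMS). It specifies requirements for establishing, implementing, maintaining, and continually improving a management system for the AI systems an organization develops, provides, or uses.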

Unlike traditional IT standards, ISO 42001 focuses specifically on AI lifecycle governance, ensuring that models are built, deployed, and monitored responsibly.

So, when we ask how ISO 42001 ensures model robustness, we’re really asking how it enforces discipline across every stage of AI development.

Let’s get to the core of the discussion.

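ISO 42001 requires organizations to define clear roles and responsibilities for their AI systems (Annex A.3). This means each model has accountable owners for its design, approval, deployment, and monitoring, and people have a defined way to raise concerns about it.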

This structured governance directly strengthens AI model robustness, as it minimizes errors caused by mismanagement or lack of oversight.

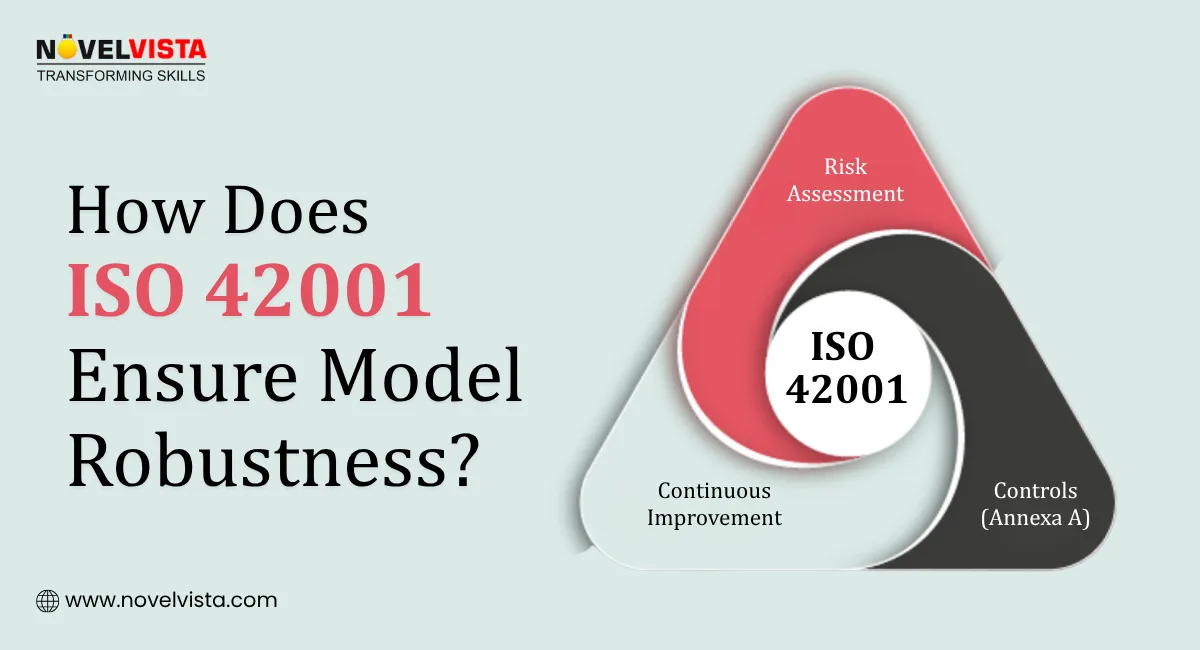

One of the key ways ISO 42001 ensures model robustness is through its risk-based methodology.

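Organizations are required to assess the risks posed by their AI systems, decide how to treat those risks, and assess the potential impacts of those systems (clause 6.1).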

This proactive approach helps ensure that models are not just built, but also tested against potential failures.

Note: Through its AI system impact assessment requirements (clause 6.1.4, with controls in Annex A.5 and implementation guidance in Annex B.5), ISO 42001 goes a step further by evaluating how AI systems affect individuals, groups, and society. This means AI model robustness isn’t measured only by technical performance or uptime. It is also measured by real-world impact, fairness, and potential consequences, which makes robustness both a technical and an ethical priority.
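The risk assessment and treatment steps above are often recorded in a risk register. ISO 42001 does not mandate a particular scoring method. The sketch below therefore assumes a common likelihood × impact scheme on a hypothetical 1 to 5 scale, with a hypothetical treatment threshold of 12. `AIRisk` and `needs_treatment` are illustrative names, not part of the standard.

```python
from dataclasses import dataclass


@dataclass
class AIRisk:
    description: str
    likelihood: int  # hypothetical scale: 1 (rare) to 5 (almost certain)
    impact: int      # hypothetical scale: 1 (negligible) to 5 (severe)

    def __post_init__(self) -> None:
        # Reject values outside the assumed 1-5 scale early.
        for name in ("likelihood", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def needs_treatment(risks: list[AIRisk], threshold: int = 12) -> list[AIRisk]:
    """Return risks scoring at or above the threshold, highest score first."""
    flagged = [r for r in risks if r.score >= threshold]
    return sorted(flagged, key=lambda r: r.score, reverse=True)


if __name__ == "__main__":
    register = [
        AIRisk("Accuracy drops under seasonal data drift", likelihood=4, impact=4),
        AIRisk("Training data poisoned via a third-party source", likelihood=2, impact=5),
        AIRisk("Explanations render slowly in the review UI", likelihood=3, impact=1),
    ]
    for risk in needs_treatment(register):
        print(f"{risk.score:>2}  {risk.description}")
```

Lowering the threshold brings more risks into active treatment. The right cut-off is a risk-acceptance decision the organization has to make and document, not a fixed number.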

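ISO 42001 doesn’t treat AI as a one-time project. Instead, it covers the full AI system life cycle: requirements and design, development, verification and validation, deployment, and operation and monitoring (Annex A.6).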

By embedding robustness checks at every stage, the standard supports long-term reliability, not just short-term accuracy.

Note: Through its AI system life cycle controls (Annex A.6, with implementation guidance in Annex B.6), ISO 42001 expects AI model robustness to be considered and documented from the moment a system is developed or acquired, not after deployment. This builds robustness into the foundation of the system rather than treating it as a reactive fix later in the lifecycle.
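One way to make “robustness documented from the start” operational is a stage gate: a system may only leave a life-cycle stage once the required robustness evidence exists. The stage names, evidence items, and functions below are hypothetical. This is a minimal sketch, not a structure the standard itself defines.

```python
# Hypothetical life-cycle stages, in order.
STAGES = ["design", "development", "validation", "deployment", "monitoring"]

# Hypothetical evidence that must exist before a system may leave each stage.
# Evidence accumulates: later stages still require the earlier artifacts.
EXIT_EVIDENCE = {
    "design": {"robustness_requirements"},
    "development": {"robustness_requirements", "data_quality_report"},
    "validation": {"robustness_requirements", "data_quality_report", "stress_test_results"},
    "deployment": {"robustness_requirements", "data_quality_report",
                   "stress_test_results", "monitoring_plan"},
}


def can_advance(stage: str, evidence: set[str]) -> tuple[bool, set[str]]:
    """Return whether the system may leave `stage`, plus any missing evidence."""
    if stage not in EXIT_EVIDENCE:
        raise ValueError(f"no exit criteria defined for stage {stage!r}")
    missing = EXIT_EVIDENCE[stage] - evidence
    return (not missing, missing)


def next_stage(stage: str, evidence: set[str]) -> str:
    # Refuse to move on while any required evidence is missing.
    ok, missing = can_advance(stage, evidence)
    if not ok:
        raise RuntimeError(f"cannot leave {stage!r}: missing {sorted(missing)}")
    return STAGES[STAGES.index(stage) + 1]


if __name__ == "__main__":
    print(next_stage("design", {"robustness_requirements"}))
    print(can_advance("development", {"robustness_requirements"}))
```

Because the gate fails loudly, a missing robustness artifact blocks progress at the stage where it should have been produced, rather than surfacing after deployment.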

A major pillar of robustness is model validation and testing.

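ISO 42001 requires organizations to define and document verification and validation measures for their AI systems, along with the criteria for applying them (Annex A.6).

This ongoing model validation and testing helps ensure that AI systems remain stable even as environments change.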
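Ongoing validation usually ends in an explicit go/no-go decision. The sketch below blocks release if any test slice falls short. It assumes accuracy has already been measured on a clean baseline hold-out set and on several stress-test slices. The slice names, the 0.90 minimum accuracy, and the 0.05 maximum allowed drop are all hypothetical acceptance criteria.

```python
def validation_gate(
    results: dict[str, float],
    min_accuracy: float = 0.90,  # hypothetical acceptance criterion
    max_drop: float = 0.05,      # hypothetical max accuracy drop versus baseline
) -> tuple[bool, list[str]]:
    """Go/no-go check. `results` maps test-slice name to accuracy; the key
    'baseline' must hold accuracy on the clean hold-out set."""
    if "baseline" not in results:
        raise ValueError("results must include a 'baseline' slice")
    baseline = results["baseline"]
    failures = []
    for name, acc in results.items():
        if acc < min_accuracy:
            failures.append(f"{name}: accuracy {acc:.2f} is below {min_accuracy:.2f}")
        elif name != "baseline" and baseline - acc > max_drop:
            failures.append(
                f"{name}: drop of {baseline - acc:.2f} versus baseline exceeds {max_drop:.2f}"
            )
    return (not failures, failures)


if __name__ == "__main__":
    measured = {"baseline": 0.95, "noisy_inputs": 0.92, "new_region": 0.87, "edge_cases": 0.93}
    passed, reasons = validation_gate(measured)
    print("release approved" if passed else f"release blocked: {reasons}")
```

The gate checks two things: an absolute floor and a relative drop. A slice can look acceptable in isolation while still revealing that the model degrades noticeably outside its baseline conditions.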

You can’t have robust models without robust data.

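ISO 42001 emphasizes the quality, provenance, and preparation of the data used to develop and operate AI systems (Annex A.7).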

Poor data leads to poor models. It’s as simple as that.
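Basic data checks can be automated before any training run. The sketch below checks completeness, exact duplicates, and label balance. It assumes tabular records stored as Python dictionaries; the field names and the 10% minimum class share are hypothetical. Label balance is only a rough proxy for representativeness, which ultimately has to be judged against the real population the model will serve.

```python
from collections import Counter


def data_quality_report(
    rows: list[dict],
    label_key: str,
    required: list[str],
    min_class_share: float = 0.10,  # hypothetical minimum share per label
) -> list[str]:
    """Return human-readable data issues; an empty list means none were found."""
    issues = []

    # Completeness: every required field must be present and not None.
    missing = sum(1 for r in rows if any(r.get(k) is None for k in required))
    if missing:
        issues.append(f"{missing} of {len(rows)} rows missing required fields")

    # Exact duplicates can silently over-weight some examples.
    seen, duplicates = set(), 0
    for r in rows:
        key = tuple(sorted(r.items()))  # assumes field values are hashable
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)
    if duplicates:
        issues.append(f"{duplicates} duplicate row(s)")

    # Label balance: flag labels rare enough to be under-learned.
    counts = Counter(r[label_key] for r in rows if r.get(label_key) is not None)
    total = sum(counts.values())
    for label, n in counts.items():
        if n / total < min_class_share:
            issues.append(f"label {label!r} makes up only {n / total:.1%} of labelled rows")

    return issues


if __name__ == "__main__":
    rows = [{"age": 20 + i, "label": "approve"} for i in range(18)]
    rows += [{"age": None, "label": "approve"}, {"age": 50, "label": "deny"}]
    for issue in data_quality_report(rows, label_key="label", required=["age", "label"]):
        print(issue)
```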

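Note: ISO 42001’s data controls (Annex A.7, with implementation guidance in Annex B.7) also help address advanced risks such as data poisoning. By requiring organizations to ensure that training data is representative, of known provenance, and of adequate quality, the standard reduces the opportunity for manipulated data to enter a model. Adversarial evasion attacks are a related threat that targets the model at inference time: tiny, deliberately crafted changes to input data cause a model to fail or produce incorrect outputs. Guarding against them requires robustness testing of the model itself, not only clean data. Together, strict data quality and security controls and robustness testing strengthen AI model robustness at its core.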
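To see why tiny input changes matter, consider the fast gradient sign method (FGSM), a well-known evasion attack. For a linear model with score w·x + b, the gradient of the score with respect to the input is simply w. Shifting every feature by at most ε in the direction of sign(w), or its opposite, therefore moves the score by ε times the sum of |w|. The weights and input below are hypothetical. Real attacks target trained, usually non-linear models, but the mechanism is the same. This example illustrates the threat robustness testing must cover; it is not a technique prescribed by ISO 42001.

```python
def score(w: list[float], b: float, x: list[float]) -> float:
    # Linear decision score w·x + b.
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def predict(w: list[float], b: float, x: list[float]) -> int:
    return 1 if score(w, b, x) > 0 else 0


def _sign(v: float) -> int:
    return (v > 0) - (v < 0)


def fgsm_linear(w: list[float], b: float, x: list[float], epsilon: float) -> list[float]:
    """Fast gradient sign perturbation against a linear classifier.

    The gradient of w·x + b with respect to x is w, so moving each feature by
    epsilon * sign(w) changes the score by epsilon * sum(|w|), the largest
    change possible when no feature may move by more than epsilon."""
    direction = -1 if predict(w, b, x) == 1 else 1  # push toward the other class
    return [xi + direction * epsilon * _sign(wi) for wi, xi in zip(w, x)]


if __name__ == "__main__":
    w, b = [0.8, -0.5, 0.3], -0.1   # hypothetical trained weights
    x = [0.3, 0.1, 0.2]             # hypothetical input, score = 0.15
    x_adv = fgsm_linear(w, b, x, epsilon=0.1)
    print(predict(w, b, x), predict(w, b, x_adv))
    print([round(v, 2) for v in x_adv])
```

Here no feature moves by more than 0.1, yet the prediction flips from class 1 to class 0. The original score is 0.15, while the perturbation can lower it by up to 0.1 × 1.6 = 0.16.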

Another critical answer to how ISO 42001 ensures model robustness lies in continuous monitoring.

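AI systems are dynamic; they evolve over time, and so does the data they see. ISO 42001 requires AI systems to be monitored in operation, so that performance degradation, data drift, and errors are detected and acted on.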

This creates a feedback loop in which problems are caught early and models are retrained or adjusted when needed. An ISO 42001 Toolkit can help organizations streamline AI governance by providing ready-to-use templates, frameworks, and resources for compliance and model robustness. As organizations shift toward agentic AI in 2026, with models acting autonomously, ISO 42001’s emphasis on continuous monitoring and feedback becomes essential for detecting “agentic drift” and keeping the system under control.
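Drift monitoring is one concrete form of continuous monitoring. The sketch below computes the Population Stability Index (PSI), a widely used drift statistic, between a reference sample (for example, training-time values of one feature) and a live sample. The 10 equal-width bins and the 0.1 and 0.25 alert thresholds are common rules of thumb, not requirements of ISO 42001.

```python
import math
import random


def psi(reference: list[float], live: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index using equal-width bins over the reference range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back if the reference is constant

    def bin_shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range live values into edge bins
            counts[idx] += 1
        # eps avoids log(0) when a bin is empty in one sample.
        return [max(c / len(values), eps) for c in counts]

    ref, cur = bin_shares(reference), bin_shares(live)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def drift_status(value: float) -> str:
    # Rule-of-thumb thresholds; tune per feature and use case.
    if value < 0.1:
        return "stable"
    if value < 0.25:
        return "moderate"
    return "significant"


if __name__ == "__main__":
    rng = random.Random(7)
    training = [rng.gauss(0, 1) for _ in range(5000)]
    live_ok = [rng.gauss(0, 1) for _ in range(5000)]
    live_shifted = [rng.gauss(1.0, 1) for _ in range(5000)]
    for name, sample in [("live_ok", live_ok), ("live_shifted", live_shifted)]:
        value = psi(training, sample)
        print(f"{name}: PSI={value:.3f} ({drift_status(value)})")
```

In practice, a “significant” reading would typically trigger investigation or retraining through the organization’s documented monitoring process.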

Even the most advanced AI systems need human supervision.

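ISO 42001 promotes human oversight of AI systems and clear, accessible information about how they behave for the people who use them or are affected by them.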

Why does this matter?

Because explainability isn’t just a feature; it’s a critical tool for robustness. ISO 42001 lists transparency and explainability among the objectives organizations can set for responsible AI (Annex C), alongside robustness itself. If a human cannot clearly understand why a model made a decision, they cannot verify whether the model is truly robust or is simply making a lucky guess, or even hallucinating.

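Human oversight powered by explainability helps reviewers catch incorrect or unreliable outputs, question unexpected decisions, and intervene before errors reach users.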

And ultimately, it strengthens AI model robustness by ensuring models are not only accurate but also understandable, verifiable, and trustworthy.
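A simple human-in-the-loop pattern is to route certain predictions to a reviewer instead of acting on them automatically. The sketch below assumes the model exposes a confidence score between 0 and 1. The 0.85 threshold, the `high_impact` flag, and the `route_prediction` function are hypothetical. Confidence scores are not explanations and can be poorly calibrated, so this kind of routing complements explainability rather than replacing it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoutedPrediction:
    label: str
    confidence: float
    route: str  # "auto" or "human_review"


def route_prediction(
    label: str,
    confidence: float,
    threshold: float = 0.85,    # hypothetical minimum confidence for automation
    high_impact: bool = False,  # hypothetical flag set by the business process
) -> RoutedPrediction:
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence must be in [0, 1], got {confidence}")
    # High-impact cases always go to a person; otherwise only low-confidence ones do.
    if high_impact or confidence < threshold:
        return RoutedPrediction(label, confidence, "human_review")
    return RoutedPrediction(label, confidence, "auto")


if __name__ == "__main__":
    cases = [("approve", 0.97, False), ("deny", 0.62, False), ("approve", 0.99, True)]
    for label, conf, impact in cases:
        print(route_prediction(label, conf, high_impact=impact))
```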

Implementing ISO 42001 offers several tangible benefits:

Models perform consistently across real-world scenarios. Even when faced with unpredictable inputs or changing environments, robust AI systems maintain stable and accurate outputs.

Proactive identification and mitigation of risks. Potential failures, biases, and performance issues are detected early, reducing disruptions and ensuring smoother AI operations.

Stakeholders gain confidence in AI systems. Transparent processes and consistent performance help build credibility with users, customers, and decision-makers.

Aligns with global AI governance expectations. Helps your AI systems meet evolving legal and ethical standards, reducing compliance risks across industries.

Robust frameworks enable long-term scalability. Well-structured AI models can adapt, expand, and perform efficiently as business needs and data volumes grow.

All of these contribute to a stronger answer to the question: how does ISO 42001 ensure model robustness?

So, how does ISO 42001 ensure model robustness?

It doesn’t treat robustness as a feature; it builds it into the DNA of your AI systems.

From the way models are designed and governed to how they are tested, deployed, and continuously monitored, ISO 42001 creates a system where failure points are anticipated, controlled, and minimized. It brings structure to uncertainty by combining risk management, rigorous model validation and testing, high data integrity standards, and ongoing performance oversight into one cohesive framework.

Because in reality, AI doesn’t fail in theory; it fails in production. And when it does, the impact isn’t just technical; it’s operational, financial, and reputational.

That’s why AI model robustness is no longer a “nice-to-have.” It’s the foundation of any AI system that people can trust.

ISO 42001 gives organizations more than guidelines; it provides a repeatable, auditable approach to building AI that performs consistently under pressure, adapts to change, and stands up to real-world complexity.

If your goal is not just to build AI, but to build AI that holds up when it truly counts, then ISO 42001 isn’t optional; it’s strategic.

Ready to take your AI governance expertise to the next level?

Join NovelVista’s ISO/IEC 42001 Lead Auditor Certification Training and gain hands-on knowledge in auditing AI systems, managing risks, and ensuring model robustness through globally recognized best practices. Designed for AI professionals, auditors, and business leaders, this course equips you with the skills to implement responsible AI frameworks and lead AI governance with confidence.

Start your ISO 42001 auditor journey today!
