Last Updated On 25/03/2026

Artificial Intelligence is making decisions that even its creators sometimes can’t fully explain. From loan approvals to hiring recommendations, AI systems are influencing critical outcomes, but when asked “why?”, many organizations don’t have a clear answer. This lack of clarity has sparked one of the biggest challenges in modern technology: trust. The growing gap between AI capability and AI transparency is exactly why explainability is becoming a top priority.

If you’ve ever wondered:

Then you’re already thinking about explainable AI.

This is where the question arises: how does ISO 42001 support explainable AI? And more importantly, how can organizations use it to build transparent, ethical, and trustworthy AI systems?

Whether you're an AI engineer, compliance officer, business leader, or IT professional, this guide will help you understand how ISO 42001 enables a strong Explainable AI framework while embedding Responsible AI practices and robust AI governance controls.

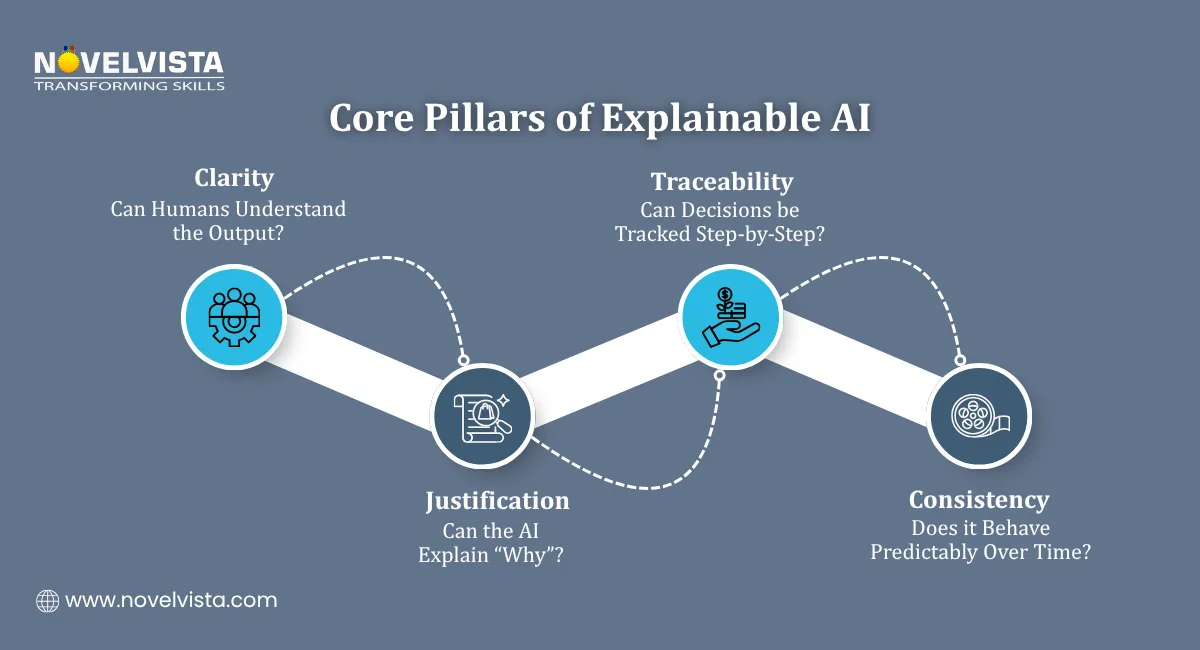

Explainable AI (XAI) refers to systems where the decisions and outputs of AI models can be clearly understood, interpreted, and trusted by humans. In practice, an Explainable AI framework ensures that AI decisions are transparent, outcomes can be justified with clear reasoning, and stakeholders can confidently trust the system and its results.

Without explainability:

For example, imagine an AI system rejecting a loan application. If it cannot explain why, it creates legal, ethical, and reputational risks.
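To make the loan example concrete, here is a minimal sketch of a decision that carries its own explanation. It assumes a simple linear scoring model; the feature names, weights, bias, and threshold are all hypothetical and are not taken from ISO 42001 or from any real lender. For a linear model, each feature's contribution is just its weight times its value, so the explanation can be read directly from the score.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Hypothetical weights for an illustrative linear credit score.
# None of these values come from ISO 42001 or any real lender.
WEIGHTS: Dict[str, float] = {
    "income_to_debt_ratio": 2.0,
    "years_of_credit_history": 0.5,
    "missed_payments_last_year": -1.5,
}
BIAS = -4.0
APPROVAL_THRESHOLD = 0.0


@dataclass
class Decision:
    approved: bool
    score: float
    # (feature name, contribution) pairs, lowest contribution first
    contributions: List[Tuple[str, float]]


def decide(applicant: Dict[str, float]) -> Decision:
    """Score an applicant and record how much each feature moved the score."""
    contributions = {
        name: weight * applicant[name] for name, weight in WEIGHTS.items()
    }
    score = BIAS + sum(contributions.values())
    # Sort ascending so the factors that hurt the score most come first.
    ranked = sorted(contributions.items(), key=lambda item: item[1])
    return Decision(score >= APPROVAL_THRESHOLD, score, ranked)


def explain(decision: Decision) -> str:
    """Render the decision and its per-feature contributions as plain text."""
    outcome = "approved" if decision.approved else "rejected"
    lines = [f"Application {outcome} (score {decision.score:+.2f})."]
    for name, value in decision.contributions:
        lines.append(f"  {name}: {value:+.2f}")
    return "\n".join(lines)


if __name__ == "__main__":
    applicant = {
        "income_to_debt_ratio": 1.0,
        "years_of_credit_history": 2.0,
        "missed_payments_last_year": 3.0,
    }
    print(explain(decide(applicant)))
```

Real credit models are rarely this simple, and more complex models usually need dedicated attribution techniques. The point of the sketch is only that the "why" is produced together with the decision rather than reconstructed afterwards.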

This is why organizations are increasingly focusing on AI explainability requirements as part of their governance strategies.

ISO 42001 is the first international standard designed specifically for Artificial Intelligence Management Systems (AIMS). It provides a structured approach to managing AI responsibly.

At its core, ISO 42001 focuses on:

It aligns closely with Responsible AI practices, ensuring AI systems are:

So, when asking how ISO 42001 supports explainable AI, the answer lies in its ability to integrate explainability directly into AI governance processes.

Let’s directly address the core question: how does ISO 42001 support explainable AI? ISO 42001 supports explainable AI by embedding transparency, documentation, governance, and accountability into every stage of the AI lifecycle. Rather than treating explainability as an afterthought, it establishes it as a mandatory design principle, ensuring that AI systems are built with clarity and trust from the ground up.

Here’s how it achieves that:

One of the strongest ways ISO 42001 supports explainable AI is through well-defined AI governance controls.

These controls ensure:

With governance in place, organizations can:

This directly strengthens the Explainable AI framework by making systems more transparent and traceable.
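As a rough illustration of what "traceable" can mean in practice, the sketch below builds an audit record for a single AI decision. ISO 42001 does not prescribe this schema; the function name `build_audit_record` and every field in the record are assumptions chosen for the example. The input is stored as a SHA-256 fingerprint rather than in raw form, so the record can be matched to a request without copying personal data into the log.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict


def build_audit_record(
    model_id: str,
    model_version: str,
    accountable_owner: str,
    inputs: Dict[str, Any],
    output: Any,
    explanation: str,
) -> Dict[str, Any]:
    """Assemble a traceable record for one AI decision (hypothetical schema)."""
    # Serialize with sorted keys so the same inputs always hash the same way.
    canonical_inputs = json.dumps(inputs, sort_keys=True)
    fingerprint = hashlib.sha256(canonical_inputs.encode("utf-8")).hexdigest()
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        "model_version": model_version,
        "accountable_owner": accountable_owner,
        "input_fingerprint": fingerprint,
        "output": output,
        "explanation": explanation,
    }


if __name__ == "__main__":
    record = build_audit_record(
        model_id="loan-scoring",          # hypothetical identifier
        model_version="1.4.0",            # hypothetical version
        accountable_owner="credit-risk-team",
        inputs={"income_to_debt_ratio": 1.0, "missed_payments_last_year": 3.0},
        output="rejected",
        explanation="missed_payments_last_year lowered the score the most",
    )
    print(json.dumps(record, indent=2))
```

A record like this ties each decision to a specific model version and a named owner, which is the kind of evidence an auditor typically asks for when reviewing accountability.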

ISO 42001 explicitly encourages organizations to define and document AI explainability requirements.

This includes:

By doing so, organizations ensure that:

This is a key reason why the question of how ISO 42001 supports explainable AI is so critical for compliance-driven industries.

Transparency is at the heart of both ISO 42001 and explainable AI.

ISO 42001 requires organizations to:

This supports Responsible AI practices by:

Transparency transforms AI from a “mystery tool” into a trusted decision-making partner.

Another important aspect of how ISO 42001 supports explainable AI is its focus on risk management.

ISO 42001 mandates:

Explainability plays a crucial role here:

By integrating explainability into risk management, organizations strengthen both AI governance controls and ethical AI outcomes.

Explainability isn’t a one-time effort; it’s an ongoing process.

ISO 42001 ensures:

This lifecycle approach ensures that:

This continuous improvement loop is essential to sustaining a strong Explainable AI framework. Practicing ISO 42001 Exam Questions can help you understand AI governance concepts, audit requirements, and real-world implementation scenarios.

Implementing ISO 42001 brings several advantages when it comes to explainability:

Enhanced Trust

Users and stakeholders are more likely to trust AI systems that are transparent and explainable. When decisions are clearly understood, it builds confidence and encourages wider adoption across the organization.

Regulatory Compliance

As global AI regulation increases, a structured management system makes it easier to meet AI explainability requirements. Organizations can demonstrate accountability and align with compliance standards, reducing their legal and operational risk.

Better Decision-Making

Explainable systems provide insights that help businesses make informed decisions. By understanding how outcomes are generated, teams can act with greater clarity and strategic confidence.

Risk Reduction

Clear explanations help identify and mitigate risks early. This makes it easier to detect biases, errors, or unexpected behaviors before they impact business outcomes.

Stronger AI Governance

Robust AI governance controls ensure accountability and consistency. They create a structured approach to managing AI systems while supporting transparency and responsible decision-making.

If you’re wondering whether this applies to you, here’s a quick breakdown.

ISO 42001 is ideal for:

Anyone asking how ISO 42001 supports explainable AI is likely dealing with systems where trust, transparency, and compliance are critical.

While the benefits are significant, organizations may face challenges such as:

Advanced models like deep learning can be difficult to explain.

Teams may not have experience with explainability techniques.

Highly accurate models are not always easily interpretable.

Implementing governance frameworks requires time and investment.

However, ISO 42001 provides structured guidance to overcome these barriers gradually.

So, how does ISO 42001 support explainable AI?

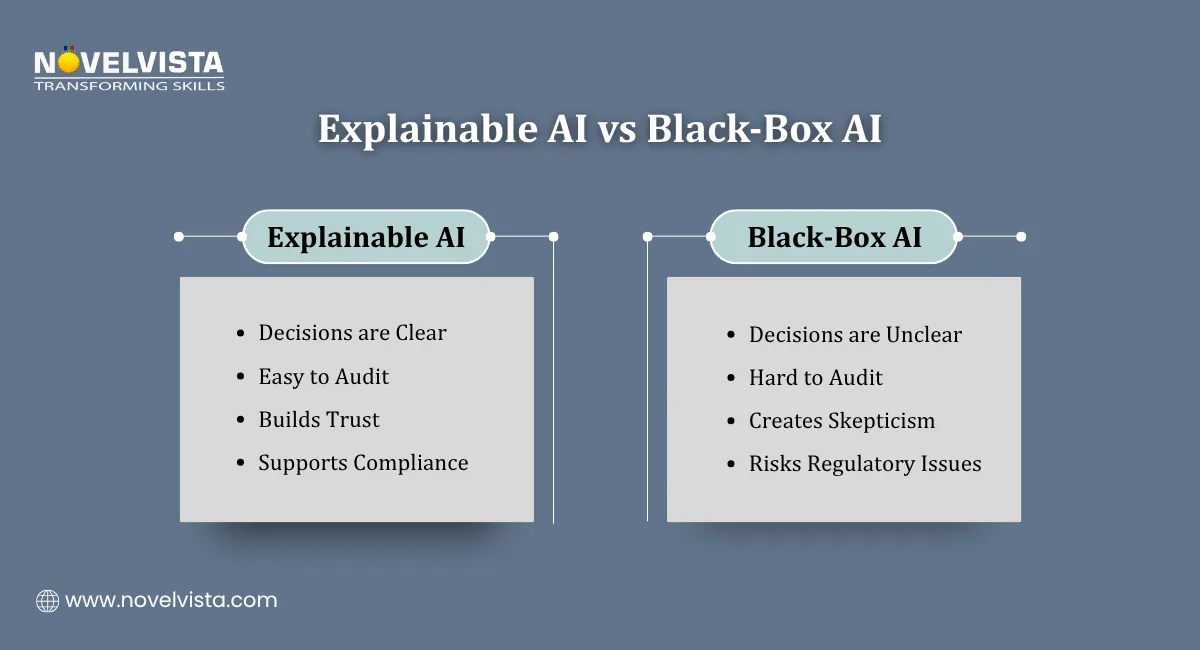

It does far more than provide guidance: it sets a clear foundation where explainability becomes a built-in requirement, not an afterthought. By embedding transparency, accountability, and governance into every stage of the AI lifecycle, ISO 42001 ensures that AI systems are not only powerful but also understandable, auditable, and trustworthy.

When organizations align with an Explainable AI framework, adopt Responsible AI practices, and implement strong AI governance controls, they move beyond risky black-box models to systems that inspire confidence, meet AI explainability requirements, and stand up to regulatory scrutiny. An ISO 42001 Salary Guide can help professionals understand earning potential, role-based pay trends, and career growth opportunities in AI governance and auditing.

In a world where AI decisions increasingly impact real lives and critical business outcomes, explainability is no longer optional; it’s a business imperative. ISO 42001 doesn’t just support this shift; it enables organizations to lead it with clarity, responsibility, and trust.

Ready to take your expertise in AI governance and explainability to the next level?

Join NovelVista’s ISO/IEC 42001 Lead Auditor Certification Training and gain hands-on knowledge of AI governance frameworks, AI explainability requirements, and real-world auditing practices. Designed for professionals working with AI systems, this course equips you with the skills to implement Responsible AI practices, strengthen AI governance controls, and confidently audit AI management systems.

Start your journey toward building transparent, trustworthy, and compliant AI systems today!
