Last Updated On 04/02/2026

AI is growing at a pace that traditional infrastructure was never designed to handle. And the strain is starting to show.

Across the world, Earth-based data centers are hitting hard limits:

In many regions, new data center projects are delayed or blocked, not because the technology isn’t needed, but because the physical footprint is becoming politically and environmentally uncomfortable.

This is the backdrop that makes one idea suddenly sound less crazy than it once did: AI data centers in space.

At the January 2026 World Economic Forum in Davos, Elon Musk made a bold claim. He said that space could become the lowest-cost location for AI data centers within the next two to three years.

That statement shocked some people. But once you understand the pressures building on Earth, the conversation changes from “Is this unrealistic?” to “Why are we running out of options down here?”

Musk’s argument for AI data centers in space isn’t about spectacle. It’s about solving two problems that dominate AI infrastructure costs: power and cooling.

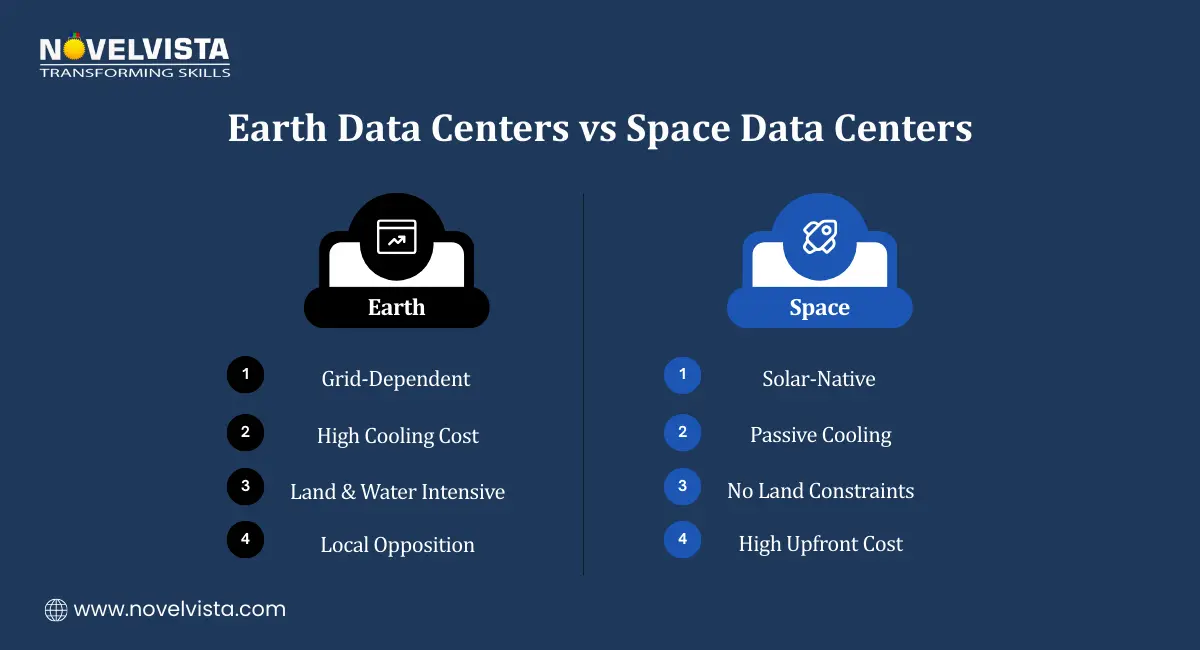

Space offers something Earth never can: near-continuous solar power.

For AI systems that need to run 24/7, this kind of uninterrupted power is extremely attractive, especially compared to struggling terrestrial grids.
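To put the power argument in rough numbers, here is a minimal Python sketch of how much solar array area a given compute load would need in orbit. The 30% cell efficiency and the 1 MW load are illustrative assumptions, not figures from Musk or any company mentioned here, and the sketch ignores eclipse periods, panel degradation, and conversion losses.

```python
# Rough solar array sizing for an orbital compute load.
# Assumptions (not from the article): 30% cell efficiency, panels always
# facing the Sun, no eclipse time, no power conversion losses.

SOLAR_CONSTANT_W_PER_M2 = 1361.0  # approximate sunlight intensity above the atmosphere


def solar_array_area_m2(load_watts: float, cell_efficiency: float = 0.30) -> float:
    """Return the panel area needed to supply load_watts in full sunlight."""
    if load_watts < 0:
        raise ValueError("load_watts must be non-negative")
    if not 0 < cell_efficiency <= 1:
        raise ValueError("cell_efficiency must be in (0, 1]")
    return load_watts / (SOLAR_CONSTANT_W_PER_M2 * cell_efficiency)


if __name__ == "__main__":
    one_megawatt = 1_000_000.0  # hypothetical load
    area = solar_array_area_m2(one_megawatt)
    print(f"Array area for 1 MW: {area:,.0f} m^2")
```

Under these assumptions, each megawatt of load needs on the order of a few thousand square meters of panels, so the area scales linearly with the size of the cluster.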

Cooling is one of the most expensive parts of running AI infrastructure on Earth. Servers generate massive heat, and removing it requires chillers, cooling towers, and large amounts of water.

In space, heat can radiate directly into the vacuum. No chillers. No cooling towers. No water usage.
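Radiating heat into vacuum still takes hardware: the heat has to leave through a radiator surface. As a rough check, the sketch below uses the Stefan-Boltzmann law to estimate the radiator area for a given heat load. The 300 K radiator temperature, the 0.9 emissivity, and the 1 MW load are illustrative assumptions, and the model ignores absorbed sunlight and infrared from Earth, so real designs would likely need more area.

```python
# Radiator sizing from the Stefan-Boltzmann law: P = emissivity * sigma * A * T^4.
# Assumptions (not from the article): single radiating surface at a uniform
# temperature, facing deep space, with no incoming sunlight or Earth infrared.

STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 * K^4)


def radiator_area_m2(
    heat_watts: float,
    radiator_temp_k: float = 300.0,
    emissivity: float = 0.9,
) -> float:
    """Return the radiator area needed to reject heat_watts purely by radiation."""
    if heat_watts < 0:
        raise ValueError("heat_watts must be non-negative")
    if radiator_temp_k <= 0:
        raise ValueError("radiator_temp_k must be positive")
    if not 0 < emissivity <= 1:
        raise ValueError("emissivity must be in (0, 1]")
    flux_w_per_m2 = emissivity * STEFAN_BOLTZMANN * radiator_temp_k ** 4
    return heat_watts / flux_w_per_m2


if __name__ == "__main__":
    one_megawatt = 1_000_000.0  # hypothetical heat load
    print(f"At 300 K: {radiator_area_m2(one_megawatt):,.0f} m^2")
    print(f"At 350 K: {radiator_area_m2(one_megawatt, radiator_temp_k=350.0):,.0f} m^2")
```

With these numbers, 1 MW of heat needs roughly 2,400 square meters of radiator at 300 K. Because emitted power grows with the fourth power of temperature, running the radiator hotter shrinks the required area quickly.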

Space-based infrastructure avoids:

From Musk’s point of view, space removes the biggest blockers to AI scale. Power and cooling stop being constraints, and that alone makes the idea worth exploring.

This idea didn’t appear out of thin air.

In late January 2026, Reuters reported a proposed merger between SpaceX and xAI. The goal was clear: accelerate the development of orbital AI infrastructure.

Here’s why that matters:

This combination of launch capability, orbital experience, and capital means space-based AI is no longer limited to slow, government-led programs. It can move at startup speed.

Forget the idea of a giant floating server warehouse. That’s not realistic.

Instead, space-based AI data centers would likely look like this:

One major difference: heat simply radiates away. On Earth, cooling can consume a huge percentage of operating costs. In space, that cost effectively disappears.

This doesn’t eliminate all challenges, but it removes one of the most expensive and controversial ones.

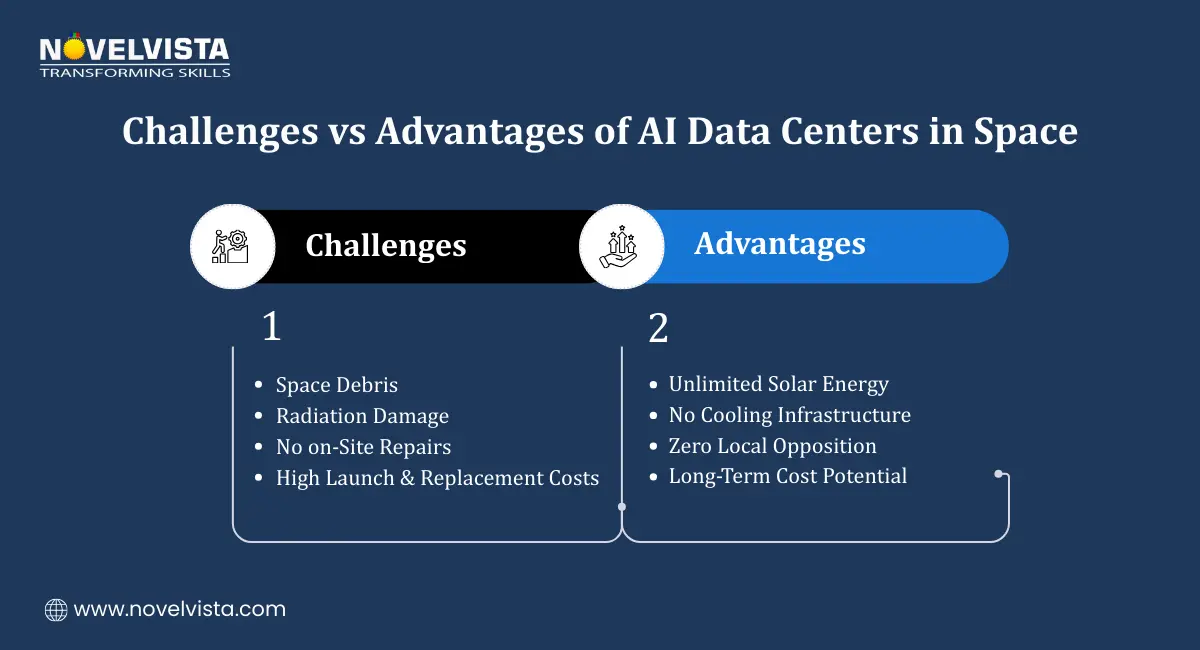

The benefits are real, but so are the risks.

Because of these challenges, most experts agree on one thing: space-based AI data centers won’t replace Earth-based ones anytime soon.

But they don’t have to.

They only need to complement Earth infrastructure in high-demand scenarios to reshape how AI scales globally.

Elon Musk may be the loudest voice, but he’s far from alone. Several major players are quietly working toward space-based data centers, each with its own timeline and strategy.

Blue Origin believes orbital data centers are feasible in the 10–20 year window. The focus is long-term:

The thinking is simple: if data centers are consuming more power than cities, maybe they shouldn’t be on Earth forever.

Starcloud has already moved from theory to testing.

Their long-term vision is bold: a 5-gigawatt AI “hypercluster” in space, equivalent to several hyperscale Earth data centers combined.

Google is exploring orbital AI through Project Suncatcher, in partnership with Planet Labs:

This isn’t about replacing Google Cloud, but about extending it beyond Earth.

China is treating this as national infrastructure.

This makes orbital AI a geopolitical issue, not just a technical one.

This sudden interest didn’t come out of nowhere. Earth-based AI infrastructure is under real pressure.

A real-world example is xAI’s Colossus supercomputer in Memphis, which has faced energy and infrastructure constraints as it scales.

As global AI demand accelerates, space starts to look like a pressure valve, an alternative path when Earth can’t keep up fast enough.

The answer is somewhere in the middle.

This isn’t an overnight shift. It’s a slow expansion of where AI can live.

If AI starts running across Earth and orbit, the skill set changes.

Professionals will need to understand:

The future of AI isn’t just about bigger models. It’s about where and how those models run.

As AI systems become more autonomous and more distributed, professionals who understand both models and infrastructure will lead.

The NovelVista Generative AI Professional Certification helps professionals:

Ideal for:

This Agentic AI Certification becomes critical as AI systems turn:

It covers:

These skills matter when AI stops living in one data center and starts living everywhere.

Elon Musk’s vision of AI data centers in space may sound radical today. But so did cloud computing, global CDNs, and hyperscale data centers once.

With Big Tech, governments, and AI leaders investing heavily, orbital AI is no longer theoretical. It’s an emerging layer of the global compute stack.

The professionals who understand generative AI, agentic systems, and infrastructure realities won’t just adapt to this future.

They’ll help build it.
