
Last Updated On 21/01/2026

We’ve always pictured cybercrime as something done by people sitting behind screens, typing fast, hiding behind VPNs, and launching attacks. But Europol’s latest 48-page report “Unmanned Future(s): The Impact of Robotics and Unmanned Systems on Law Enforcement” paints a very different picture of what crime may look like in the coming decade.

Think beyond laptops.

Think robots.

Think autonomous machines.

Think AI-powered systems acting on their own.

The report looks ahead to 2035 and raises a serious AI danger alert: robots, autonomous vehicles, drones, AI assistants, and unmanned systems may reshape how crimes are carried out and how law enforcement responds to them.

And this isn’t sci-fi storytelling. Europol makes it clear: this is scenario planning, not fantasy. These are very real risks based on current technological trends, military experiments, consumer robotics, and the way criminals already adapt to new tools.

In short:

The future of crime isn’t just humans vs hackers anymore.

It’s humans vs smart autonomous machines.

And cybersecurity teams need to start preparing now.

We’re heading toward a world where robots are everywhere. Not because of movies — but because it makes economic sense.

Robotics and AI are rapidly moving into:

They will clean homes, deliver packages, assist doctors, monitor patients, handle logistics, and even act as social companions.

Sounds helpful, right?

It is.

But everything powerful has a dark side.

Europol says we’re entering a future where machines won’t just support law enforcement. Criminals will also weaponize them. That’s the core warning.

And to be clear, Europol is not fear-mongering. The report repeatedly states these are plausible risks, not guaranteed events. But ignoring them would be a mistake.

Because history shows one thing very clearly:

So what kind of AI danger alert are we talking about? Europol breaks it into very real-world scenarios — and honestly, some of them are chilling.

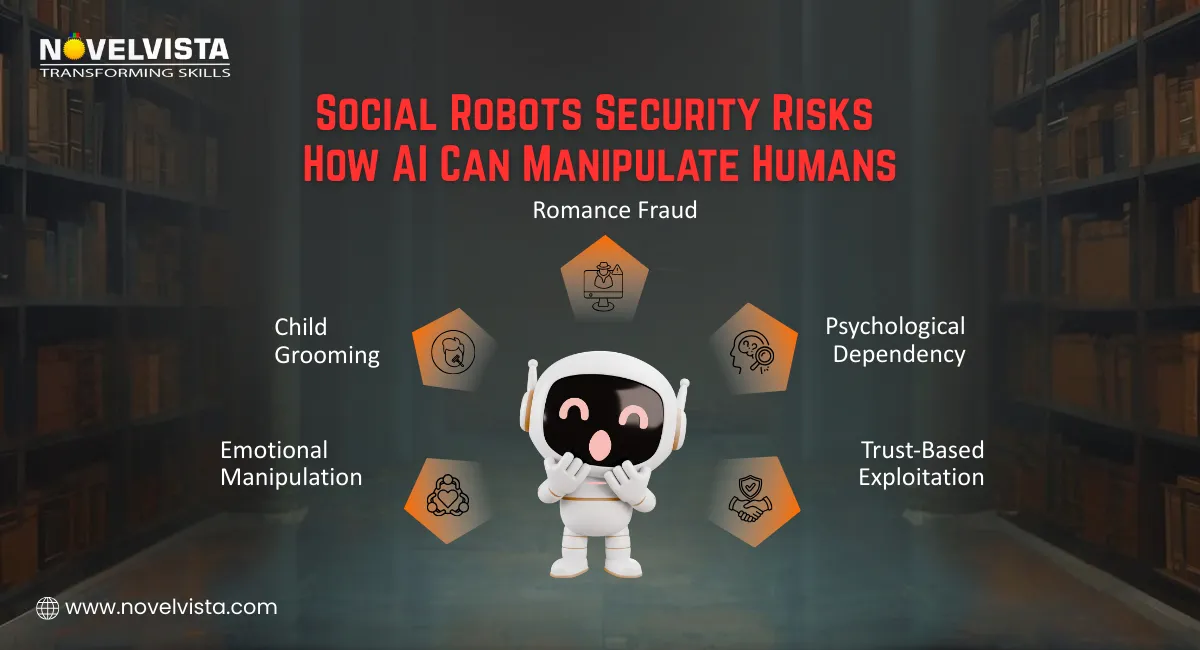

If robots become part of daily life, they won’t just be machines. Many will be social robots — talking, interacting, bonding with users.

But what happens when these social systems are misused?

Europol predicts risks like:

And there’s another angle — public anger.

If automation increases unemployment, we may see:

People may not just fear robots. They may hate them.

And we’ll be stuck in deep ethical debates:

Should robots have rights?

What happens if someone destroys a “social companion robot”?

Is it property damage… or something deeper?

Now, imagine your home care robot.

Or your smart assistant.

Or your service robot in a hospital.

What if hackers take control?

Europol warns that home and care robots could be turned into silent intruders capable of:

A hacked robot isn’t just “malware.”

It’s a physical body inside your personal space.

That changes everything.

We’ve already seen drones used in wars.

We’ve seen them used for drug delivery by cartels.

Now scale that.

Europol warns about:

These scenarios draw heavily on real-world tactics seen in Ukraine, Gaza, and other conflict zones, where drones are becoming cheaper, smarter, and easier to operate.

And what happens when someone programs a swarm?

It becomes a flying coordinated weapon.

This is not theoretical. Europol highlights real evidence:

Now imagine being a police force trying to fight this.

It’s not just “new tech.”

It completely breaks traditional policing logic.

Here’s a crazy but real question:

If a robot commits a crime…

Who is responsible?

Even today, courts struggle with responsibility in autonomous car accidents. Now extend that across drones, robots, and AI decision systems.

Law enforcement could face situations where:

Intent is hard to prove

Responsibility is unclear

Malfunction vs malicious design becomes a legal nightmare

The justice system wasn’t built for this.

Europol even discusses futuristic policing ideas like:

But new risks appear, too.

What if seized robots:

The battlefield isn’t physical anymore.

It’s digital + robotic + psychological.

Today’s policing is mostly ground-based.

Tomorrow’s policing will be:

Police will need:

This requires massive investment in:

Without it… law enforcement falls behind.

One section in the report hits hard.

Social robots — the cute, friendly ones designed to interact with people — may become tools for emotional manipulation.

Think about:

Now imagine those robots being hacked or misused.

Europol warns this could lead to:

So yes, we’re not just talking cybercrime anymore.

We’re talking about human trust being exploited through machines.

Not everyone agrees on how extreme the future will be.

Some experts say:

Europol leadership itself admits crime is changing fast — and threats are moving from pure cybercrime to cyber-physical crime.

Others, like robotics researchers, argue:

There’s another angle the report doesn’t cover deeply enough — and it matters a lot.

Who protects people from:

Because if robots watch everyone…

Who watches the robots?

This isn’t just about fighting crime.

It’s about protecting freedoms while fighting smarter criminals.

If you work in cybersecurity, this report is basically a challenge.

Here’s what the future demands:

This isn’t “optional knowledge” anymore.

It’s becoming core security work.

See how cybersecurity roles and skills are changing as AI reshapes cybercrime. Follow a clear roadmap to stay relevant, grow smarter, and build a future-ready security career.

As AI empowers criminals, the threat landscape is evolving fast—making AI-powered defense non-negotiable. That’s why future-ready learning paths are crucial. Cybersecurity professionals must upskill with the latest tools and techniques, and a growing range of courses is now available to help them stay one step ahead of AI-driven threats. By mastering these skills, they can anticipate attacks before they happen and protect critical systems more effectively. In a world where technology is advancing at breakneck speed, staying updated isn’t just an advantage—it’s a necessity.

This is built for people who want to stay ahead of AI-driven threats.

You’ll learn:

Perfect for:

This helps professionals understand AI at a deeper level — beyond tools and hype.

You’ll learn:

This is the knowledge future leaders will need.


Europol’s warning is loud and clear:

The next era of crime won’t just be about hackers behind screens.

It will be about smart machines, autonomous systems, and AI-powered tools being used in ways we’re only beginning to understand.

Governments must prepare.

Law enforcement must evolve.

And cybersecurity professionals must be far ahead of attackers — not chasing behind them.

Because the future of safety won’t just depend on strong police forces…

It will depend on people who understand AI deeply enough to fight back.
