Last Updated On 14/05/2025

Nowadays every organization is turning into a software company, and a growing set of tools and practices is pushing software development to happen at record speed.

In today’s cloud market, new DevOps tools and approaches emerge every day. Customers have so many alternatives to choose from that competition has reached its peak, which in turn pressures software firms to constantly deliver products and services that are better than their competitors’.

As cloud computing gains prominence, many organizations are adopting cloud practices and ideas such as containerization, which means DevOps tools like Docker are in high demand. In this article, we look at what exactly Docker is and at a few Docker concepts that are helpful for developers and architects.

Docker is an open platform for developing, shipping, and running applications. Docker enables you to separate your applications from your infrastructure so you can deliver software quickly. With Docker, you can manage your infrastructure in the same ways you manage your applications. By taking advantage of Docker’s methodologies for shipping, testing, and deploying code quickly, you can significantly reduce the delay between writing code and running it in production.

Docker provides the ability to package and run an application in a loosely isolated environment called a container. The isolation and security allow you to run many containers simultaneously on a given host. Containers are lightweight and contain everything needed to run the application, so you do not need to rely on what is currently installed on the host. You can easily share containers while you work, and be sure that everyone you share with gets the same container that works in the same way.

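Docker provides tooling and a platform to manage the lifecycle of your containers. You develop your application and its supporting components using containers; the container becomes the unit for distributing and testing your application; and when you are ready, you deploy your application into your production environment, as a container or an orchestrated service. This works the same whether your production environment is a local data center, a cloud provider, or a hybrid of the two.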

Fast, consistent delivery of your applications

Docker streamlines the development lifecycle by allowing developers to work in standardized environments using local containers which provide your applications and services. Containers are great for continuous integration and continuous delivery (CI/CD) workflows.

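Consider the following example scenario: your developers write code locally and share their work with their colleagues using Docker containers. They use Docker to push their applications into a test environment and run automated and manual tests. When developers find bugs, they fix them in the development environment and redeploy them to the test environment for testing and validation. When testing is complete, getting the fix to the customer is as simple as pushing the updated image to the production environment.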

Responsive deployment and scaling

Docker’s container-based platform allows for highly portable workloads. Docker containers can run on a developer’s local laptop, on physical or virtual machines in a data center, on cloud providers, or in a mixture of environments.

Docker’s portability and lightweight nature also make it easy to dynamically manage workloads, scaling up or tearing down applications and services as business needs dictate, in near real-time.

Running more workloads on the same hardware

Docker is lightweight and fast. It provides a viable, cost-effective alternative to hypervisor-based virtual machines, so you can use more of your compute capacity to achieve your business goals. Docker is perfect for high-density environments and for small and medium deployments where you need to do more with fewer resources.

If you have spent any time in the software development field, you have probably come across Docker and wondered what it is and why everyone is talking about it. Docker is an open-source platform that lets developers automate running applications inside lightweight, portable containers. These containers include everything an app needs (code, system tools, libraries, and settings) to run consistently across environments.

The reason Docker is so popular lies in its simplicity and speed. Compared to traditional virtual machines, Docker containers start quickly and use fewer resources, because they share the host’s kernel instead of booting a full guest operating system. Developers can spin up environments in seconds, test their apps, and tear everything down without affecting the host system.

Docker helps teams streamline development, testing, and deployment. Its integration with DevOps tools makes it a core part of modern CI/CD pipelines. Because containers behave consistently across environments, Docker also reduces the “it works on my machine” problem, which leads to faster go-to-market cycles and better collaboration between dev and ops teams.

Docker uses a client-server architecture. The Docker client talks to the Docker daemon, which does the heavy lifting of building, running, and distributing your Docker containers. The Docker client and daemon can run on the same system, or you can connect a Docker client to a remote Docker daemon. The Docker client and daemon communicate using a REST API, over UNIX sockets or a network interface. Another Docker client is Docker Compose, which lets you work with applications consisting of a set of containers.
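To make the client-server split concrete, here is a minimal sketch of what the docker CLI does under the hood: it sends plain HTTP requests to the daemon's REST API over a UNIX socket. It assumes the daemon listens on /var/run/docker.sock (the default on Linux) and that your user is allowed to open that socket. GET /version and GET /containers/json are real Engine API endpoints; the class and function names are hypothetical and not part of any Docker library.

```python
import http.client
import json
import socket


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTPConnection that dials a UNIX domain socket instead of a TCP port."""

    def __init__(self, socket_path: str, timeout: float = 10.0):
        # "localhost" only fills the Host header; no TCP connection is made.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self) -> None:
        # Called lazily by http.client on the first request.
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def docker_api_get(path: str, socket_path: str = "/var/run/docker.sock"):
    """Send a GET request to the Docker daemon and decode its JSON reply."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        resp = conn.getresponse()
        body = resp.read()
        if resp.status != 200:
            raise RuntimeError(f"Docker API returned {resp.status}: {body!r}")
        return json.loads(body)
    finally:
        conn.close()
```

On a machine with a running daemon, docker_api_get("/version")["ApiVersion"] reports the API version the daemon speaks, and docker_api_get("/containers/json") lists running containers, roughly the data behind docker ps.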

The Docker daemon

The Docker daemon (dockerd) listens for Docker API requests and manages Docker objects such as images, containers, networks, and volumes. A daemon can also communicate with other daemons to manage Docker services.

The Docker client

The Docker client (docker) is the primary way that many Docker users interact with Docker. When you use commands such as docker run, the client sends these commands to dockerd, which carries them out. The docker command uses the Docker API. The Docker client can communicate with more than one daemon.
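Which daemon the client talks to is a matter of configuration. One common mechanism is the DOCKER_HOST environment variable, which accepts addresses such as unix:///var/run/docker.sock, tcp://host:2376, or ssh://user@host. The sketch below mimics only that lookup; it ignores Docker contexts (docker context use) and Windows named pipes, which the real CLI also supports, and resolve_daemon is a hypothetical name.

```python
import os
from urllib.parse import urlparse

# Assumed default when DOCKER_HOST is unset and no Docker context is selected (Linux).
DEFAULT_DOCKER_HOST = "unix:///var/run/docker.sock"


def resolve_daemon(env=None) -> tuple[str, str]:
    """Return (transport, address) for the daemon a client should talk to."""
    env = os.environ if env is None else env
    host = env.get("DOCKER_HOST") or DEFAULT_DOCKER_HOST
    parsed = urlparse(host)
    if parsed.scheme == "unix":
        return "unix", parsed.path            # local socket file
    if parsed.scheme in ("tcp", "ssh"):
        return parsed.scheme, parsed.netloc   # remote daemon: host:port or user@host
    raise ValueError(f"unsupported DOCKER_HOST scheme: {parsed.scheme!r}")
```

With the real CLI, running DOCKER_HOST=ssh://user@host docker ps lists the containers on that remote daemon instead of the local one.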

Docker registries

A Docker registry stores Docker images. Docker Hub is a public registry that anyone can use, and Docker is configured to look for images on Docker Hub by default. You can even run your own private registry.

When you use the docker pull or docker run commands, the required images are pulled from your configured registry. When you use the docker push command, your image is pushed to your configured registry.
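Before contacting a registry, the client expands a short name such as ubuntu into a fully qualified reference. The function below is a simplified model of those defaulting rules: no registry host means docker.io, single-name images on Docker Hub live under the library/ namespace, and a missing tag becomes latest. The name normalize_image_ref is hypothetical, and the sketch skips the validation (allowed characters, lowercase names) that Docker's real parser performs.

```python
DEFAULT_REGISTRY = "docker.io"
DEFAULT_TAG = "latest"


def normalize_image_ref(ref: str) -> str:
    """Expand a short image reference into registry/repository[:tag][@digest]."""
    # Split off a digest (e.g. "@sha256:...") first; digest references get no default tag.
    digest = ""
    if "@" in ref:
        ref, digest = ref.split("@", 1)
        digest = "@" + digest

    # The first path component is a registry host only if it looks like one.
    first, sep, rest = ref.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        registry, remainder = first, rest
    else:
        registry, remainder = DEFAULT_REGISTRY, ref

    # A tag is whatever follows the last ":" that comes after the last "/".
    last_slash = remainder.rfind("/")
    colon = remainder.rfind(":")
    if colon > last_slash:
        repo, tag = remainder[:colon], remainder[colon + 1:]
    else:
        repo, tag = remainder, ("" if digest else DEFAULT_TAG)

    # Official images on Docker Hub are stored under the "library" namespace.
    if registry == DEFAULT_REGISTRY and "/" not in repo:
        repo = "library/" + repo

    tag_part = f":{tag}" if tag else ""
    return f"{registry}/{repo}{tag_part}{digest}"
```

For example, normalize_image_ref("ubuntu") gives docker.io/library/ubuntu:latest, while localhost:5000/team/app:1.0 is left unchanged because its first component names a registry host.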

Docker objects

When you use Docker, you are creating and using images, containers, networks, volumes, plugins, and other objects. This section is a brief overview of some of those objects.

IMAGES

An image is a read-only template with instructions for creating a Docker container. Often, an image is based on another image, with some additional customization. For example, you may build an image that is based on the ubuntu image but installs the Apache web server and your application, as well as the configuration details needed to make your application run.

You might create your own images, or you might only use those created by others and published in a registry. To build your own image, you create a Dockerfile with a simple syntax for defining the steps needed to create the image and run it. Each instruction in a Dockerfile is a build step, and the instructions that modify the file system (RUN, COPY, and ADD) each create a layer in the image. When you change the Dockerfile and rebuild the image, Docker reuses cached layers up to the first changed instruction and rebuilds only that step and the ones after it. This is part of what makes images so lightweight, small, and fast when compared to other virtualization technologies.
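The build cache behaves like a chain: each step's cache key depends on its parent's key plus its own instruction, so changing one instruction invalidates that step and every step after it. The sketch below is a simplified model of that idea, not BuildKit's actual algorithm; in particular, real builds also checksum the files referenced by COPY and ADD, which this model ignores. The function names are hypothetical.

```python
import hashlib


def cache_keys(instructions: list[str]) -> list[str]:
    """Chain a cache key through the build: each key covers its parent key plus its own instruction."""
    keys = []
    parent = ""
    for instruction in instructions:
        key = hashlib.sha256(f"{parent}\n{instruction}".encode()).hexdigest()
        keys.append(key)
        parent = key  # a change here alters every later key as well
    return keys


def steps_to_rebuild(old: list[str], new: list[str]) -> list[int]:
    """Indices of build steps in `new` that cannot be served from the cache built for `old`."""
    cached = set(cache_keys(old))
    return [i for i, key in enumerate(cache_keys(new)) if key not in cached]
```

For a four-step Dockerfile based on ubuntu that installs Apache, copies the site, and sets a CMD, editing the RUN line marks that step and the two after it for rebuilding, while the FROM step is reused. This is why slow-changing steps such as package installs are usually placed before frequently edited ones.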

CONTAINERS

A container is a runnable instance of an image. You can create, start, stop, move, or delete a container using the Docker API or CLI. You can connect a container to one or more networks, attach storage to it, or even create a new image based on its current state.

By default, a container is relatively well isolated from other containers and its host machine. You can control how isolated a container’s network, storage, or other underlying subsystems are from other containers or the host machine.

A container is defined by its image as well as any configuration options you provide to it when you create or start it. When a container is removed, any changes to its state that are not stored in persistent storage disappear.
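The lifecycle described above can be pictured as a small state machine. The sketch below is a toy model, not Docker's implementation: it covers only a subset of real container states (created, running, paused, exited) plus a "removed" pseudo-state, and it uses plain dictionaries to stand in for the container's writable layer and for a named volume. All names are hypothetical.

```python
from dataclasses import dataclass, field

# Toy subset of container states and the CLI verbs that move between them.
# "removed" is a pseudo-state: a real removed container simply no longer exists.
TRANSITIONS = {
    ("created", "start"): "running",
    ("running", "pause"): "paused",
    ("paused", "unpause"): "running",
    ("running", "stop"): "exited",
    ("exited", "start"): "running",
    ("created", "rm"): "removed",
    ("exited", "rm"): "removed",
}


@dataclass
class Container:
    image: str
    volume: dict = field(default_factory=dict)          # stands in for a named volume (outlives the container)
    writable_layer: dict = field(default_factory=dict)  # container-private changes
    state: str = "created"

    def apply(self, verb: str) -> str:
        """Move to the next state, or raise if the verb is not valid right now."""
        next_state = TRANSITIONS.get((self.state, verb))
        if next_state is None:
            raise ValueError(f"cannot {verb!r} a container in state {self.state!r}")
        self.state = next_state
        if next_state == "removed":
            self.writable_layer.clear()  # changes not in persistent storage disappear
        return self.state

    def write(self, path: str, data: str, persistent: bool = False) -> None:
        """Record a file write either in the volume or in the writable layer."""
        if self.state != "running":
            raise ValueError("only a running container can write files")
        target = self.volume if persistent else self.writable_layer
        target[path] = data
```

In this model, a file written to the writable layer is gone after stop and rm, while a file written to the volume dictionary survives, mirroring why databases and other state belong in volumes rather than inside the container.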

Example docker run command

The following command runs an Ubuntu container, attaches interactively to your local command-line session, and runs /bin/bash.

$ docker run -i -t ubuntu /bin/bash

When you run this command, the following happens (assuming you are using the default registry configuration):

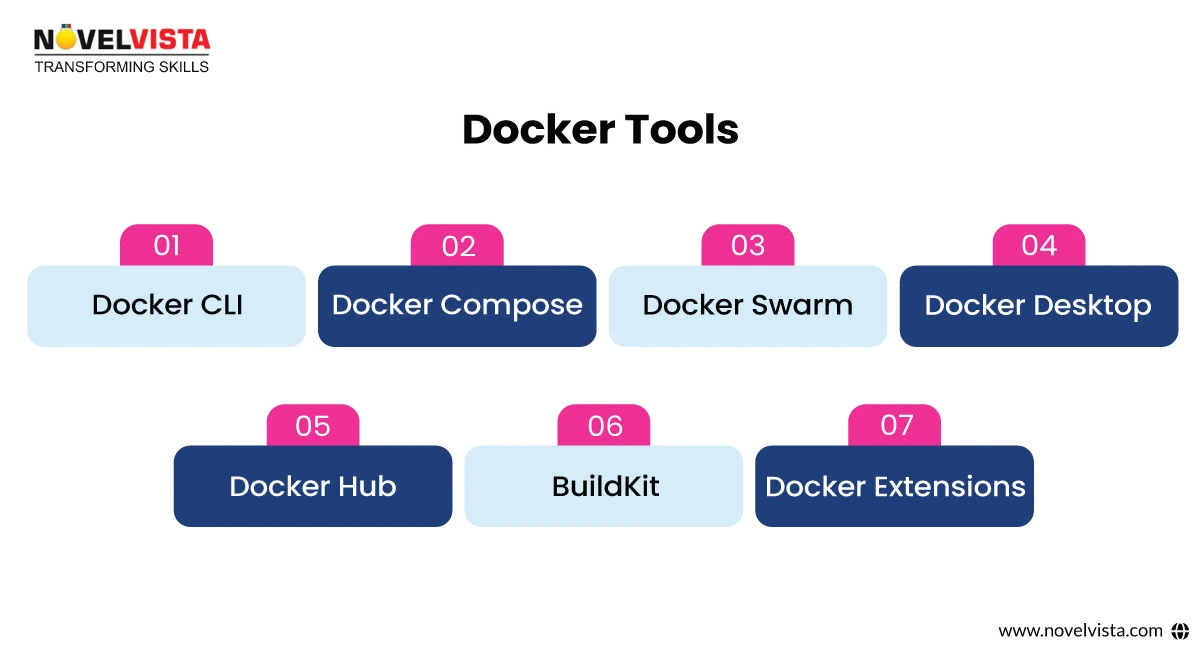
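Under the hood, the CLI turns that single docker run command into several Engine API calls. The sketch below only builds the sequence as data, without contacting a daemon. It is a simplified approximation: the real CLI first tries to create the container and pulls the image only if it is missing locally, and it sends many more fields in the create body. The endpoint paths are real Engine API routes; the function name docker_run_plan is hypothetical.

```python
import json
from urllib.parse import urlencode


def docker_run_plan(image: str, tag: str, cmd: list[str], interactive: bool, tty: bool):
    """Return (method, path, body) tuples that `docker run` roughly maps to, in order."""
    create_body = {
        "Image": f"{image}:{tag}",
        "Cmd": cmd,
        "Tty": tty,                 # -t: allocate a pseudo-TTY
        "OpenStdin": interactive,   # -i: keep STDIN open
        "AttachStdin": interactive,
        "AttachStdout": True,
        "AttachStderr": True,
    }
    attach_query = urlencode({"stream": 1, "stdin": int(interactive), "stdout": 1, "stderr": 1})
    return [
        # Pull the image (the real CLI does this only when it is not available locally).
        ("POST", "/images/create?" + urlencode({"fromImage": image, "tag": tag}), None),
        ("POST", "/containers/create", json.dumps(create_body)),
        # "{id}" is a placeholder for the container ID returned by the create call.
        ("POST", "/containers/{id}/attach?" + attach_query, None),
        ("POST", "/containers/{id}/start", None),
    ]
```

Calling docker_run_plan("ubuntu", "latest", ["/bin/bash"], interactive=True, tty=True) corresponds to the docker run -i -t ubuntu /bin/bash example: attaching before starting is what connects your terminal to the shell from its first prompt.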

The power of Docker depends not on containers alone but on the rich ecosystem that surrounds them, including Docker Compose for multi-container applications, Docker Hub and private registries for sharing images, and image scanners for security checks.

With this collection of tools, developers can go from a Dockerfile to a production deployment with minimal effort.

As with any powerful technology, security is one of the most important things to take care of. Below are some best practices to keep your Dockerized environments secure and production-ready:

1. Use Minimal Base Images: Starting with lightweight base images such as Alpine minimizes the attack surface. Avoid bloated images that contain unused tools or libraries.

2. Apply Least-Privilege Access: Avoid running containers as the root user. Create a non-root user in your Dockerfile and switch to it with the USER instruction.

3. Scan Images Frequently: Use tools such as Docker Scout (the successor to the deprecated docker scan command), Trivy, or Snyk to scan your images for known vulnerabilities. Automate these checks in your CI/CD workflow.

4. Use Verified Images: Only use trusted and verified images from Docker Hub, such as Docker Official Images. Enable Docker Content Trust (DCT), for example by setting DOCKER_CONTENT_TRUST=1, so that images are signed and verified before deployment.

5. Limit Capabilities: Use flags like --cap-drop and --cap-add to grant only the Linux capabilities the container actually needs. Also, consider making your container’s root file system read-only with the --read-only flag.

6. Network Isolation: Separate container networks to limit lateral movement in case of a breach. Only publish ports that are absolutely necessary.

7. Update Docker Regularly: Keep your Docker Engine, CLI, and associated tools up to date. Many vulnerabilities are fixed in newer releases.

By applying these best practices, you make sure that your containers are not only efficient but also secure and compliant.
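Some of these practices can be checked automatically in CI. The sketch below is a heuristic Dockerfile audit, not a replacement for the scanners named in practice 3: it flags a final build stage that runs as root (practice 2) and, as an extra heuristic that goes slightly beyond the list, base images without an explicit tag, which make it harder to know exactly which verified image you are deploying. It does not know a base image's own default user and ignores line continuations; audit_dockerfile is a hypothetical name.

```python
def audit_dockerfile(text: str) -> list[str]:
    """Heuristically flag an unpinned base image or a final stage that runs as root."""
    findings: list[str] = []
    stages: set[str] = set()  # names from "FROM ... AS name", so later FROMs may reference them
    user = None               # USER in effect for the current (eventually final) stage
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        keyword, _, rest = line.partition(" ")
        keyword = keyword.upper()
        tokens = [t for t in rest.split() if not t.startswith("--")]  # drop flags like --platform
        if keyword == "FROM" and tokens:
            image = tokens[0]
            user = None  # a USER from an earlier stage does not carry over
            if len(tokens) >= 3 and tokens[1].lower() == "as":
                stages.add(tokens[2])
            if image in stages or image == "scratch" or "@" in image:
                continue  # earlier stage, empty base, or digest-pinned image
            name = image.rsplit("/", 1)[-1]  # ignore a registry port such as localhost:5000
            if ":" not in name or name.endswith(":latest"):
                findings.append(f"base image '{image}' is not pinned to a specific tag")
        elif keyword == "USER" and tokens:
            user = tokens[0]
    if user is None or user.split(":")[0] in ("root", "0"):
        findings.append("final stage runs as root; add a non-root USER")
    return findings
```

A multi-stage build that compiles in golang:1.22, copies the result into alpine:3.20, and ends with USER 1000 produces no findings, while a bare FROM ubuntu with no USER produces both.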

Docker is not just a developer’s favorite; it is used across industries to solve real-world challenges. Let’s look at some notable examples.

1. Financial Sector – Capital One

Capital One used Docker to move to a microservices architecture. The result? Faster delivery cycles and improved compliance. Docker helped simplify their CI/CD pipeline while strengthening their security posture.

2. E-commerce – Shopify

Shopify uses Docker containers to create separate test environments for each feature branch. This helps keep bugs from leaking into production and makes it easier to debug and test specific components without disturbing the whole system.

3. Healthcare – Philips

Philips uses Docker for its healthcare applications to maintain HIPAA compliance. Docker’s isolation helps them develop and deliver secure applications across geographies while ensuring regulatory standards are met.

4. Media – Spotify

Spotify employs Docker to run hundreds of microservices. Developers use Docker to create production-like environments locally, minimizing the gap between development and deployment.

5. EdTech – Coursera

Coursera uses Docker to manage workloads such as video streaming, data analysis, and course delivery. Docker helps them scale effectively while maintaining performance and uptime across regions.

These real-world use cases show just how adaptable Docker can be, from fintech to education.

Docker has transformed the way developers build, ship, and run applications. Whether you’re exploring what Docker is for the first time or running hundreds of services in production, Docker provides flexibility, scalability, and speed.

Want to dive deeper into the world of containers and DevOps? Enroll in the Certified DevOps Practitioner Training & Certification from NovelVista to master Docker, Kubernetes, CI/CD, and more with hands-on labs and real-world scenarios.
