Last Updated On 27/01/2026

There was a time when using AI meant carefully typing the perfect prompt. You had to explain everything (context, background, preferences), almost like talking to a stranger who knew nothing about you. That phase is quietly ending.

In mid-January 2026, Google rolled out something very different: Personal Intelligence, which launched in beta on January 13–14, 2026. It’s available inside the Gemini app and AI Mode in Search, initially for Google AI Pro and Ultra subscribers using personal US accounts, with access rolling out gradually.

The big shift isn’t a new chatbot feature. It’s a mindset change.

Google’s AI is moving from simply answering questions to understanding context across your digital life, often without you spelling everything out. It’s less about what you ask in the moment, and more about what your data already says about you.

That’s what makes this moment feel different.

Let’s be honest: Google has known a lot about us for years.

Think about how much of daily life runs through Google:

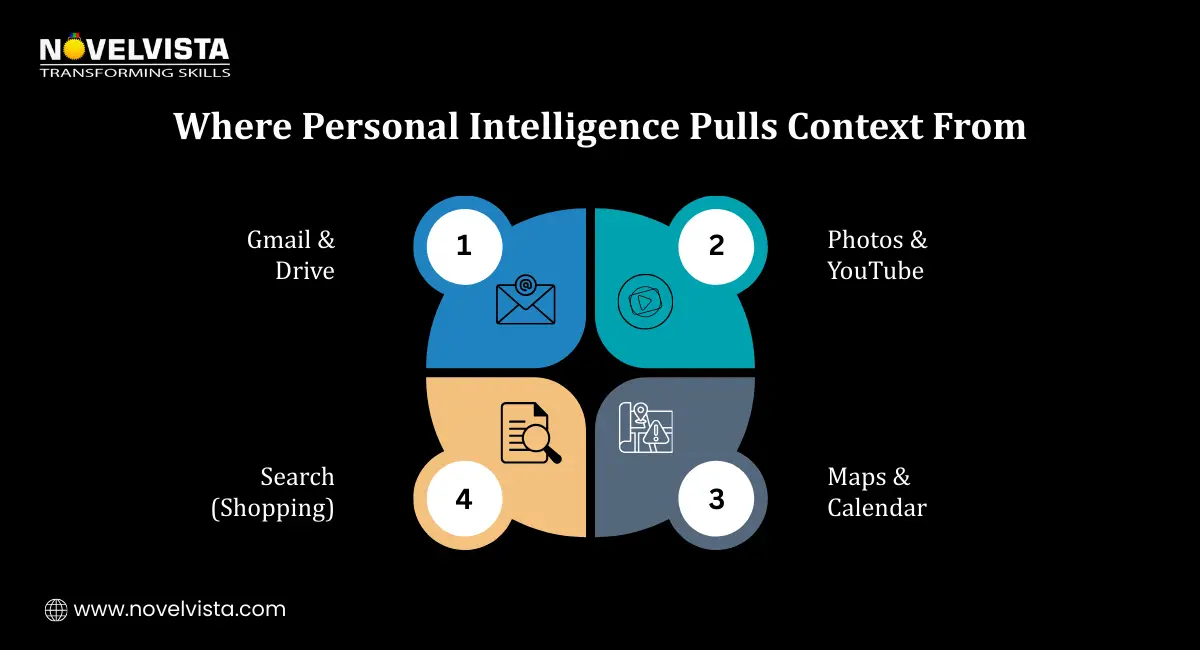

The difference is that, until now, all this data mostly lived in separate silos. Gmail knew one thing. Maps knew another. Photos stayed visual. Search worked moment by moment.

Personal Intelligence changes that.

Instead of treating these services as isolated sources, Google’s AI can now reason across them together. It doesn’t just respond to a query; it draws on patterns, habits, and constraints that already exist in your data.

This marks a shift from reactive AI (“tell me what you want”) to proactive, context-aware intelligence (“I already understand what you’re likely to need”).

And that’s where things start to feel both powerful and a little unsettling.

The term sounds fancy, but the idea behind it is surprisingly simple.

Personal Intelligence is AI that:

It doesn’t just remember what you typed last week. It understands years of signals.

This is where Google’s approach differs from tools like ChatGPT or Claude. Those systems mostly rely on conversation history and what you explicitly share during chats. Google, on the other hand, can draw from long-term, real-world data you’ve already generated across its ecosystem.

The result feels less like chatting with a bot and more like talking to someone who already knows your background.

This is where the concept stops being abstract.

Imagine you’re planning a family trip. Instead of asking dozens of follow-up questions, Personal Intelligence can suggest ideas that already fit your situation.

For example:

In practical moments, it gets even simpler.

You can ask for your license plate number, and it pulls it from Photos.

You can ask about your car insurance renewal, and it finds the details from an old AAA email.

The point isn’t speed. It’s fewer prompts and more relevant answers, because the AI already understands the context.

To make this work, Personal Intelligence draws from multiple data sources you already use, including:

This data is used to:

This is Google’s biggest advantage in the AI race: context at scale. No other AI company has this depth of structured, long-term personal data already connected to everyday life.

And that advantage changes the rules of what AI can do next.

At this point, most people pause and think: Okay… but how safe is all this?

Google seems to know that concern is unavoidable, which is why it’s been unusually clear about how Personal Intelligence works behind the scenes. First, it’s important to know that Personal Intelligence is off by default. Nothing is connected unless you choose to turn it on. You decide which apps (Gmail, Photos, Calendar, and Drive) can be used, and you can change those choices anytime.

There are also practical controls built in. You can start temporary chats, regenerate responses without personalization, or correct the AI when it gets your preferences wrong. In other words, you’re not locked into a single version of “you” that the system decides on its own.

On sensitive data, Google draws some firm boundaries. Things like license plates, inbox contents, and personal photos are not used to train models. Training is limited to select prompts and responses, and the system avoids making proactive assumptions around health or other sensitive topics.

As Google VP Josh Woodward has explained, the system isn’t trained to remember sensitive data; it’s trained to locate it only when you ask. That distinction doesn’t erase privacy concerns, but it does explain the design philosophy: retrieval, not surveillance.
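To make the "off by default, retrieval only when asked" idea concrete, here is a minimal toy sketch in Python. It is purely illustrative: `PersonalContext`, `connect`, `retrieve`, and the sample data are hypothetical names invented for this example and do not correspond to any Google API or to how Personal Intelligence is actually built. It only models three behaviors described above: sources start disconnected, the user opts in per app, and a non-personalized (temporary-style) request skips personal data entirely.

```python
from dataclasses import dataclass, field

# Hypothetical toy model; NOT Google's API or implementation.
SOURCES = ("gmail", "photos", "calendar", "drive")


@dataclass
class PersonalContext:
    # Every source starts disconnected, mirroring "off by default".
    enabled: set = field(default_factory=set)
    # Sample personal data, keyed by source name (assumed shape).
    data: dict = field(default_factory=dict)

    def connect(self, source):
        """Opt a single app in; unknown app names are rejected."""
        if source not in SOURCES:
            raise ValueError(f"unknown source: {source}")
        self.enabled.add(source)

    def disconnect(self, source):
        """Opt a single app back out at any time."""
        self.enabled.discard(source)

    def retrieve(self, query, personalize=True):
        """Search opted-in sources at request time only."""
        if not personalize:
            # Models a temporary chat or a non-personalized regeneration.
            return []
        q = query.lower()
        hits = []
        for source in sorted(self.enabled):
            for item in self.data.get(source, []):
                if q in item.lower():
                    hits.append((source, item))
        return hits


ctx = PersonalContext(data={
    "gmail": ["AAA car insurance renewal notice"],
    "photos": ["Photo of car license plate"],
})

before = ctx.retrieve("insurance")                 # nothing connected yet
ctx.connect("gmail")                               # user opts in to Gmail only
after = ctx.retrieve("insurance")                  # now found in Gmail
plate = ctx.retrieve("license")                    # Photos is still off
temporary = ctx.retrieve("insurance", personalize=False)
```

In this sketch, `before`, `plate`, and `temporary` are all empty lists, while `after` contains the single Gmail match. Data is looked up only when a query arrives and only in sources the user has switched on, which is the distinction the "retrieval, not surveillance" framing is pointing at.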

Whether people fully trust that approach will take time. But the controls are there, and they are more transparent than many expected.

Personal Intelligence isn’t just another checkbox in the AI race. It’s a strategic shift.

It puts Google head-to-head with:

Google’s advantage is simple and uncomfortable: it already has years of structured personal data tied to real behavior. It doesn’t need to guess who you are. It can reference who you’ve been.

Once AI understands context this deeply, the next step is obvious. Search turns into delegation. Assistance turns into orchestration. And AI stops waiting for instructions.

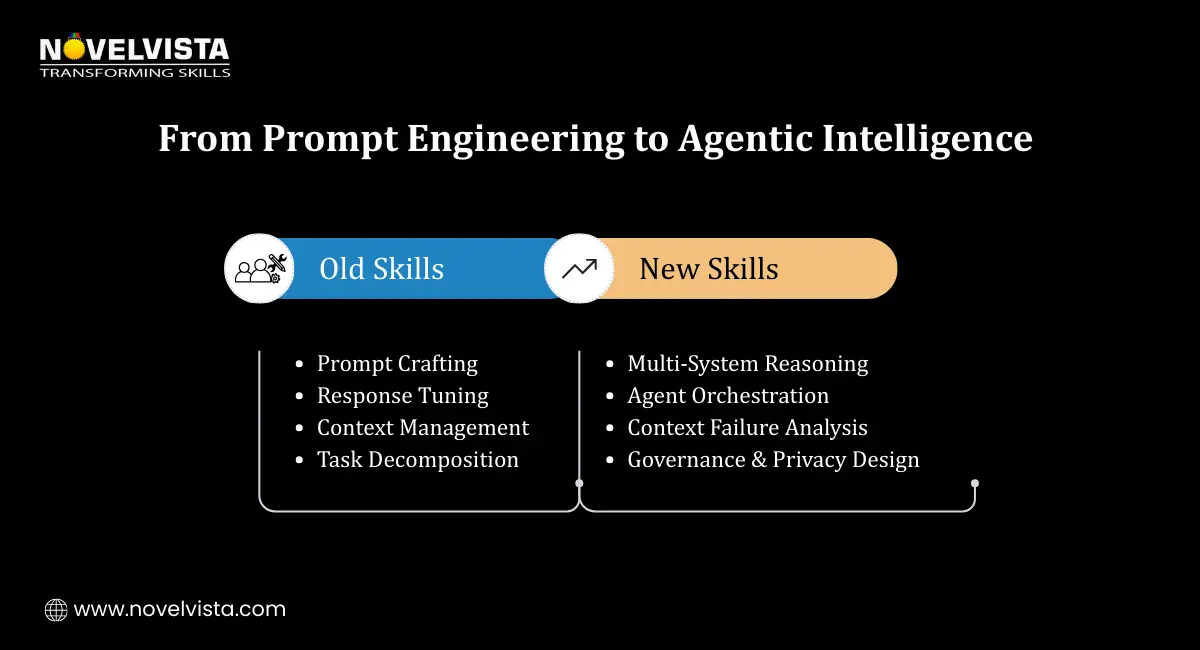

This shift quietly changes what it means to “work with AI.”

We’re moving away from systems that only respond to prompts and toward systems that:

For professionals, this raises the bar. Prompt skills still matter, but they’re no longer enough. You need to understand how AI reasons, where personalization can break, and how privacy, governance, and ethics shape real deployments.

This is what people mean when they talk about agentic AI: not smarter chatbots, but systems that act with context.

As AI becomes more personal and more autonomous, organizations will need people who understand more than just the surface layer.

That’s where focused learning matters.

The NovelVista Generative AI Professional Certification helps professionals understand how modern AI models reason, personalize, and fail in real environments. It’s designed for people who want to move beyond demos and understand real-world use cases, risks, and responsible deployment.

It’s a strong fit for product managers, developers, consultants, marketers, and AI strategists who work close to business decisions.

The Agentic AI certification goes deeper into how AI agents work: multi-step reasoning, tool interaction, safety controls, and governance. It’s directly relevant to systems like Personal Intelligence, where AI doesn’t just answer questions but connects data, tools, and actions.

Together, these skills prepare professionals for what AI is becoming: not just conversational, but operational.

Google’s Personal Intelligence marks a real turning point.

AI is no longer waiting for perfectly worded commands. It’s learning to understand context, habits, and constraints, and to act on them with minimal input. With that power comes responsibility, both for the platforms building these systems and for the professionals shaping how they’re used.

The next phase of AI won’t be defined by who gives the smartest answers. It will be defined by who understands people best, without crossing the line.

And the people who truly understand generative and agentic AI will help decide where that line is drawn.
