
Last Updated On 16/01/2026

Testing teams today are stretched thin. Release cycles are shorter, systems change frequently, and manual testing still consumes time on repetitive work. Generative AI in software testing steps in here by shifting QA from manual execution to intelligent automation driven by learning and context.

This guide explains how generative AI fits into real QA work, where it delivers value, and how teams can adopt it practically without breaking existing workflows.

Software testing is no longer just a final checkpoint before release. It now plays a continuous role in delivery speed, stability, and confidence. Traditional approaches struggle to keep up with modern CI/CD pipelines and frequent changes.

Generative AI in software testing introduces systems that can interpret requirements, learn from test history, and generate meaningful tests automatically. This shift strengthens quality assurance by reducing repetitive effort and letting testers focus on judgment, risk, and user impact rather than mechanical execution.

At its core, generative AI in quality assurance uses deep learning and natural language processing to understand intent rather than just instructions. Instead of relying only on scripts, AI learns from requirements, defects, logs, and outcomes.

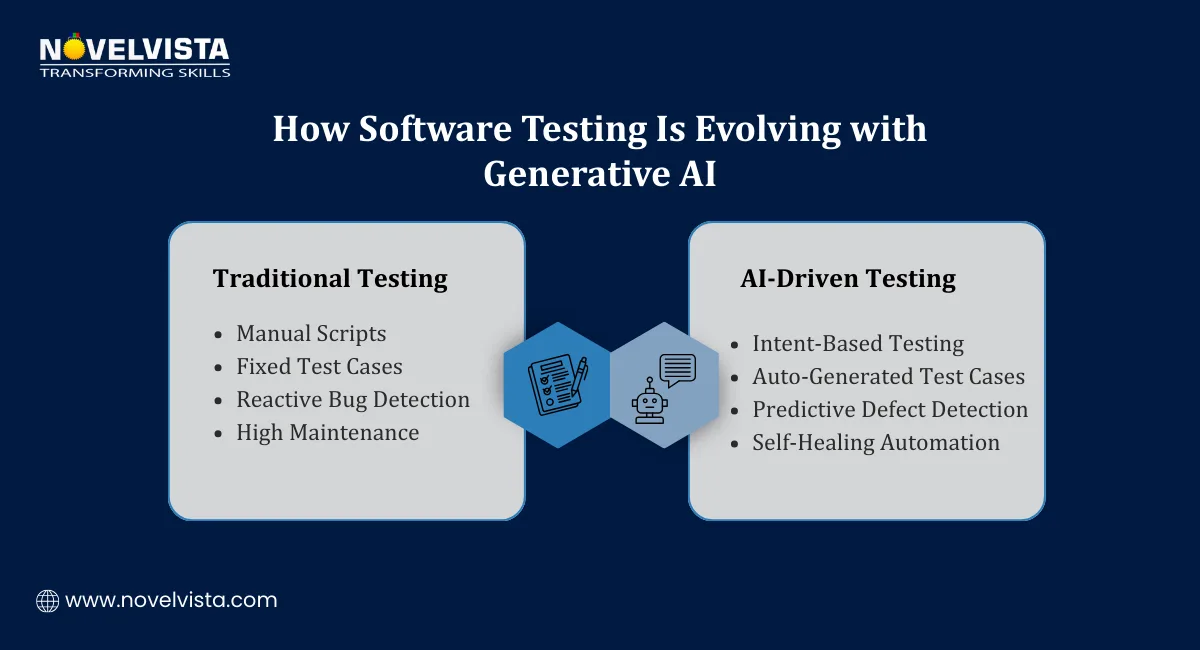

Key changes QA teams experience include:

Moving from script-heavy automation to intent-driven testing

Reducing brittle test scripts through adaptive, self-healing logic

Using execution data to guide smarter test decisions

AI supports testers by handling scale and speed, while humans continue to apply business understanding and judgment.

Teams adopt generative AI in software testing because it delivers clear, measurable improvements across the testing lifecycle.

Faster test creation and execution cycles: Generative AI can produce large sets of relevant test cases directly from requirements or code changes, dramatically reducing manual effort and helping teams keep pace with rapid development cycles.

Improved test coverage, including edge and negative scenarios: By analyzing behavior patterns and historical defects, AI explores edge cases and uncommon paths that manual testers often skip due to time constraints or limited visibility.

Reduced test maintenance through self-healing scripts: When UI elements or workflows change, AI-based tests can adapt by identifying equivalent elements or paths, which reduces broken tests and ongoing maintenance effort.

Early detection of anomalies across large datasets: Generative models analyze logs, metrics, and execution results at scale to identify abnormal patterns early, helping teams address issues before they impact users.

More time for strategic and exploratory testing: By automating repetitive validation tasks, AI frees testers to focus on exploratory testing, usability checks, and risk-based analysis where human insight matters most.

These benefits explain why generative AI in software testing is being treated as a long-term capability, not a temporary trend.
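To make the self-healing idea concrete, here is a minimal sketch of how a test might recover when an element's ID changes. It is an illustration under stated assumptions, not how any particular tool works: the `Element` class, the `heal_locator` function, the similarity weights, and the 0.6 threshold are all hypothetical, and plain string similarity stands in for whatever learned matching a real product uses.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import List, Optional


@dataclass(frozen=True)
class Element:
    """Simplified stand-in for a UI element (hypothetical, not a real driver type)."""
    element_id: str
    tag: str
    text: str


def similarity(a: Element, b: Element) -> float:
    """Score between 0 and 1 from tag equality plus fuzzy id and text matches."""
    tag_score = 1.0 if a.tag == b.tag else 0.0
    id_score = SequenceMatcher(None, a.element_id, b.element_id).ratio()
    text_score = SequenceMatcher(None, a.text.lower(), b.text.lower()).ratio()
    # Weights are illustrative assumptions, not tuned values.
    return 0.2 * tag_score + 0.4 * id_score + 0.4 * text_score


def heal_locator(last_known: Element, page: List[Element],
                 threshold: float = 0.6) -> Optional[Element]:
    """Return an exact id match if present, else the closest candidate above threshold."""
    for candidate in page:
        if candidate.element_id == last_known.element_id:
            return candidate
    best = max(page, key=lambda c: similarity(last_known, c), default=None)
    if best is not None and similarity(last_known, best) >= threshold:
        return best
    return None  # Report a failure rather than act on the wrong element.


# The button's id changed between releases, but its tag and text did not.
old = Element("submit-btn", "button", "Submit order")
new_page = [
    Element("nav-home", "a", "Home"),
    Element("order-submit-button", "button", "Submit order"),
]
healed = heal_locator(old, new_page)
print(healed.element_id if healed else "no match")
```

Returning `None` below the threshold is a deliberate choice in this sketch: a healed locator that silently picks the wrong element can hide a real defect, so any healing step should be logged and reviewable.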

Real-world generative AI use cases in software testing focus on solving daily QA challenges rather than experimenting with technology for its own sake.

Automatic test case generation from requirements or code: AI reads user stories, acceptance criteria, or source code changes and generates relevant functional, regression, and negative test cases aligned with real application behavior.

Synthetic test data creation for privacy-safe testing: Generative models create realistic datasets that reflect production behavior while masking sensitive information, enabling safe testing in regulated or privacy-sensitive environments.

Visual and performance testing across environments: AI compares UI layouts, responsiveness, and performance metrics across devices, browsers, and loads, identifying inconsistencies that manual checks would struggle to catch.

Predictive risk analysis using historical execution data: By studying past defects and test failures, AI predicts which modules are most likely to break, helping teams prioritize testing where it matters most.

Smarter regression testing based on change impact: Instead of running full regression suites every time, AI selects and prioritizes tests based on what actually changed, improving speed without sacrificing confidence.

These generative AI use cases in software testing directly support faster, safer releases.
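Change-based regression selection is the easiest of these use cases to sketch. The example below assumes a hypothetical `coverage_map` that records which source files each test exercises, and it ranks tests by simple overlap with the changed files. An AI-driven tool would likely replace this overlap count with a learned risk score, so treat it as a deterministic stand-in rather than the real technique.

```python
def select_tests(changed_files: set, coverage_map: dict) -> list:
    """Return tests whose covered files overlap the change set, highest overlap first."""
    scored = []
    for test_name, covered_files in coverage_map.items():
        overlap = len(covered_files & changed_files)
        if overlap:
            scored.append((overlap, test_name))
    # Sort by overlap descending, then by name so the order is stable.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored]


# Hypothetical mapping from test name to the source files it exercises.
coverage_map = {
    "test_checkout": {"cart.py", "payment.py"},
    "test_login": {"auth.py"},
    "test_cart_totals": {"cart.py"},
}
selected = select_tests({"cart.py", "payment.py"}, coverage_map)
print(selected)
```

Here `test_login` is skipped because nothing it covers changed. In practice teams usually still run the full suite on a schedule, since a coverage map can miss indirect dependencies.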

Teams often ask how to use generative AI for QA testing without disrupting existing tools or processes. Practical adoption usually follows a gradual, controlled approach.

Feed requirements or acceptance criteria into AI tools: Teams start by providing structured inputs like user stories or API specs, allowing AI to understand intent and generate meaningful test scenarios automatically.

Review and refine AI-generated test cases: Testers validate relevance, remove noise, and add business context, ensuring AI output aligns with real-world expectations and quality standards.

Integrate AI-based tests into CI/CD pipelines: Generated tests are executed automatically during builds and deployments, supporting continuous validation without slowing delivery.

Run tests with analytics-driven feedback loops: Execution results are analyzed to highlight gaps, risks, and failure patterns, guiding future test creation and prioritization.

Continuously improve coverage using execution insights: AI models learn from outcomes over time, making test generation smarter and more focused with each release cycle.

Using generative AI for QA testing well means blending automation efficiency with tester expertise, not replacing one with the other.
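The first two steps above, feeding requirements in and reviewing what comes out, can be sketched roughly as follows. Everything here is an assumption made for illustration: `build_prompt`, `parse_cases`, and `generate_for_review` are hypothetical helpers, the JSON shape is invented, and `fake_model` is a canned stand-in for whichever AI tool a team actually uses.

```python
import json
from typing import Callable, Dict, List

REQUIRED_KEYS = {"title", "steps", "expected"}


def build_prompt(story: str, criteria: List[str]) -> str:
    """Turn a user story and its acceptance criteria into a structured prompt."""
    lines = [
        "Generate test cases as a JSON list of objects with the keys",
        '"title", "steps", and "expected".',
        f"User story: {story}",
        "Acceptance criteria:",
    ]
    lines += [f"- {item}" for item in criteria]
    return "\n".join(lines)


def parse_cases(raw: str) -> List[Dict]:
    """Keep only well-formed cases; malformed model output is dropped, not trusted."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return []
    if not isinstance(data, list):
        return []
    return [c for c in data if isinstance(c, dict) and REQUIRED_KEYS.issubset(c)]


def generate_for_review(story: str, criteria: List[str],
                        model: Callable[[str], str]) -> List[Dict]:
    """Every generated case starts as pending until a tester approves it."""
    cases = parse_cases(model(build_prompt(story, criteria)))
    return [{**case, "status": "pending_review"} for case in cases]


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an AI tool; returns canned output."""
    return json.dumps([
        {"title": "Valid login", "steps": ["Enter valid credentials"],
         "expected": "Dashboard is shown"},
        {"title": "Incomplete case"},  # Missing keys, so it is filtered out.
    ])


cases = generate_for_review(
    "As a user, I want to log in so that I can see my dashboard.",
    ["Valid credentials open the dashboard", "Invalid credentials show an error"],
    fake_model,
)
for case in cases:
    print(case["title"], "->", case["status"])
```

Passing the model in as a plain callable keeps the review gate independent of any vendor, which fits the gradual, low-disruption adoption described above.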

One of the most practical gains from AI adoption comes from generative AI for bug fixing. Instead of reacting late to failures, teams can now analyze issues earlier and with better context.

Automated root cause analysis using logs and failure patterns: Generative AI scans logs, traces, and error patterns across environments to identify likely root causes faster, reducing guesswork and shortening investigation time for complex defects.

Generating defect summaries and reproduction steps: AI creates clear defect descriptions, impact summaries, and reproducible steps by analyzing failed executions, helping developers understand issues without lengthy back-and-forth discussions.

Identifying high-risk areas before production issues occur: By learning from past defects and system behavior, AI highlights modules that show early warning signs, allowing teams to fix issues before they become production incidents.

Visual health dashboards for early warning signals: AI-powered dashboards surface trends in failures, flaky tests, and performance degradation, giving QA and Dev teams visibility into system health at a glance.

This is why generative AI for bug fixing is becoming a core capability, not just a supporting feature.
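A simplified view of the log analysis step: before any model reasons about root causes, failures are typically grouped so that the same error seen many times counts as one pattern. The sketch below does this with regular expressions only. The log format and the `signature` and `top_failure_signatures` helpers are hypothetical, and a generative tool would go further by summarizing each group in plain language.

```python
import re
from collections import Counter
from typing import List, Tuple


def signature(line: str) -> str:
    """Normalize a log line so repeats of the same failure share one signature."""
    line = re.sub(r"0x[0-9a-fA-F]+", "<HEX>", line)  # Memory addresses first.
    line = re.sub(r"\d+", "<N>", line)               # Then timestamps, ids, durations.
    return line.strip()


def top_failure_signatures(log_lines: List[str], limit: int = 3) -> List[Tuple[str, int]]:
    """Count normalized ERROR lines and return the most frequent patterns."""
    errors = [signature(line) for line in log_lines if "ERROR" in line]
    return Counter(errors).most_common(limit)


# Hypothetical log excerpt.
logs = [
    "12:00:01 ERROR Timeout after 3000 ms calling payment-service",
    "12:00:02 INFO Retry scheduled",
    "12:00:07 ERROR Timeout after 3050 ms calling payment-service",
    "12:01:15 ERROR Null reference at 0x7ffd12ab in CartMapper",
]
top = top_failure_signatures(logs)
for pattern, count in top:
    print(count, pattern)
```

Grouping first keeps the volume manageable: two timeout lines collapse into one pattern with a count of 2, which is a more useful starting point for investigation than the raw lines.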

Several modern testing platforms now embed AI capabilities directly into QA workflows. These tools focus on usability rather than forcing teams to become AI experts.

Common capabilities include:

Codeless test creation using natural language inputs: Testers describe scenarios in plain language, and AI converts them into executable tests, reducing dependency on scripting skills.

Self-healing automation and maintenance reduction: AI automatically updates locators and flows when applications change, keeping test suites stable across frequent releases.

Analytics and insight-driven testing: Execution data is analyzed to reveal gaps, risk areas, and optimization opportunities instead of relying only on pass/fail results.

DevOps and CI/CD integration: AI-based testing tools integrate with pipelines to support continuous testing without adding friction.

When choosing tools, teams should focus on scalability, integration ease, and alignment with their QA maturity, not just AI labels.

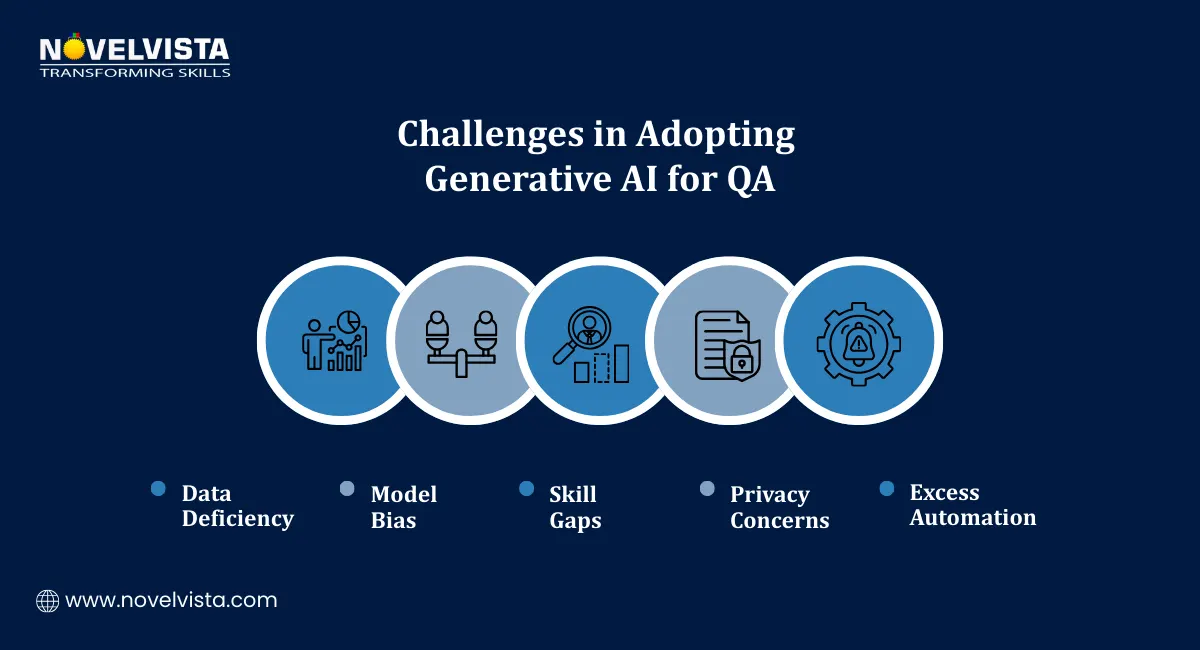

While generative AI in quality assurance offers strong benefits, adoption comes with real challenges that teams must address honestly.

Dependency on high-quality training data: AI systems learn from existing data, so poor test history or inconsistent documentation can lead to weak or misleading outputs.

Risk of biased or inaccurate test generation: Without human review, AI may over-focus on common paths and miss business-critical scenarios, making validation essential.

Skill gaps within QA teams: Testers need time and training to understand AI-driven workflows, interpretation of results, and governance controls.

Ethical and privacy concerns: Using real production data for training requires strong safeguards to avoid data leakage and compliance issues.

Need for hybrid testing models: The best results come when AI automation and human expertise work together, not when one replaces the other.
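One way to reduce the privacy risk above is to avoid production records entirely in lower environments. The sketch below generates customer-like records from a seeded random generator. To be clear about what it is: this is rule-based generation, not a generative model, and the field names, value ranges, and `synthetic_customers` helper are assumptions chosen for illustration. A generative approach would instead aim to learn realistic distributions while still excluding real identities.

```python
import random
import string
from typing import Dict, List


def synthetic_customers(count: int, seed: int = 0) -> List[Dict]:
    """Create fake customer records that contain no real personal data."""
    rng = random.Random(seed)  # Seeded so test data is reproducible across runs.
    records = []
    for index in range(count):
        local_part = "".join(rng.choices(string.ascii_lowercase, k=8))
        records.append({
            "customer_id": f"CUST-{index:05d}",
            # The .test top-level domain is reserved, so no real mailbox can match.
            "email": f"{local_part}@example.test",
            "age": rng.randint(18, 90),
            "balance": round(rng.uniform(0, 10_000), 2),
        })
    return records


sample = synthetic_customers(5, seed=42)
for record in sample:
    print(record)
```

Seeding the generator means a failing test can be rerun against exactly the same data, which matters when synthetic records feed automated pipelines.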

Looking ahead, generative AI in software testing will continue evolving toward deeper integration and smarter decision-making.

Natural language–driven test creation will become more accurate and business-focused

AI will integrate more tightly with CI/CD and DevOps pipelines

Cross-platform and cross-device intelligence will improve test consistency

Explainable AI models will gain importance for trust and governance

These trends will further strengthen the role of generative AI in quality assurance across complex software ecosystems.

To get consistent value, teams need disciplined usage rather than rushed adoption.

Combine AI automation with human expertise: Let AI handle scale and repetition while testers apply judgment, domain knowledge, and exploratory thinking.

Track meaningful metrics: Measure coverage improvement, defect leakage, false positives, and risk reduction instead of only execution speed.

Continuously retrain models: Keep AI learning from new releases, defects, and system behavior to maintain relevance and accuracy.

Start small and scale gradually: Begin with one application or testing layer, validate outcomes, then expand based on measurable success.

Treat AI as an enabler, not a replacement: The goal is stronger quality outcomes, not removing human responsibility.
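Two of the metrics mentioned above are simple ratios, and writing them down helps teams agree on definitions before comparing releases. The formulas below are one common reading, not a standard this article prescribes: defect leakage is taken as the share of all known defects that escaped to production, and the false-alarm share is the fraction of test failures that turned out not to be real defects. The function names and sample numbers are hypothetical.

```python
def defect_leakage(found_in_testing: int, found_in_production: int) -> float:
    """Share of all known defects that were only found in production."""
    total = found_in_testing + found_in_production
    return found_in_production / total if total else 0.0


def false_alarm_share(false_alarms: int, total_failures: int) -> float:
    """Share of reported test failures that were not real defects."""
    return false_alarms / total_failures if total_failures else 0.0


# Hypothetical numbers for one release.
leakage = defect_leakage(found_in_testing=45, found_in_production=5)
noise = false_alarm_share(false_alarms=3, total_failures=12)
print(f"Defect leakage: {leakage:.0%}")
print(f"False-alarm share: {noise:.0%}")
```

Tracking both together guards against a common trap: AI-generated tests can raise coverage and still add noise, and a rising false-alarm share is an early sign that generated tests need more review.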

Generative AI in software testing is clearly reshaping how teams approach quality. It improves speed, expands coverage, uncovers risks earlier, and delivers insights that manual approaches struggle to match. When applied responsibly, it strengthens quality assurance by allowing testers to focus on judgment, creativity, and real user impact.

Teams that adopt AI gradually, with clear goals and strong governance, are best positioned to benefit from this shift.

Next Step: Build Practical AI Testing Skills

If you want to move beyond theory and apply AI confidently in real projects, NovelVista's Generative AI in Software Development Certification Training and Generative AI Professional Certification Training Course are a strong next step. These programs help professionals understand AI concepts, practical use cases, tooling, and governance, with hands-on guidance that connects AI capabilities directly to software quality and delivery outcomes.
